Security experts are accustomed to direct attacks, but some of today’s more insidious incursions succeed in a roundabout way — by planting malware at sites deemed most likely to be visited by the targets of interest. New research suggests these so-called “watering hole” tactics recently have been used as stepping stones to conduct espionage attacks against a host of targets across a variety of industries, including the defense, government, academia, financial services, healthcare and utilities sectors.

Espionage attackers increasingly are setting traps at “watering hole” sites, those frequented by individuals and organizations being targeted.

Some of the earliest details of this trend came in late July 2012 from RSA FirstWatch, which warned of an increasingly common attack technique involving the compromise of legitimate websites specific to a geographic area that the attacker believes will be visited by end users who belong to the organization they wish to penetrate.

At the time, RSA declined to individually name the Web sites used in the attack. But the company shifted course somewhat after researchers from Symantec this month published their own report on the trend (see The Elderwood Project). Taken together, the body of evidence supports multiple, strong connections between these recent watering hole attacks and the Aurora intrusions perpetrated in late 2009 against Google and a number of other high-profile targets.

In a report released today, RSA’s experts hint at — but don’t explicitly name — some of the watering hole sites. Rather, the report redacts the full URLs of the hacked sites that were redirecting to exploit sites in this campaign. However, through Google and its propensity to cache content, we can see firsthand the names of the sites that were compromised in this campaign.

According to RSA, one of the key watering hole sites was “a website of enthusiasts of a lesser known sport,” hxxp://xxxxxxxcurling.com. Later in the paper, RSA lists some of the individual pages at this mystery sporting domain that were involved in the attack (e.g., http://www.xxxxxxxcurling.com/Results/cx/magma/iframe.js). As it happens, running a search on any of these pages turns up a number of recent visitor logs for this site: torontocurling.com. Google cached several of the access logs from this site during the time of the compromise cited in RSA’s paper, and those logs help to fill in the blanks intentionally left by RSA’s research team, or more likely, the lawyers at RSA parent EMC Corp. (Those access logs also contain interesting clues about potential victims of this attack.)

From cached copies of dozens of torontocurling.com access logs, we can see the full URLs of some of the watering hole sites used in this campaign:

- http://cartercenter.org

- http://princegeorgescountymd.gov

- http://rocklandtrust.com (Massachusetts Bank)

- http://ndi.org (National Democratic Institute)

- http://www.rferl.org (Radio Free Europe / Radio Liberty)
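The post doesn't spell out how the logs were read, but the principle is simple: when a hacked page loads the exploit page in an iframe, the visitor's browser sends a Referer header naming the hacked page, and the exploit server's access log records it. Below is a minimal sketch of tallying those referrers, assuming the logs use Apache's "combined" format (an assumption; the cached logs' exact format isn't shown here). The sample lines, IP addresses and example.org/example.net hosts are made up for illustration.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Apache/NGINX "combined" format; the referrer is the second-to-last quoted field.
COMBINED = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)


def referring_hosts(lines, path_prefix):
    """Count referrer hostnames for requests whose path starts with path_prefix."""
    counts = Counter()
    for line in lines:
        m = COMBINED.match(line)
        if not m or not m.group("path").startswith(path_prefix):
            continue
        host = urlsplit(m.group("referrer")).hostname
        if host:  # a "-" referrer (none sent) has no hostname and is skipped
            counts[host] += 1
    return counts


# Made-up sample lines, not real log data.
sample = [
    '203.0.113.7 - - [10/Jul/2012:14:02:11 -0400] "GET /Results/cx/magma/iframe.js HTTP/1.1" 200 512 "http://www.example.org/news/" "Mozilla/4.0 (compatible; MSIE 8.0)"',
    '198.51.100.23 - - [10/Jul/2012:14:05:40 -0400] "GET /Results/cx/magma/iframe.js HTTP/1.1" 200 512 "http://example.net/" "Mozilla/5.0"',
    '203.0.113.9 - - [10/Jul/2012:14:06:02 -0400] "GET /Results/cx/magma/iframe.js HTTP/1.1" 200 512 "-" "Mozilla/5.0"',
]
hosts = referring_hosts(sample, "/Results/cx/magma/")
print(hosts)
```

Each hostname that shows up as a referrer for the exploit path is a candidate watering hole; a real analysis would still need to rule out referrers that are innocent, such as search engines.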

According to RSA, the sites in question were hacked between June and July 2012 and were silently redirecting visitors to exploit pages on torontocurling.com. Among the exploits served by the latter was one for a then-unpatched zero-day vulnerability in Microsoft Windows (XML Core Services/CVE-2012-1889). In that attack, the hacked sites foisted a Trojan horse program named “VPTray.exe” (made to disguise itself as an update from Symantec, which uses the same name for one of its program components).
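RSA describes the compromised servers as “injected with the redirect iframe.” One rough way a site owner might spot that kind of injection is to scan served pages for iframes that point to another host and are invisible to the visitor. This is a minimal sketch using Python's standard `html.parser`; the visibility heuristics (zero- or one-pixel size, the `hidden` attribute, CSS `display:none` or `visibility:hidden`) are assumptions, since the quoted material doesn't say how this campaign's iframe was styled, and an attacker could evade them, for instance by writing the iframe from JavaScript at runtime.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class IframeFinder(HTMLParser):
    """Collect iframe URLs that are invisible AND point at a different host."""

    def __init__(self, page_url):
        super().__init__()
        self.page_url = page_url
        self.page_host = urlsplit(page_url).hostname
        self.suspicious = []

    def handle_starttag(self, tag, attrs):
        if tag != "iframe":  # html.parser lowercases tag names
            return
        # Attributes without a value (e.g. <iframe hidden>) arrive as None.
        a = {name: (value or "") for name, value in attrs}
        src = urljoin(self.page_url, a.get("src", ""))
        style = a.get("style", "").replace(" ", "").lower()
        # Heuristic (assumption): tiny size or hidden via attribute/CSS.
        tiny = a.get("width") in ("0", "1") or a.get("height") in ("0", "1")
        hidden = "hidden" in a or "display:none" in style or "visibility:hidden" in style
        offsite = urlsplit(src).hostname not in (None, self.page_host)
        if (tiny or hidden) and offsite:
            self.suspicious.append(src)


# Made-up page: one invisible off-site iframe, one ordinary same-site embed.
page = (
    '<p>News</p>'
    '<iframe src="http://exploit.example.com/x.html" width="0" height="0"></iframe>'
    '<iframe src="/embed/map" width="600" height="400"></iframe>'
)
finder = IframeFinder("http://www.example.org/news/")
finder.feed(page)
print(finder.suspicious)  # ['http://exploit.example.com/x.html']
```

Requiring both conditions (invisible and off-site) keeps ordinary embedded maps and videos from being flagged, at the cost of missing an exploit hosted on the same compromised server.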

RSA said the second phase of the watering hole attack, from July 16 to 18, 2012, used the same infrastructure but a different exploit: a Java vulnerability (CVE-2012-1723) that Oracle had patched less than a month prior.

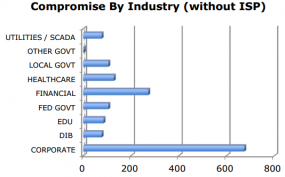

The compromise of these sites likely led to the Trojanization of many high-profile targets, nearly 4,000 in all, RSA concluded.

“Based on our analysis, a total of 32,160 unique hosts, representing 731 unique global organizations, were redirected from compromised web servers injected with the redirect iframe to the exploit server,” the company said. “Of these redirects, 3,934 hosts were seen to download the exploit CAB and JAR files (indicating a successful exploit of the visiting host). This gives a ‘success’ statistic of 12%, which based on our previous understanding of exploit campaigns, indicates a very successful campaign.”
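RSA’s 12% figure is simply the share of redirected hosts that went on to download the exploit files. The division can be checked in a few lines (the numbers are RSA’s; the variable names are illustrative):

```python
# Figures as quoted from RSA's report; variable names are illustrative.
redirected_hosts = 32_160  # hosts sent from compromised sites to the exploit server
exploited_hosts = 3_934    # hosts that then downloaded the exploit CAB/JAR files

success_rate = exploited_hosts / redirected_hosts
print(f"{success_rate:.1%}")  # prints 12.2%, which RSA rounds to 12%
```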

The takeaway from this research is most clearly stated by Symantec in its Elderwood report (PDF): “Any manufacturers who are in the defense supply chain need to be wary of attacks emanating from subsidiaries, business partners, and associated companies, as they may have been compromised and used as a stepping-stone to the true intended target. Companies and individuals should prepare themselves for a new round of attacks in 2013. This is particularly the case for companies who have been compromised in the past and managed to evict the attackers. The knowledge that the attackers gained in their previous compromise will assist them in any future attacks.”

Brian, thanks for alerting us to this. I hope you’re not going to be the next watering hole, but just in case I hope you don’t mind that I keep NoScript on for your site.

Quoted in the article:

“Any manufacturers who are in the defense supply chain need to be wary of attacks emanating from subsidiaries, business partners, and associated companies, as they may have been compromised and used as a stepping-stone to the true intended target.”

So, no more “trusted” web sites (see http://noscript.net/ ). How about using the term “essential” web sites. At least whitelisting essential web sites in the browser will provide protection if a user is redirected to another web site controlled by the miscreants that is serving the exploits.
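To make the allowlisting idea concrete, here is a minimal sketch of the check a browser extension or filtering proxy might apply to each navigation or redirect target. The domain names and the `is_allowed` helper are hypothetical, and this is not how NoScript itself is implemented. Subdomains are matched on a dot boundary so that a look-alike host such as `example.com.evil.test` is not mistaken for `example.com`.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist of "essential" sites; real entries would come from policy.
ESSENTIAL = {"example.com", "partner.example.net"}


def is_allowed(url, allowlist=ESSENTIAL):
    """True if url's host is an allowlisted domain or a subdomain of one."""
    host = (urlsplit(url).hostname or "").rstrip(".")  # hostname is lowercased
    # Match on a dot boundary so "example.com.evil.test" and
    # "notexample.com" do not pass as "example.com".
    return any(host == d or host.endswith("." + d) for d in allowlist)


for target in ("https://www.example.com/login",
               "http://example.com.evil.test/exploit.html",
               "http://partner.example.net/docs"):
    print(target, "->", "allow" if is_allowed(target) else "block")
```

A check like this blocks the redirect hop to an attacker-controlled exploit server, but, as the following comment points out, it does nothing when an allowlisted site serves the exploit itself.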

But, what if the miscreants are serving exploits directly from an essential web site? What’s Plan B? Especially considering that the exploits, in many cases, are zero-day exploits (sometimes kernel exploits).

In the current security climate, BYOD is a disaster waiting to happen. So is careless social networking. And employees using their home computers for work-related activities, on evenings and weekends, is also dangerous, not to mention employees’ home networks (e.g., WEP, default router passwords left in place). If one tosses in home computing, the list of watering holes is much larger than the one in the above quote.

This is why I was so disappointed in Windows 8, along with Linux and many other ‘modern’ OSes. There has to be a better way to make permanently corrupting a system much harder, make corrupting it even for a session much harder, and make detecting and preventing these problems easier.

Java and Flash simply need to go away, they have proven time and again that they cannot create a product that will work with any trust. BYOD is also a mess as it is very difficult even with encryption and other protections to do anything about a simple keylogger. Microsoft should be slapped, how many years now has their browser been key to screwing up the operating system?

As much as I really like to step on Microsoft’s toes, I guess there’s no excuse for any company that fails to compete in cybersecurity after the publication of ‘The NIST Handbook to Computer Security’ in 1995:

http://csrc.nist.gov/publications/nistpubs/800-12/800-12-html/

Sandbox your casual surfing to a VM. Sandbox your “business essential” surfing to another VM. Internal surfing can be on your native OS (or if you’re really paranoid, sandbox it to a VM as well). Then not only does an exploit have to take over the Browser/OS, it has to break out of the VM as well. Use snapshots to revert the VM each time you power it on (create a new snapshot after patching).

Another way to do this for a business environment is with Terminal Services/Citrix/VDI. Have systems that can be used for casual surfing and another set for “business essential” with whitelists of where they can go. When the user logs off, everything is reset (except the profile bookmarks).

Yeah, I could do that, but that only limits the malware I get; it doesn’t stop the spread or make infection categorically harder to accomplish. Microsoft could have easily designed Windows 8 to do these things at a core level of its OS, incorporating application whitelisting, VMs, and sandboxing without proprietary issues or the usual Windows incompatibilities with third-party solutions. They could easily provide a way to actually roll back the entire OS in perfect fidelity to a given date, instead of just carrying over some settings with System Restore. This would take more disk space, but it would be a small price to pay for a greatly reduced threat across the board from malware.

“Sandbox your casual surfing to a VM. Sandbox your ‘business essential’ surfing to another VM.”

You’ve just described Qubes OS. Qubes currently (version 1.0) runs modified Fedora AppVMs, but Windows AppVMs are in the works. Just be sure to keep the AppVM template clean. Qubes’ target audience is the enterprise.

Similarly, with VDI, one must keep the desktop image(s) clean. In addition, the desktop image refresh frequency should be high enough to keep the miscreants at bay (think of Sisyphus).

Presumably, a knowledgeable and experienced sysadmin would have a much better chance of keeping the template/image clean than would ordinary enterprise end users keeping their own desktops clean.

P.S. Faronics also has an interesting solution with their Anti-Executable and Deep Freeze products. AE makes it difficult for the miscreants to get a foot in the door during desktop use, while DF provides reboot to restore. A certain amount of Windows hardening would add to AE’s effectiveness during desktop use.

JasonR wrote: “Sandbox your casual surfing to a VM.”

Won’t that lose you things like logs and browser histories? Wouldn’t it be better to partition your drive into system and non-system areas? The system area you periodically blow away, whether via a sandbox VM or some other way, and the non-system areas you keep. The latter would contain your user directories, log directories, etc.

One has to wonder if the internet for all uses, including Gov., Commercial, Private etc is going to become a No-Go-Zone, as the amount of malicious activity makes the net as a system unworkable.

Can anyone guarantee that a net-connected device is uncompromised, even now?

It’s pretty hard to prove any particular machine is compromised, but there are plenty that are known to be compromised that are still allowed access to the internet. If you’re going to hack a high profile website, you need all those other compromised machines to cover your tracks. There’s no computer too unimportant to protect. We don’t have to make it so friggin’ easy for them.

So they disconnected all the links between potentially compromised networks. Well and good. How can they be sure that the malware hasn’t already spread over those links?

Or recreating the links

It is disgusting how you get your private and confidential information. You ruin some people’s lives with that.

Just by using NoScript, one should be able to prevent this kind of attack. JasonR does have some good ideas for security, but you have to assume that not everybody who is having these issues is going to have any skills with a computer (yes, a VM is really easy to set up, but still, I always think back to my parents when thinking of average technical skill).

Along with that, you have to remember that a VM runs a lot slower than running your browser session natively on your current OS, and many older computers, which may need to be used for a variety of reasons, can’t always run one at a reasonable speed.

Using VMs and other measures may safeguard your computer, but that won’t help your security in other areas. For example, if the hackers put a keylogger on your system, blowing that system away may remove the malware, but by then it may be too late. Not only may the hackers have grabbed some of your passwords, but by blowing the system away you yourself may be unaware that they have done so… until the hackers start infiltrating other, less protected systems using YOUR passwords.

I may be biased about it and I know I don’t know all there is to know about security, but wouldn’t using OpenVMS solve some of the problems with servers getting infected? Of course, the apps need to be written so that they can run on OpenVMS systems, but none of the applications I supported when I was supporting VMS servers ever got infected.

If you used a VDI front-end to run OpenVMS terminal emulation (think VT220), it might be a more pleasant experience.

Like I said, I don’t know if it’s viable. But I leave it to the group to discuss.

Thanks for reading.