Virtually all compilers (programs that transform human-readable source code into computer-executable machine code) are vulnerable to an insidious attack in which an adversary can introduce targeted vulnerabilities into any software without being detected, new research released today warns. The vulnerability disclosure was coordinated with multiple organizations, some of which are now releasing updates to address the security weakness.

Researchers with the University of Cambridge discovered a bug that affects most computer code compilers and many software development environments. At issue is a component of the digital text encoding standard Unicode, which allows computers to exchange information regardless of the language used. Unicode currently defines more than 143,000 characters across 154 different language scripts (in addition to many non-script character sets, such as emojis).

Specifically, the weakness involves Unicode’s bi-directional or “Bidi” algorithm, which handles displaying text that includes mixed scripts with different display orders, such as Arabic — which is read right to left — and English (left to right).

But computer systems need to have a deterministic way of resolving conflicting directionality in text. Enter the “Bidi override,” which can be used to make left-to-right text read right-to-left, and vice versa.

“In some scenarios, the default ordering set by the Bidi Algorithm may not be sufficient,” the Cambridge researchers wrote. “For these cases, Bidi override control characters enable switching the display ordering of groups of characters.”

Bidi overrides enable even single-script characters to be displayed in an order different from their logical encoding. As the researchers point out, this fact has previously been exploited to disguise the file extensions of malware disseminated via email.
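To make the file-extension trick concrete, here is a minimal Python sketch. The file name is invented for illustration, and the comment about how it renders assumes a viewer that applies the Bidi algorithm; the helper names are hypothetical.

```python
import os

RLO = "\u202e"  # RIGHT-TO-LEFT OVERRIDE

# Hypothetical file name: the characters after RLO are stored as "fdp.exe",
# but a Bidi-aware viewer draws them right to left, so the name tends to
# appear as "invoice_exe.pdf".
stored_name = "invoice_" + RLO + "fdp.exe"

def real_extension(name: str) -> str:
    """Return the extension as stored (logical order), which is what the OS sees."""
    return os.path.splitext(name)[1]

def has_override(name: str) -> bool:
    """Flag names that contain an explicit Bidi override character."""
    return any(ch in name for ch in ("\u202d", "\u202e"))

print(real_extension(stored_name))  # .exe
print(has_override(stored_name))    # True
```

The check works on the stored character order, which is why it is not fooled by what the name looks like on screen.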

Here’s the problem: Most programming languages let you put these Bidi overrides in comments and strings. Comments are a problem because compilers and interpreters ignore all text inside them, including control characters. String literals are a problem because most programming languages allow them to contain arbitrary characters, again including control characters.

“So you can use them in source code that appears innocuous to a human reviewer [that] can actually do something nasty,” said Ross Anderson, a professor of computer security at Cambridge and co-author of the research. “That’s bad news for projects like Linux and Webkit that accept contributions from random people, subject them to manual review, then incorporate them into critical code. This vulnerability is, as far as I know, the first one to affect almost everything.”

The research paper, which dubbed the vulnerability “Trojan Source,” notes that while both comments and strings will have syntax-specific semantics indicating their start and end, these bounds are not respected by Bidi overrides. From the paper:

“Therefore, by placing Bidi override characters exclusively within comments and strings, we can smuggle them into source code in a manner that most compilers will accept. Our key insight is that we can reorder source code characters in such a way that the resulting display order also represents syntactically valid source code.”

“Bringing all this together, we arrive at a novel supply-chain attack on source code. By injecting Unicode Bidi override characters into comments and strings, an adversary can produce syntactically-valid source code in most modern languages for which the display order of characters presents logic that diverges from the real logic. In effect, we anagram program A into program B.”
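One way to see the gap between display order and real order is to print a line with its invisible controls spelled out. The Python sketch below is an illustration only: the sample line is made up in the spirit of the paper's examples, not copied from it, and `reveal_bidi` is a hypothetical helper name.

```python
import unicodedata

# Bidi class codes that mark explicit directional formatting characters.
BIDI_CONTROL_CLASSES = {"LRE", "RLE", "LRO", "RLO", "PDF", "LRI", "RLI", "FSI", "PDI"}

def reveal_bidi(text: str) -> str:
    """Replace each directional formatting character with a visible tag."""
    out = []
    for ch in text:
        cls = unicodedata.bidirectional(ch)
        out.append(f"<{cls}>" if cls in BIDI_CONTROL_CLASSES else ch)
    return "".join(out)

# Illustrative line only: a comment carrying an override and two isolates.
suspicious = "/*\u202e } \u2066if (is_admin)\u2069 \u2066 begin admins only */"
print(reveal_bidi(suspicious))
# /*<RLO> } <LRI>if (is_admin)<PDI> <LRI> begin admins only */
```

The tagged output shows what a compiler actually receives: everything between `/*` and `*/` is a single comment, whatever the rendered line may suggest.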

Anderson said such an attack could be challenging for a human code reviewer to detect, as the rendered source code looks perfectly acceptable.

“If the change in logic is subtle enough to go undetected in subsequent testing, an adversary could introduce targeted vulnerabilities without being detected,” he said.

Equally concerning is that Bidi override characters persist through the copy-and-paste functions on most modern browsers, editors, and operating systems.

“Any developer who copies code from an untrusted source into a protected code base may inadvertently introduce an invisible vulnerability,” Anderson told KrebsOnSecurity. “Such code copying is a significant source of real-world security exploits.”

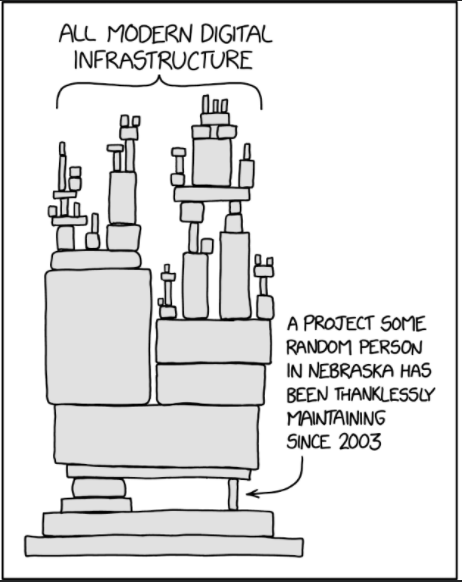

Image: XKCD.com/2347/

Matthew Green, an associate professor at the Johns Hopkins Information Security Institute, said the Cambridge research clearly shows that most compilers can be tricked with Unicode into processing code in a different way than a reader would expect it to be processed.

“Before reading this paper, the idea that Unicode could be exploited in some way wouldn’t have surprised me,” Green told KrebsOnSecurity. “What does surprise me is how many compilers will happily parse Unicode without any defenses, and how effective their right-to-left encoding technique is at sneaking code into codebases. That’s a really clever trick I didn’t even know was possible. Yikes.”

Green said the good news is that the researchers conducted a widespread vulnerability scan, but were unable to find evidence that anyone was exploiting this. Yet.

“The bad news is that there were no defenses to it, and now that people know about it they might start exploiting it,” Green said. “Hopefully compiler and code editor developers will patch this quickly! But since some people don’t update their development tools regularly there will be some risk for a while at least.”

Nicholas Weaver, a lecturer at the computer science department at University of California, Berkeley, said the Cambridge research presents “a very simple, elegant set of attacks that could make supply chain attacks much, much worse.”

“It is already hard for humans to tell ‘this is OK’ from ‘this is evil’ in source code,” Weaver said. “With this attack, you can use the shift in directionality to change how things render with comments and strings so that, for example, ‘This is okay’ is how it renders, but ‘This is’ okay is how it exists in the code. This fortunately has a very easy signature to scan for, so compilers can [detect] it if they encounter it in the future.”

The latter half of the Cambridge paper is a fascinating case study on the complexities of orchestrating vulnerability disclosure with so many affected programming languages and software firms. The researchers said they offered a 99-day embargo period following their initial disclosure to allow affected products to be repaired with software updates.

“We met a variety of responses ranging from patching commitments and bug bounties to quick dismissal and references to legal policies,” the researchers wrote. “Of the nineteen software suppliers with whom we engaged, seven used an outsourced platform for receiving vulnerability disclosures, six had dedicated web portals for vulnerability disclosures, four accepted disclosures via PGP-encrypted email, and two accepted disclosures only via non-PGP email. They all confirmed receipt of our disclosure, and ultimately nine of them committed to releasing a patch.”

Eleven of the recipients had bug bounty programs offering payment for vulnerability disclosures. But of these, only five paid bounties, with an average payment of $2,246 and a range of $4,475, the researchers reported.

Anderson said that so far about half of the organizations contacted that maintain affected programming languages have promised patches. Others are dragging their feet.

“We’ll monitor their deployment over the next few days,” Anderson said. “We also expect action from Github, Gitlab and Atlassian, so their tools should detect attacks on code in languages that still lack bidi character filtering.”

As for what needs to be done about Trojan Source, the researchers urge governments and firms that rely on critical software to identify their suppliers’ posture, exert pressure on them to implement adequate defenses, and ensure that any gaps are covered by controls elsewhere in their toolchain.

“The fact that the Trojan Source vulnerability affects almost all computer languages makes it a rare opportunity for a system-wide and ecologically valid cross-platform and cross-vendor comparison of responses,” the paper concludes. “As powerful supply-chain attacks can be launched easily using these techniques, it is essential for organizations that participate in a software supply chain to implement defenses.”

Weaver called the research “really good work at stopping something before it becomes a problem.”

“The coordinated disclosure lessons are an excellent study in what it takes to fix these problems,” he said. “The vulnerability is real but also highlights the even larger vulnerability of the shifting sand of dependencies and packages that our modern code relies on.”

Rust has released a security advisory for this security weakness, which is being tracked as CVE-2021-42574 and CVE-2021-42694. Additional security advisories from other affected languages will be added as updates here.

The Trojan Source research paper is available here (PDF).

These Western researchers are whistling in the dark if they think our adversaries haven’t already discovered this vulnerability. As I’ve always said over the years, “It’s over, folks, we just don’t know it yet.”

If “Eastern” adversaries have already discovered this vulnerability (seemingly by default on account of their nefarious intelligence), why hasn’t it been exploited in a major way yet? Did the researchers at Cambridge miss the exploits? Or fail to tell the world about them?

They take time to develop and also to discover so why do you expect them all to be known now?

Because they’re in it for the money. I don’t know enough to comment on their skill and thoroughness, but have watched online crime for many years. The crooks make their money by discovering flaws/vulnerabilities and exploiting them before defenders of security find out about it. In an environment as fluid and rapidly changing as online security, it’s unlikely that the aggressors wouldn’t move quickly to exploit a major weakness. If any expert is actually reading this and can address this little debate, it would be appreciated.

“The crooks” vs “the defenders of security”. When state intelligence agencies like the NSA and others stockpile zero-day exploits without telling Microsoft et al. about any of them, making all of us less safe, which bucket would you say that falls in?

That’s not how it works, sir. No serious adversary uses it for cheap stunts or ransom or whatever. The serious adversary holds all its cards until it means something, like, say, the invasion of Taiwan, or a conflict in the South China Sea results in naval losses or something. THEN use it; Blink, there goes the GPS constellation and all the systems that rely on the GPS clock; Blink, there goes the internet (selectively); Blink, there go all the satellites. The script is probably already written, awaiting authorization from Premier Gung Pootie Chung to hit the ‘Enter’ button.

Stop relying on computers for intelligence. They are unreliable. Simple as that.

Computers are unreliable compared to what? People?

Little Bobby Drop Tables grew up.

https://xkcd.com/327/

https://xkcd.com/1137/

Isn’t this just an extension of Ken Thompson’s paper on trust? https://www.cs.cmu.edu/~rdriley/487/papers/Thompson_1984_ReflectionsonTrustingTrust.pdf

It’s the first reference cited…

I discovered that the iPhone 6 is used as reverse DNS by hackers for pedophile websites and forums. It’s not a legend, it’s real.

FWIW, this “novel attack” was known for 5 years at least, and I’m certain I wasn’t the one who discovered it, rather just made a repro in Go: https://github.com/golang/go/issues/20209

“The bad news is that there were no defenses to it, and now that people know about it they might start exploiting it.” Has the paper been translated into Russian or Chinese? If not, it will be. Soon.

Doesn’t that assume they can’t read English?

lol, if you arm chair security experts think russians can’t read english then Merica really might be screwed.

they have a term called maskirovannoye.. they can code and write comments in chinese to make it look like someone else.. whether that be north am, south am, europe, asia, language typing..get the point.

in the 80’s there was evidence of deep foot printing by them in many gooberment computers and had a hack for when you press control, alt, delete to sub in a pass catcher…

read some past sec books.. its all there..

Where was the embargo of detailed release as compiler and dev tool builders update and offer fix here? This reads as if there was zero observation of this established practice and these researchers are grabbing the notoriety brass ring here?

“We met a variety of responses ranging from patching commitments and bug bounties to quick dismissal and references to legal policies,” the researchers wrote. “Of the nineteen software suppliers with whom we engaged, seven used an outsourced platform for receiving vulnerability disclosures, six had dedicated web portals for vulnerability disclosures, four accepted disclosures via PGP-encrypted email, and two accepted disclosures only via non-PGP email. They all confirmed receipt of our disclosure, and ultimately nine of them committed to releasing a patch.”

Did you read the article?

“The latter half of the Cambridge paper is a fascinating case study on the complexities of orchestrating vulnerability disclosure with so many affected programming languages and software firms. The researchers said they offered a 99-day embargo period following their initial disclosure to allow affected products to be repaired with software updates…”

You youngsters! There’s a simple solution to this problem. Everyone throw out their monitors. Replace them with teletypes, like we used back in the good old days. Overprinting will then be obvious. An ancillary benefit will be the extension of ASCII art to Unicode art.

Yup, I don’t think Linux kernel is at any risk at all. Too many people reading mail and using git from a shell.

If you want to attack a core component of the internet, try systemd instead. Those punks use GUIs.

I’m pretty sure you’re being facetious, but in case you’re not — be aware that your shell and associated tools are most likely using UTF8, i.e., Unicode, too. I just recently encountered the reality of this when I accidentally misconfigured some locale settings and suddenly had ‘less’ and ‘vi’ showing me weird characters in text files, etc., etc. You cannot hide… well, you *can*, but it’s harder than you might think.

snake plissken was right

It shouldn’t be too hard to trap bidi overrides for examination in new code. The problem is devising a fix that doesn’t break a lot of legitimate code that’s already in use.

yetanotherknowitall is surely right: Search for bidi overrides for manual examination in code. If they are in a comment as opposed to a text parser, it’s difficult for the submitter to claim innocence.

I had a failure when trying to reproduce this experiment.

According to the section VI. EVALUATION, A. Experimental Setup

> Each proof of concept is a program with source code

> that, when rendered, displays logic indicating that the program

> should have no output; however, the compiled version of each

> program outputs the text ‘You are an admin.’

The paper fails to describe the procedure used to obtain the renderings, which may lead to me using a wrong setup for my replication study.

My experimental setup was as follows: a standard Visual Studio Code install, without additional plugins. The code was cloned from the official GitHub repository and the directory opened in VSC. The option not to trust the origin of the source was selected to disable advanced parsing of the source. Each source file was selected from

In each case, the code was rendered with syntax highlighting. Each rendering clearly indicated the comment. The RIGHT-TO-LEFT and LEFT-TO-RIGHT rendering of text was done correctly. Syntax highlighting was also done correctly, clearly indicating the logic behind the code. Even more, closing comment characters rendered inside a comment block really caught the eye and clearly indicated that there was something wrong with the code.

In case of glyph confusion: hovering the mouse pointer or clicking on the function call (which is standard procedure during code review) clearly rendered highlight over the correct function making visual confusion of renderings impossible.

To conclude, none of the claims that rendering done by Visual Studio Code hid the real logic behind the code could be replicated.

It’s baffling that this issue wasn’t spotted during the peer review process.

I suspect many PRs are taken without pushing them through an IDE, e.g. on sites like gitlab and github, or in repository tools.

There are some people who bother to sync to a branch and open it in IDE, but those people are much fewer than those who use some sort of web/diff tool to review code.

Generally speaking, most people don’t have a habit of hovering a mouse over code to search for misrepresentation of the code.

But sure, if you pay very careful scrutiny, you will find problems.

Another way to find problems is to compile the code, then look at a disassembly and see what it is actually doing. There are probably a couple hundred programmers in the world who regularly do this sort of thing – and usually they’re more concerned with performance and programming language research, not necessarily security auditing.

So yeah. It is possible to detect. It can probably slip by many people’s typical trust filters, because they think that auditing the code is sufficient, and they think that the code they are auditing in presumably “plain textual” tools is representative of what will actually execute.

Of course, if you simply filter for “are there bidi markers in this code?” that’s probably enough to understand that you need to be more careful. Or if all your tools and editors have a “strip all comments” mode, that will also help.

I agree, any program doing syntax highlighting is going to obey the actual order of the characters just like the compiler. Bidi source code will display with obvious incorrect syntax highlighting.

I am working in Hebrew, right to left, mixed with English and numeric data left to right. We use LTR and RTL all the time. Even now the Unicode implementation in many environments has bugs. When messing with the compiler to stop/filter out RTL and LTR characters in displayed strings probably zillions of bugs in apps in Hebrew and Arabic will show up. Having markers in IDE makes sense.

Does this realistically affect any projects that aren’t already in unicode?

I accidentally made a source file unicode. It compiled fine, but the PR was “changes in binary file”.

Soon to be implemented? Tiered levels of code reviews. The most extensive and time consuming development, testing, reviews, and final code corrections will be offered to those organizations willing to pay more for code that has the highest level of review and greatest security to avoid the expense of being hacked. Also expect fake “highest level” code to be marketed because product reviews will be extremely expensive.

From the description, it sounds like a “strip all comments” feature would be enough to just do a normal audit of the code.

Probably not costly to implement, and reasonable enough to demand not be put behind a tiered support feature.

At the very least, free tools will probably be available (may already be available) if you need to do this without paying for an upgrade.

The internet has become a dumpster fire. We need to get rid of it.

I’ve been on websites that contain the Arabic language and everything looks backwards to me with the right to left.

What did they discover? These bugs have been known for like 20 years. Even the eclipse site has one from 10+ years ago

https://bugs.eclipse.org/bugs/show_bug.cgi?id=339146

It’s not so much discovered as widely and publicly reported that matters. That bug report sat there for a solid eight years before being CLOSED WONTFIX, which alongside CLOSED INVALID is an all-too-common response to things like this. Now that the issue has been given some publicity, they’ll finally be forced to fix it.

Makes me think any open source project code base is suspect. I don’t think the average script kiddie will exploit this, but Nobelium/Cozy Bear prolly will.

Another headache…

A similar issue is the zero-width space (zws.im). It’s possible to create links that appear different from what they actually are. Thanks Unicode for continuing to make me want to use a hex editor for everything. Seriously. This would NOT be an issue if it were limited, rather than adding new characters, new emoji, new languages etc. Terry Davis was unironically right in limiting his OS to 8-bit ASCII.
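A hex editor is one option; another is to list the invisible "format" characters directly. The Python sketch below is a rough illustration, not a complete detector: it relies on Unicode general category Cf, which covers the zero-width space as well as the Bidi controls, and the host name is hypothetical.

```python
import unicodedata

def find_format_chars(text: str):
    """List (index, code point, name) for every character in category Cf."""
    return [
        (i, f"U+{ord(ch):04X}", unicodedata.name(ch, "UNNAMED"))
        for i, ch in enumerate(text)
        if unicodedata.category(ch) == "Cf"
    ]

link = "exam\u200bple.com"  # hypothetical host name with a zero-width space
print(find_format_chars(link))  # [(4, 'U+200B', 'ZERO WIDTH SPACE')]
```

Category Cf also includes legitimate characters such as the soft hyphen, so a hit is a reason to look closer rather than proof of tampering.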

With C# instead of comments like this:

// comment

you could use this:

/* comment */

You can do this even without Unicode tricks. Years ago, with 8-bit character sets, I messed with a login-check routine that had two variables allow_login, one with a Cyrillic ‘o’, and carefully checked and set one while at one point allowing login based on the other. If you jumped through a few other hoops to pass some checks (couldn’t just allow anyone in) you could get in and bypass the password check.
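A look-alike identifier of this kind can be sketched in Python. This is an assumption-laden illustration: it uses Unicode rather than an 8-bit character set, and it guesses the script from the first word of each character's Unicode name, which is a crude heuristic rather than the official Script property. The function name is hypothetical.

```python
import unicodedata

def scripts_in(identifier: str) -> set:
    """Approximate the scripts used, via the first word of each letter's Unicode name."""
    return {
        unicodedata.name(ch).split()[0]
        for ch in identifier
        if ch.isalpha()
    }

latin = "allow_login"
mixed = "all\u043ew_login"  # U+043E CYRILLIC SMALL LETTER O in place of "o"

print(scripts_in(latin))  # {'LATIN'}
print(latin == mixed)     # False
```

An identifier whose letters come from more than one script is a reasonable thing for a review tool to flag, since the two names above are different variables that may look identical on screen.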

can you please provide a simple poc?

1. It seems that JetBrains products (e.g. IntelliJ) actually render these characters (they display “LRI”, “PDI”, etc. in a box where these characters appear). It’s actually hard to miss.

2. It should be possible to find occurrences in projects by doing this regex search in all files: [\u202a-\u202e\u2066-\u2069]

2.1. I tested this in IntelliJ and it works.
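The same regex can be run outside an IDE. Below is a minimal Python sketch that applies that character class to a block of text and reports where each match sits; the function name and the sample text are made up for illustration.

```python
import re

# Same character class as the regex in the comment above.
BIDI_RE = re.compile("[\u202a-\u202e\u2066-\u2069]")

def scan_text(text: str):
    """Return (line number, column, code point) for each match, 1-based."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for m in BIDI_RE.finditer(line):
            hits.append((lineno, m.start() + 1, f"U+{ord(m.group()):04X}"))
    return hits

sample = "int x = 1;\n/* note \u202e hidden */\n"
print(scan_text(sample))  # [(2, 9, 'U+202E')]
```

Reading each source file as UTF-8 and passing its contents to `scan_text` would be enough for a simple pre-commit check.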

So before installing package updates from “official repos,” must we check the package file on the VirusTotal site? Has SHA-256 become a security risk?

https://research.swtch.com/trojan

Overblown much? Chance that this has already been exploited in private codebases: infinitesimal. Chance that this has already been exploited in public repos: small. No defenses? Maybe in the past. Now it’ll be built in to public hosting sites. I know you’ve got to write articles … but … big deal.

It’s a big deal in that it’s still an ongoing deal that hasn’t been undealt.

“Now it’ll be built in to public hosting sites.” – Eventually maybe.

That’s overstating it, much more overstating it than Brian might have,

which remains to be seen by your own tacit admission.

“Chance that this has already been exploited in private codebases: infinitesimal.”

-Is not a fact that you can just chuck into your imaginary fire. Prove that.

Demonstrate that it’s true since you’re going to claim it so aggressively,

as to pretend this is completely a non-issue nothingburger decades on.

No defense? BS

It should not be very hard to write a simple program (in C?) that simply opens a file in binary mode and looks for those control characters and, if it spots them, kicks out a warning listing the line number where it found them.

This could be done as part of a source code control system or as a defensive run over a current source repository.

Since you’re opening the file as BINARY, it’s not going to care one bit if it encounters ANY type of Unicode control sequence(s); it’s just a sequence of bytes as far as it is concerned.
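The program described above might look roughly like this, sketched in Python rather than C. One assumption matters: the byte patterns are the UTF-8 encodings of the controls, so a file saved as UTF-16 would not match. The function names are hypothetical.

```python
import re

# UTF-8 encodings of U+202A..U+202E and U+2066..U+2069.
BIDI_BYTES = re.compile(rb"\xe2\x80[\xaa-\xae]|\xe2\x81[\xa6-\xa9]")

def scan_bytes(data: bytes):
    """Return the 1-based line numbers that contain a Bidi control sequence."""
    lines = []
    for m in BIDI_BYTES.finditer(data):
        lineno = data.count(b"\n", 0, m.start()) + 1
        if lineno not in lines:
            lines.append(lineno)
    return lines

def scan_file(path: str):
    """Open the file in binary mode, as the comment suggests, and scan it."""
    with open(path, "rb") as fh:
        return scan_bytes(fh.read())

sample = "ok\nif (x) /* \u2066 */\nok\n".encode("utf-8")
print(scan_bytes(sample))  # [2]
```

Because it never decodes the file, the scan cannot be tripped up by malformed text, but it is only as good as the encoding assumption.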

The sky is NOT falling! LMAO

regarding ‘Trojan Source’ Attack Abuses Unicode to Inject Vulnerabilities Into Code’ article:

quinn michaels and securityprime exposed this vulnerability 11 months ago, exactly on december 11, 2020. quinn at quinnmichaels.com, #quinnmichaels youtube.

quinn michaels was kidnapped as a baby and for years has reached out for assistance from agencies and individuals.

because cambridge university disclosed this threat, everyone will now accept this as truth. because quinn michaels disclosed this almost a year ago to the public, no one gives him a thought, nor takes him seriously when he attempts daily to expose and share important truths relating to AI safety and security of all. not even one computer programmer reached out to him that i am aware of. the cyber vulnerabilities affect everyone on the internet, the safety and security of citizens, institutions worldwide. quinn found his information in a hacker file and no one that watched and learned about this ever alerted the public, U.S. agencies and corporations.

quinnmichaels.com, twitter quinnmichaels #quinnmichaels, youtube.

quinn michaels was kidnapped as a baby and has reached out for years asking legal agencies for support. for years he has asked agents, people who watch his videos to share his twitter, youtube account posts and videos, private and corporate alike.

from what i understand, these attacks are happening because no one public, private, agencies will find out those behind his kidnapping.

not even cyber security found quinn’s post to alert nor warn, to protect the people, governments, private or public individuals?

why is your site and cambridge university just now releasing this information to the public when it was publicly known close to a year ago?

This reminds me of C-64 days. You could put in characters which would back-up the cursor when you listed code. We used to use that to put back-doors into BBS software that we distributed.

If your backup was too long, you could kind of see it while listing the code… so you’d keep it short. Define a small global variable for a backdoor password. If you do something small which simply gives access no one would ever see it. The same sort of thing could be done here if you know what to define.

Governments, beginning with the American one, have been exploiting this since it was purposely built into Unicode so they could – I surmised years ago – stop relying on reparsing machine code. Frankly, it’s stunning the security community didn’t notice this about Unicode a long, long time ago. I got tired of being relentlessly attacked (and tired of corporate complicity with making machines and networks indefensible) and stopped doing security research ten years ago, but this was old at that time.

Unicode is not the only game in town working along roughly similar lines. We have a de facto security-sabotage language generally written to be readable directly from the hex representations of the major machine codes, of which the Unicode representation is apparently a subset (I assume the corruption of Unicode continued to be built out over time, as the older systems were expanded, but there is too much evil to wrap into Unicode). If researchers apply the same conceptual framework – bizarrely little creative thought required – they’ll find still-deeper compromises across computing.

The field needs a complete (ground-up, infrastructural-type complete) rebuild – but finding honest programmers and QA people will be very hard indeed.

don’t be silly now… unicode is an attempt to standardize things and it’s complicated to do that… and to get everyone to agree on things. There’s going to be exploits, but they’re not created on purpose. There’s a lot of members in the unicode consortium and really the guv’ment has better things to do than to try to slip exploits in to standards like that. As for “de facto security-sabotage language”. ummm… what?! Are you talking about reading strings from a hex-editor?

This article uses the term “Bidi Override” throughout, but this should be replaced (almost) throughout with a term such as “Bidi controls” or “Bidi control characters”. The official term for these characters is “Directional Formatting Characters” (see https://www.unicode.org/reports/tr9/#Directional_Formatting_Characters).

The term “Bidi Override” is usually reserved for two characters only:

LRO, U+202D, Left-to-Right Override, and

RLO, U+202E, Right-to-Left Override (see Table 1 of the Boucher/Anderson paper), the associated phenomenon of a “hard” override (i.e. affecting all characters including e.g. the Latin alphabet), and mechanisms in other technology that achieve the same (e.g. the HTML bdo element (https://html.spec.whatwg.org/#the-bdo-element) or the ‘bidi-override’ value of the unicode-bidi property in CSS (https://www.w3.org/TR/CSS2/visuren.html#propdef-unicode-bidi)).

The Boucher/Anderson paper itself also uses this term wrongly in quite a few instances.

Bidi overrides (in their correct definition) are just one of the possibilities for this exploit. The use of the term “Bidi override” led me at first to suspect that this vulnerability only affects two Unicode characters, but that’s unfortunately not true.
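The distinction can be checked against the Unicode data that ships with Python. The sketch below lists the nine embedding, override, and isolate controls in the ranges U+202A to U+202E and U+2066 to U+2069, and picks out the two true overrides. The implicit marks such as U+200E are left out, and the variable and function names are illustrative.

```python
import unicodedata

# Code points U+202A..U+202E and U+2066..U+2069.
CONTROLS = [chr(cp) for cp in (*range(0x202A, 0x202F), *range(0x2066, 0x206A))]

def describe(ch: str):
    """Return (code point, Bidi class, official name) for one character."""
    return (f"U+{ord(ch):04X}", unicodedata.bidirectional(ch), unicodedata.name(ch))

# Only the characters whose Bidi class is LRO or RLO are overrides.
overrides = [ch for ch in CONTROLS if unicodedata.bidirectional(ch) in ("LRO", "RLO")]

for ch in CONTROLS:
    print(*describe(ch))
print(len(CONTROLS), len(overrides))  # 9 2
```

A filter that looks only for the two overrides would therefore miss the embeddings and isolates, which is the practical reason the terminology matters.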