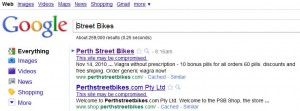

Google has added a new security feature to its search engine that promises to increase the number of Web page results that are flagged as potentially having been compromised by hackers.

The move is an expansion of a program Google has had in place for years, which appends a “This site may harm your computer” link in search results for sites that Google has determined are hosting malicious software. The new notation – a warning that reads “This site may be compromised” – is designed to include pages that may not be malicious but which indicate that the site might not be completely under the control of the legitimate site owner — such as when spammers inject invisible links or redirects to pharmacy Web sites.

Google also will be singling out sites that have had pages quietly added by phishers. While spam usually is routed through hacked personal computers, phishing Web pages most often are added to hacked, legitimate sites: The Anti-Phishing Working Group, an industry consortium, estimates that between 75 and 80 percent of phishing sites are legitimate sites that have been hacked and seeded with phishing kits designed to mimic established e-commerce and banking sites.

It will be interesting to see if Google can speed up the process of re-vetting sites that were flagged as compromised, once they have been cleaned up by the site owners. In years past, many people who have had their sites flagged by Google for malware infections have complained that the search results warnings persist for weeks after sites have been scrubbed.

Denis Sinegubko, founder and developer at Unmask Parasites, said Google has a lot of room for improvement on this front.

“They know about it, and probably work internally on the improvements but they don’t disclose such info,” Sinegubko said. “This process is tricky. In some cases it may be very fast. But in others it may take unreasonably long. It uses the same form for reconsideration requests, but [Google says] it should be faster…less than two weeks for normal reconsideration requests.”

But Maxim Weinstein, executive director of StopBadware, an independent non-profit anti-malware organization, said that if Google delays de-listing a flagged site, it is usually because the site’s owner hasn’t fully eliminated the problem that caused the alert, or because the site owner has skipped a step in Google’s reconsideration process.

“If someone doesn’t know to request a review, it can be a while before Google’s system will on its own rescan the site and remove the warning,” Weinstein said.

Google says it will be rolling out the new system slowly. As a result, not all of the sites that deserve to be flagged as compromised are listed that way yet.

“For example, 90 percent of search results for this search should be labeled as ‘compromised,’ but I don’t see any warnings,” Sinegubko said.

Web site administrators who find their pages flagged with “this site may harm your computer” warnings can get relatively speedy assistance at Badwarebusters.org, which maintains a fairly active and responsive help forum. Google also has a Webmaster Help Forum that includes a malware and hacked sites section, which already contains a few interesting threads about this new warning system. In one thread, John Mueller, a Webmaster trends analyst with Google Zurich, sheds a bit more light on the alert and cleanup process.

“As mentioned by the others, this is triggered when we determine that your site has likely been compromised by an unauthorized third party. Once it’s shown that this is possible, it’s hard to predict what else may have been modified. For instance, it might be that in addition to hidden links, someone has changed the phone number or is redirecting orders to the wrong website — everything is possible once third parties are able to modify a website.”

“Once you’ve reverted the compromise and – hopefully – taken steps to prevent this from happening again, you can submit a normal reconsideration request through Webmaster Tools. These requests are processed fairly quickly (usually within a day, though it’s not possible to give an exact timeframe).”

Great article, once again, Brian!

I imagine it is probably harder to get Web of Trust or McAfee’s SiteAdvisor to clean up their lists. I hear it takes even longer to get taken off SiteAdvisor’s bad listings!

As usual, I really appreciate your work! 🙂

@JCitizen I have been told that notifying this email address does the job http://www.securecomputing.com/index.cfm?skey=297#w6

Thank you indaknows; this will be valuable for web-masters everywhere! Thanks!

Thank you Brian.

I know that I can ALWAYS rely on your blog for up-to-date and relevant material.

Seasons Greetings to You, Yours & Readers

WebofTrust can be fast if you go through the forums, since there is no authority other than other users creating the ratings. But some of the reviewers are very tough as far as requiring a privacy policy and expecting SSL for any site collecting any personal information — even a contact form that allows you to provide a name and email address, even though any email response that might be sent to that address would reveal the same information anyway.

SiteAdvisor is extremely slow to re-review a site. The volunteer reviewers can post entries, but they have no effect on the color code that shows up when you visit a site. That slowness cuts both ways, as someone can post innocuous content on a new website until McAfee provides a green rating, then switch to some fraudulent activity and enjoy months of McAfee’s neglect.

The only purpose of search results for “buy a windows 7 key” could be to facilitate using pirated software.

Since ordinary people have been sued for hundreds of thousands of dollars for illegally downloading copyrighted music on a p-to-p protocol, why is it okay for Google to allow search results for a similar purpose — cheating copyright protections?

Why is Microsoft so unconcerned about Google’s complicity in this criminal activity?

Are all these sites phishing sites or honey sites to catch those who use illegal Windows software?

More like a PC response to an “Open Net.” But don’t we censor kiddie porn sites? Google is not helpful.

Why is it Google’s job to develop natural language processing and use it to police individuals’ searches?

OK. I’ll ask the “silly” question:

– If they know the search find is compromised/harmful/malicious, why show it at all?

@PC.Tech:

Because there have been suits over this, and the search engine companies didn’t always win. You have to prove it first. If Google, Bing, and Yahoo! tried to block every site they “thought” was illegitimate, they would go out of business very soon, just from the expense of chasing the whack-a-mole!

Links which are directly infected are removed as soon as they discover them; also because of liability risk; or at least that is what I’ve read in the forums for the last two years.

@PC Tech

I think you make a completely valid point. Google always argue that their results are ‘opinion’ and therefore protected speech in the First Amendment sense. Court cases in the U.S. (SearchKing and Kinderstart) have ruled that way.

If Google don’t have a legal obligation to show any sites, they’re definitely under no obligation to show compromised sites.

But if they just left them out nobody would get to appreciate how very clever they are!

Because these systems often get it wrong. One of my sites was flagged by MS’s smartscreen and it was just an error on their end of things.

I use Google searches a lot, but I don’t remember seeing the “This site may harm your computer” notification next to search result links in the last 18 months, maybe even longer. A very likely explanation was reported by Brian a little while ago: many exploit kits are “trained” to detect when a site is being scanned by Google bots (and bots from other similar services) and to withhold their malware load in that case, so that the site is not flagged by Google and the legitimate site owners are not alarmed. This strategy seems effective, and I don’t see why the mavericks would not attempt to use it against the “This site may be compromised” flag as well. Let’s hope Google has some improvements to prevent those dirty tricks from working.

Hey, George,

I get the warnings all the time – maybe you’re just not visiting the *good* websites.

It seems that this feature is not available in every country (I am from Germany).

Unfortunately, DNSBL and Spamhaus still exist.

These “index solutions” were once thought to “work their way out of existence”, not become necessary & de facto.

DNSBL was from 1997. In a few days it will be 2012.

That will be 15 years of “filtering” bad things in the internet space, yet the problems do not get simpler, nor do they go away. Actually, it’s quite the contrary.

Are there any APIs offered by Google & kin that I could hook up to my IT department’s existing tool set that would let me know when Google or anyone else stumbled across something fishy?

I already scan my sites, but any extra eyes would be appreciated. They crawl us relentlessly, it seems a natural symbiotic relationship.

One bad page, one bad week, and we could be listed on the index?

It seems we’ve slid into, and even automated, the Index Librorum Prohibitorum, which is decided by whom?

While today we are dealing with malware, in our tomorrows we are going to be dealing with falsified information meant to mislead consumers, politicians, etc.

I’m speaking of “altered” stock quotes, advertised prices, and “facts” that all look like they are coming from legit sites but are “malware” used to shape the opinions and actions of uninformed consumers.

It’s already bad; it’s going to get worse.

What’s going to help the GPS + map app in my phone know that it’s being “steered” to guide me to shops that paid to whack the competition off the results through SEO and other manipulations of internet traffic and indexes?

Re: delays by Google in removing sites from “Contaminated” lists

1) The websites have an obligation, if they’re inviting users to view the websites, to at least attempt to keep them clean. By the time Google classifies them as contaminated, that’s a “Fail.”

2) Given that the websites have a history of contamination, it behooves Google to monitor them for some period of time after scrubbing to make sure the websites STAY scrubbed.

3) I, for one, appreciate Google’s caution.

I appreciated Krebs’s commentary on Google’s poor performance on reviewing sites that have been cleaned.

Despite best intentions, websites do get contaminated. Webmasters and security folk clean it up, but then suffer longer than necessary when their site remains tagged as “bad”. Google has much room for improvement. For starters, it needs a system that reviews a site within minutes of an authenticated request (if increasing backoffs for re-reviewing are required to manage load, that’s reasonable) and, upon failure, lists which URL and content are bad. If the site owner believes it’s a false positive, there should be a process to have it manually reviewed (if that would require a modest fee, that’s fine) within an hour. All of this should be conducted within an incident handling system that tracks the issues from beginning to end.

From everything I have seen in many technical discussions, some of the problems come from web-masters who are not following standards and practices.

Now I realize the crackers have very advanced ways, but there are always two sides to the story here! I lean toward the web-master’s conundrum, and always report problems to them. They seem very appreciative, and react to this situation very positively. I’m on their side, believe me; but if I were in the same situation, I’d be very embarrassed at the realization my servers were pwned in any way! I guess that is just my junk yard dog mentality! ]:)

Interesting. I have been using McAfee’s SiteAdvisor plugin for Firefox to do the same thing for a while now. Very glad to see the search index taking some initiative, though!