Details about the recent cyber attacks against security firm RSA suggest the assailants may have been taunting the industry giant and the United States while they were stealing secrets from a company whose technology is used to secure many banks and government agencies.

Earlier this month, RSA disclosed that “an extremely sophisticated cyber attack” targeting its business unit “resulted in certain information being extracted from RSA’s systems that relates to RSA’s SecurID two-factor authentication products.” The company was careful to caution that while data gleaned did not enable a successful direct attack on any of its SecurID customers, the information “could potentially be used to reduce the effectiveness of a current two-factor authentication implementation as part of a broader attack.”

That disclosure seems to have only fanned the flames of speculation swirling around this story, and a number of bloggers and pundits have sketched out scenarios of what might have happened. Yet, until now, very little data about the attack itself has been made public.

Earlier today, I had a chance to review an unclassified document from the U.S. Computer Emergency Readiness Team (US-CERT), which includes a tiny bit of attack data: A list of domains that were used in the intrusion at RSA.

Some of the domain names on that list suggest that the attackers had (or wanted to appear to have) contempt for the United States. Among the domains used in the attack (extra spacing is intentional in the links below, which should be considered hostile):

www usgoodluck .com

obama .servehttp .com

prc .dynamiclink .ddns .us

Note that the last domain listed includes the abbreviation “PRC,” which could be a clever feint, or it could be Chinese attackers rubbing our noses in it, as if to say, “Yes, it was the People’s Republic of China that attacked you: What are you going to do about it?”
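The extra spacing in the list above is a common way to keep hostile domains from turning into clickable links. As a minimal sketch of how that could be automated (the function names `defang` and `refang` are my own for illustration, not part of any standard tool), something like this would do:

```python
def defang(domain: str) -> str:
    """Put a space before each dot so the text is not rendered as a link."""
    return domain.replace(".", " .")


def refang(text: str) -> str:
    """Strip all whitespace to recover the original domain for analysis."""
    return "".join(text.split())


spaced = defang("obama.servehttp.com")
restored = refang("prc .dynamiclink .ddns .us")
```

Anyone restoring such a domain should do so only in a tool meant for analysis, never in a browser address bar.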

Most of the domains trace back to so-called dynamic DNS providers, usually free services that allow users to have Web sites hosted on servers that frequently change their Internet addresses. This type of service is useful for people who want to host a Web site on a home-based Internet address that may change from time to time, because dynamic DNS services can be used to easily map the domain name to the user’s new Internet address whenever it happens to change.

Unfortunately, these dynamic DNS providers are extremely popular in the attacker community, because they allow bad guys to keep their malware and scam sites up even when researchers manage to track the attacking IP address and convince the ISP responsible for that address to disconnect the malefactor. In such cases, dynamic DNS allows the owner of the attacking domain to simply re-route the attack site to another Internet address that he controls.
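To make that re-routing concrete, here is a toy model of a dynamic DNS table. It is only a sketch under my own assumptions: the class name, the `attack-site.example` hostname and the addresses (taken from ranges reserved for documentation) are all hypothetical, and a real provider's service is far more involved.

```python
class DynamicDnsTable:
    """Toy in-memory stand-in for a dynamic DNS provider's records."""

    def __init__(self):
        self._records = {}

    def update(self, hostname, ip_address):
        """Point a hostname at a new address, as an account holder would."""
        self._records[hostname.lower()] = ip_address

    def resolve(self, hostname):
        """Return the current address, or None if the name is unknown."""
        return self._records.get(hostname.lower())

    def terminate(self, hostname):
        """Remove the record, as a provider does when it kills an account."""
        self._records.pop(hostname.lower(), None)


table = DynamicDnsTable()
table.update("attack-site.example", "192.0.2.10")
first = table.resolve("attack-site.example")

# The ISP disconnects 192.0.2.10, so the owner re-points the same name.
table.update("attack-site.example", "198.51.100.7")
second = table.resolve("attack-site.example")

# Only the provider terminating the record takes the name itself offline.
table.terminate("attack-site.example")
third = table.resolve("attack-site.example")
```

The point of the sketch is that shutting down one address changes nothing for anyone who reaches the site by name; the name keeps working until the provider removes the record.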

Sam Norris, founder of ChangeIP.com, the dynamic DNS provider responsible for many of the root domains on the US-CERT’s list, said he terminated all of the accounts on the list as soon as US-CERT published the list on March 18 (although that version of the list does not mention the RSA connection). Norris soon was contacted via email by the account holder who used the prc. dynamiclink. ddns. us domain. Norris said the account holder wanted to know the reason his domain was killed.

“This guy has been emailing me, asking me for the account back, saying things like ‘Hey, I had important stuff on that domain, and I need to get it back,'” Norris said. “The bad guys are definitely interested in getting it back, which means we probably cut off their communications or made it so that they couldn’t clean up their trail afterward.”

Much of the public speculation about the attack on RSA so far has invoked the term “advanced persistent threat” or APT, which is security industry shorthand for “We’re pretty sure it came from China.” At least as far as the domains that were routed through ChangeIP.com are concerned, that assessment appears to hold up (with the usual caveat that attackers can route their traffic through machines anywhere in the world in a bid to disguise their true location).

“Ninety nine percent of the time, when these guys logged in to one of their accounts to change the IP address for a domain, they were coming from a Chinese address,” Norris said.

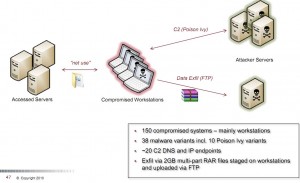

A closer look at some of the domains also indicates the use of some familiar attack tools that have been associated with previous targeted attacks attributed to Chinese, state-sponsored hackers. For example, one of the few domains on the list not attached to a dynamic DNS service — mincesur .com — has been a well-known download source for “Poison Ivy,” a lightweight attack tool that attackers have used quite a bit in previous pinprick attacks (PDF) to remotely administer hacked systems and to hoover up information from those machines.

Interesting as these tidbits of data may be, they don’t answer the questions that seem to be on everyone’s minds about the RSA attack: How much information did the attackers get, and can organizations still trust SecurID tokens as an authentication mechanism? A spokesman for RSA said the company wasn’t yet ready to publicly disclose more details about the attack. Several sources say RSA recently briefed a small group of industry leaders and customers, providing further information about the attack, but those folks had to sign a non-disclosure agreement barring them from discussing the details.

Since RSA’s initial disclosure, I’ve received many emails from readers asking for my take on the attack. I’ve avoided writing about it because I didn’t have much to add to the initial reporting, which remains very speculative in the absence of more details from RSA. And as I read back over what I’ve written above, I can see that this post seems speculative as well. As for RSA’s technology, I have noted in one story after another that one-time tokens such as those generated by RSA’s SecurID key fobs are better than mere passwords for authentication, but not by much. Today’s attack tools allow the bad guys to control not only the victim’s PC, but also what the victims see in their Web browser. I have written about a number of successful attacks in which the crooks got the information they needed to defeat tokens and empty bank accounts by injecting content into the victim’s browser. The latest attack on RSA serves to increase suspicion, even if unfounded, that its products may not provide sufficient protection to the user.

Operation Starlight has uncovered the specific malware used in the attack on RSA and has profiled the CNE operators as “entrenched,” meaning they will have to fight tooth and nail to get their network back. The attackers’ malware has been used in several other serious intrusions of US organizations. Operation Starlight has also profiled how long the group has maintained an undetected presence in RSA, which goes back to the middle of last year. If you truly want to know the real deal and are tired of the criminal comments, you need to understand the assault on Western nations by the Chinese government. Get up and do something about it. Learn at http://WWW.conanthedestroyer.net

Where can I read more about Operation Starlight? I saw mention of it on your blog but no links or more information.

This may be a little naive of me (and maybe more than a little), but could all of this mean that it’s time to give up on the fantasy that we can just pay somebody some money, install their software and hardware, follow their instructions and we will be safe on the internet? That maybe instead, to maximize our safety, we have to take some personal responsibility about how we conduct ourselves on the internet and perform some (icky) regular PC monitoring and maintenance?

Personally, I’ve been running unpatched installations of XP w/SP1 or SP2, since about 2003 or so (when I started paying attention to ways hackers could compromise my PCs), and I can count on one hand the number of times malware has run on any of them. And in every case that I can recall, I spotted and completely removed it within 1 day of its appearance. I occasionally read similar experiences among the commenters on Darknet, so I don’t think I have any freakish or superhuman capabilities. I’m just careful what I click on, open new browser pages when I access financial and sensitive websites, close them when I’m done, use a good firewall (ZoneAlarm) that requires programs to ask for internet access before they get it, keep an eye on what processes are currently running, use good cookie control (including the notorious Flash cookies) – nothing difficult or requiring any great expertise. More along the lines of what it used to be like to own and operate a carbureted car – everybody did it as a normal thing in life, not the hair-raising, unbearably difficult thing that people today seem to think it was.

Again, perhaps I’m naive, but I’ve thought for some time that most security software and services today are simply unnecessary and inherently ineffective. Does that mean we just keel over and let the bad guys do whatever they want to? I don’t think so. The “real world” is full of bad guys too, and the best protection for that is to be careful and use common sense when it comes to your money, your person and your sensitive information. (Yeah, sure. I’ve only been reading this blog for a short time and have seen some of the tricks they can pull, like the ATM scanners. But forewarned is forearmed, yes?)

Deborah: Your description of your computer use seems more reckless than “naive.”

You never patch your XP software? The simplest protection of all and you don’t use it? Yes, you “have to take some personal responsibility … on the internet and perform some (icky) regular PC monitoring and maintenance.” Start by reading some of Brian’s archived “Time to Patch” articles, if you don’t know why it’s important to update MS products.

Even the safest-looking sites can be compromised. That’s why restricting your list of sites isn’t enough. Obviously, if you’ve had even five or less instances of malware that you’ve discovered as reaching your computers and you had to remove them, then you’re not protected to the extent that you could be, even if software security programs are less than perfect. And you can’t and don’t know if you have undiscovered malware, such as bots, if you aren’t using a good program to run scans on your computers regularly. AV programs aren’t perfect, but their scans do find things that you can’t see!

I could go on and on, but …..

It will be interesting to see if the risk to RSA software tokens on Windows is greater than the hardware tokens. The username, password, and PIN can be keylogged for sure, but can the soft token serial number be read directly from a Windows installation?

I don’t suppose IPv6 will make the need for dynamic addressing go away anytime soon, if ever.

@ JBV

I understand your logic, really I do. Having read extensively about threats on the internet, watching my PC’s behavior and thinking about the whole mess.

The problem I have with MS patches is that they goober up a whole lot more than they fix – in my (perhaps not so humble )opinion. I was a software tester at MS for 5 years, in Windows NT and XP, and I know first hand what goes into the design and testing of those patches, and more importantly, what doesn’t go into them. The thoroughness of the testing of the original operating systems is enough to make a grown person cry buckets of tears – I once went through the bug database after (an unnamed) software release was stamped “DONE” and kicked out the door. Something like a third of those bugs were resolved “Won’t Fix”, and reading through them to see just what it was that they wouldn’t fix would make your hair stand on end. But for all that, the operating system is designed and tested much, much more thoroughly than any of those patches are. I’d rather take my chances with the bad guys and watch closely for any sign of them than goober up my systems with MS patches.

As for malware, my (very) simple response is: “How can they do any bad thing to you if they can’t establish an internet connection to their server, and can’t send any data back to it?” Sure, some viruses can attack your hardware, but nobody does that any more. They’re after your money and your secrets nowadays. And I guess if some of my hardware gets taken out once every 10 years, that’s a price I would pay for having clean running systems the rest of the time.

And I didn’t detail everything I do to monitor my network connection and observe system behavior to spot anomalies. None of it is hard, you just have to do it. Having super clean systems makes it very easy to do. And I really do question whether having less than 5 malware attacks in 8 years, none of which successfully caused any damage to me or my systems, or successfully accessed the internet to damage others, is not as protected as I need to be.

I know I hold a contrarian view, and it’s one that gives a lot of people the screaming willies these days, but do come back. I’m enjoying this conversation. Maybe you’ll convince me of the error of my ways. (But you won’t be the first who’s tried, and none before have succeeded… 😉

Once you have a rootkit installed on your system, you no longer know if you have a functioning firewall or not. The rootkit loads earlier in the boot process than the firewall, and can change its settings.

The fact that you aren’t getting popup ads or browser redirects doesn’t mean you have a clean system. Successful trojans don’t call attention to themselves or slow your system down. The fact that you were compromised 5 times means you’ll never again know if you have a clean system. I mean, really, that’s like saying you don’t have to use condoms because you’ve only caught HIV 5 times.

If you distrust Windows that much, why run it at all?

@ AlphaCentauri

“Once you have a rootkit installed on your system, you no longer know if you have a functioning firewall or not. The rootkit loads earlier in the boot process than the firewall, and can change its settings.”

You might be talking about the Windows Firewall, and that’s one reason why I don’t use it – you really don’t ever know if it’s working, or even exactly what it is or isn’t doing. Sure, it’s idiot proof. You’re just supposed to install it and assume that it’s working.

I like Zone Alarm (v. 6.5 – don’t know what more recent versions do), because you interact with it several times a day for just a second or so each time. You can set it to keep these notifications to a comfortable minimum, but it will always ask your permission for a new process that wants internet access. And that’s how I’ve caught the 3, maybe 4 malware attacks I’ve had in the last 8 years (zero in the last 4 or 5 years) – they try to access the internet and Zone Alarm stops them and asks me if I want them to. And I would know if Zone Alarm was compromised from a number of clues, the most obvious one being if it didn’t put up the normal notifications I’ve set up for processes, and a root kit designer would be very unlikely to probe to find out what all of them are at any given time. I suppose they could knock out Zone Alarm’s feature of monitoring for new processes, and I might not catch that for awhile, but I install new software fairly frequently, and if it didn’t put up notifications for those new processes I’d know something was wrong. So far that hasn’t happened, but in principle it could and I’d have to deal with it. And if that were a serious threat (which I don’t think it is), there are other steps I could take to watch for it.

“The fact that you aren’t getting popup ads or browser redirects doesn’t mean you have a clean system. Successful trojans don’t call attention to themselves or slow your system down. The fact that you were compromised 5 times means you’ll never again know if you have a clean system. I mean, really, that’s like saying you don’t have to use condoms because you’ve only caught HIV 5 times.”

Well, the most devious of successful Trojans still has to run in a process, even if it’s in RAM, but there are ways to easily keep an eye on that. And one of them is to not have an operating system with 60 bazillion processes running, where you can’t tell at a glance what’s supposed to be there and what isn’t.

And – there’s a gigantic difference to being exposed to HIV 5 times and actually getting HIV 5 times. There’s such things as condoms, you know. (And whether having one malware running on your system for a short period of time is really the equivalent of HIV, that I don’t think so.)

“If you distrust Windows that much, why run it at all?”

I don’t distrust the original Windows XP, and I run it with glee. It’s the patches I distrust.

For the record, I do install AV scanners every now and then, just to see what the state of the art is and for sanity checks. They never find anything and I usually uninstall them about the 2nd or 3rd time I’m in the middle of a CPU intensive task and they start running their scans without so much as a Mother-May-I. I don’t need that.

If you were an insider, then you’d have more reason to patch than most. Microsoft’s security strategy, as told by Steve Lipner, is to ship above all other things, then handle remaining issues later. They also have a strong testing process for patches. They didn’t really start employing their Secure Development Lifecycle until Vista development, meaning you aren’t benefiting from any “tear-inducing” or “rigorous” development process. The number of security flaws in recent Windows versions vs previous versions easily refutes your claim that you’re safer on an unpatched, older Windows. Hackers have proven to be more dangerous than patches every time and many huge botnets formed so easily due to large numbers of (sighs) unpatched, pre-SP3 WinXP systems. If people like you would upgrade & patch, we’d all be safer.

As for watching what you surf, this does improve your security profile. However, this assumes two things: 1) you can tell a good site from a bad one; 2) attackers can’t use good sites. The first is dubious because modern black hats are very sophisticated at deploying clone sites, fake ad sites, etc. Without comparing certificates, it can be nearly impossible to tell that a site is a duplicate until you start getting dubious charges or emails.

The second issue is becoming more of a problem now. Crooks can use vulnerabilities in legitimate sites to subvert your system. Cross-site scripting attacks are among the more prevalent. A recent trend is using innocuous ads on “good” sites to do evil things, from redirects to their sites to direct attacks on your machine. Sandboxie is a nice product, but can’t prevent all types of attacks, especially those that work within the browser like XSS.

So, you’re better off patching. Best option is to switch to a mainstream form of Linux, use Firefox w/ addons I’ve mentioned, and update whenever requested. I virtually *never* have problems due to updates on Windows or Linux in a non-commercial setting. If you must stay on Windows for web surfing, then you should upgrade to Windows 7, turn on security features, turn off unnecessary services, use Firefox w/ the addons, and let your system Automatically Update itself for critical updates. I can’t remember any friends or family complaining in past six months that an update fried their system. I can remember many people asking how to get a virus off their un-updated system.

So, for all of us, please update your system. I’m not a big fan of the spam and DDOS attacks that systems like yours are likely to provide.

@JBV

Sorry, I did go back and read some of Brian’s “Time to Patch” articles. We were (I was) originally talking about MS operating system patches, but applications can be a problem too. My simpleminded solution is to just not use most of them. There are a lot of open source alternatives available that the hackers don’t target, and many of them have as nice or nicer features than the big box (big name) applications. And for internet enabled applications, like browsers, where you just about have to install Flash, Java, and allow Javascript – run ’em in a 3rd party sandbox. I like Sandboxie, and have had zero problems with it.

I think you’re on the wrong forum …

DeborahS: The problem is you’re an individual who knows enough a) to do what you do, and b) doesn’t mind doing it. You simply aren’t the average user.

Also, your methodology does not scale to corporations.

I usually tell my clients that the number one thing they can do to avoid malware is stay patched, run a firewall, and run Firefox instead of IE. That alone reduces the attack surface massively. If all they do is that, most of them would be unaffected by malware. Running security software beyond that is mostly for those people who insist on clicking on everything they see on the Web.

I’ve read plenty of stories like yours over the years. Someone always claims “I don’t run any security software and I’ve never been hit.”

But then you have to explain why so many people who DO run security software get hit.

The fact that those who run security software do get hit doesn’t mean the software is useless. It just means the situation would be much worse if they didn’t run it.

In other words, cases like yours are anecdotal (read: worthless) evidence that security software is not needed. It’s that simple.

We in the security business always tout that end users need to learn more about computer security and be smarter about running their machines like you are.

But that’s like saying “everyone should come together and love one another.”

It ain’t gonna happen. End of story.

On Bruce Schneier’s blog, I’ve repeatedly said what I say here: “There is no security.” If someone wants what you have bad enough in economic or emotional terms, they’re going to get it. “Security” of any kind just keeps out the “riff-raff”.

The only “security” there is in life is: 1) Live so you don’t have enemies (good luck with that!), and 2) eliminate your enemies before they affect you.

In IT terms, either security is engineered into the infrastructure (OS, networks, etc.) – which in today’s software industry is next to impossible – or the end user has to deal with it himself. In either case, once again, what humans make, humans can bypass.

The worst thing anyone can do is believe they have “security”. It’s always proof they don’t.

@ Richard

Yes, we’ve had this discussion before on Schneier’s blog. I keep thinking you mean to say there’s no “perfect” security that offers protection against “all” threats. To say there is “no security” is somewhat foolish. The definition of security is ensuring certain attributes of one’s assets, such as confidentiality or integrity, are maintained in the face of a set of known or unknown threats and vulnerabilities. Each group chooses which threats and vulnerabilities it will counter.

From this view, which is the common view in IT Security, security certainly can be had. For instance, in web browsing I do a risk analysis that shows numerous classes of attack: plugin or addon-related; widespread or sophisticated; application attacks; XSS; MITM; information leaks. This is a decent list to start with. From that point, one develops countermeasures to these classes of attack wherever possible.

To start with, I’d use a hardened Linux base with MAC and NX support. For those who want more than AppArmor, there is a policy on the web to restrict Firefox via SELinux. Next, I use Firefox for obscurity, then try to defeat web-based attacks with plugins: Adblock Plus; NoScript; Flashblock; HTTPS Everywhere. Added protection would put this on a dedicated machine (e.g. netbook) that loaded via a LiveUSB stick, contents removed from RAM upon power-down. Another scheme is a SELinux or Xen enforced set of VM’s, with trusted and untrusted sites never used in the same VM. And, of course, the system operates behind a NAT-enabled router/firewall.

The resulting scheme lets me surf the web with confidence that I probably won’t be infected any time soon. I’d say I have security against the threats I focus on. It would take a very targeted attack, along with my IP address, to breach my security. Even then, the breach would have minimal privileges and only last one session. They would have to repeatedly breach my security without me noticing and defeat the MAC scheme to become a persistent threat. How do I have no security? I’ve proven my security in practice by surfing extremely dangerous sites that infected other people without any problems.

So, a person can indeed have security against specific threats or vulnerabilities. A person can have security against entire classes of vulnerabilities with proper design choices and implementation. There is no perfect security, sure, but there is security for those willing to understand and pay for it. The more you want, the more it costs in time, effort and money. This is one reason “perfect” security is impossible. It’s good that I’m not looking for it: I can settle for immunity to 99% of malware, exploits and escalation attacks.

@ Richard Steven Hack

I wondered if someone would come back with that argument, that there are a lot of people who cruise the internet like it’s an all you can eat buffet, without a clue of what’s happening on their PC or the internet at large. And even though you can say that those people deserve whatever they get (and in my opinion they do), the fact remains that their computers are the ones enabling the botnets.

So yes, I agree that it’s good public policy to preach keeping updated on your patches and your security software, if for no other reason than to reach those people and help to minimize the harm that they unknowingly inflict on others.

But in a computer literate and security conscious community such as this one, do we really have to stick to the line that absolutely anybody who doesn’t do this is going to lose everything they own tomorrow?

Perhaps there is nothing to be done about the many individuals out on the internet (and off of it) who are not security conscious, at least nothing that’s guaranteed to be safe all the time, in every situation. And that was sort of my point originally.

I guess my take on Brian’s “one-time tokens such as those generated by RSA’s SecurID key fobs are better than mere passwords for authentication, but not by much” line was “of course they aren’t”. And by that I meant that it’s really not possible to be completely secure, no matter what you do. Somebody out there will figure out how to break it.

So I guess we’re in agreement on these points.

Don’t mean to be negative but I left a brand new PC connected to the internet alone for 2 hours while it was updating operating system patches and it got malware.

Running an unpatched system that is connected to the internet is not smart, no matter how many precautions you take.

I’ve got a science background, be warned.

“Don’t mean to be negative but I left a brand new PC connected to the internet alone for 2 hours while it was updating operating system patches and it got malware.”

Did you run the malware scanner /before/ starting the update?(The malware might already have existed).

Did you go to /any/ internet sites before running the update, and did they have adverts on them?

As we’ve heard recently in the news, third party adverts on reputable sites can be problematic.

Which software did you use to check for malware and was that downloaded from the internet, ( just checking you didn’t accidentally just go to an internet site between, see point above ).

What was the extent of the malware? I get told about all the cookies on my system when doing a scan, not exactly malicious.

The malware needs to have come from somewhere, which requires user interaction at some level, which just casts doubt over your entire counter argument.

I have to agree with you Dominic, that it is probably malware introduced to the computer by the user when they started the patching process. Or they could have gone to the wrong site and received a “patch.”

However, there are other possibilities that should be considered.

A) A fresh install on a computer at a static IP, attackers might have flagged that IP as being a rewarding place to attack. As the computer was unpatched and presumably unprotected by a proper firewall at that point, it could have been attacked.

B) An infected computer sharing the same network as the unprotected computer, leading to infection.

Most likely, it was still user error. If default settings weren’t tweaked to increase security… Namely that the user has to approve any other changes that happen. But if the user was attempting to speed up the patching process by trying to let it self-automate… Otherwise it could have been exploits used on an unpatched system and poor timing/luck for the user.

It doesn’t require user error. Windows XP with no updates can be compromised without the user doing anything other than connecting to the internet to download the updates.

http://www.sophos.com/pressoffice/news/articles/2003/08/va_blaster.html

http://www.sophos.com/pressoffice/news/articles/2004/05/va_sasserattack.html

I’ve had the same thing happen to me time and time again. I don’t care if people say it doesn’t happen; it does. Autopatcher is a godsend, I highly recommend it.

I’ve set up many, many new systems without ever getting them compromised. The key: a HARDWARE FIREWALL!!!! 🙂

Of course it goes without saying that none of the other systems on my network have malware (worm) to spread internally. The key is to keep the nasties on the Internet side and nothing beats a hardware firewall for that purpose! Beyond that, malware pretty much relies on user interaction to get invited in.

Brian,

RSA will probably keep silent as long as they can. It’s only in their own interest: if the seed database has been hacked, that would mean big losses for RSA.

None of the RSA tokens can be upgraded, so they would all have to be replaced. With more than 20 million tokens in the wild, that would lead to severe losses for RSA.

Up until now, they’ve only released enough information to satisfy the SEC. Unless they have other incentives, they will probably shut up, and hope this blows over.

Roland

Another thing: the tokens contain a random number, a time clock, and a serial number. All three are needed to calculate the 6-digit code in the display.

If RSA stored the random number (highly likely) and the serial number (likely), then a lot of current 2-factor authentication mechanisms are now 1.5-factor mechanisms instead.

I say 1.5, because in most implementations you also need a 4-8 digit PIN code after typing in the token code.

If you look at the warnings from RSA to their customers, it looks like they’re basically saying: “watch out for social engineers eliciting PIN codes and guessing passwords”. That would only be a danger if the seed database has fallen into the wrong hands.

Roland
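The reasoning in the comment above can be illustrated with a short sketch. To be clear about the assumptions: nothing in this thread describes RSA's actual SecurID algorithm, so the code below swaps in the publicly documented HMAC-based time code construction (RFC 6238 style) purely as a stand-in. The seed value, the serial number, the 60-second step and the `SEED_DATABASE` lookup are all hypothetical.

```python
import hashlib
import hmac
import struct


def token_code(seed: bytes, unix_time: int, step: int = 60, digits: int = 6) -> str:
    """Derive a short numeric code from a secret seed and the current time.

    Stand-in construction (RFC 6238 style), NOT the real SecurID algorithm.
    """
    counter = unix_time // step  # same value for every second in one time window
    mac = hmac.new(seed, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation, as in RFC 4226
    value = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


# Hypothetical seed database: token serial number -> secret random seed.
SEED_DATABASE = {"000123456789": b"hypothetical-random-seed"}


def code_for_serial(serial: str, unix_time: int) -> str:
    """Compute the code a given token would display at a given moment."""
    return token_code(SEED_DATABASE[serial], unix_time)


now = 1_300_000_000
server_code = code_for_serial("000123456789", now)
# Anyone holding a copy of the seed database computes the identical code.
attacker_code = token_code(b"hypothetical-random-seed", now)
```

Under this model the code on the display adds nothing once the seed is known, which leaves the PIN as the only secret an attacker still has to obtain.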

DeborahS: “But in a computer literate and security conscious community such as this one, do we really have to stick to the line that absolutely anybody who doesn’t do this is going to lose everything they own tomorrow?”

Well, as I indicated, I don’t take that line and I’m not sure who does. But the opposite line that security software is absolutely not needed as long as you’re paranoid about what processes you’re running is also not realistic.

For those people who don’t take precautions, there is a definite risk which is higher than zero and less than one which can be addressed to a sufficient degree by installing a good antivirus, a good antispyware and an adequate firewall. And since all of those are free for home users from most of the best suppliers, it’s a no-brainer to do so. And for the most part, when I was running Windows XP (I run Linux now and just grin about malware!) and for my clients who do so, this is sufficient to protect most systems that aren’t high-value targets.

The only hard part these days is constantly updating Adobe Flash, Adobe Reader and Java every week because of a new vulnerability.

But nothing will stop a properly phished email if people aren’t suspicious enough. It will always be possible to attack the mind behind the machine.

My suspicion is that eventually the wholesale malware distribution and botnet creation we see today will go away as hackers learn it’s more profitable to go after identity theft on the corporate level – the sort of thing Brian has been documenting for some time now. Rounding up a bunch of credentials for people with $300 credit limits really isn’t worth the effort compared to taking down a couple hundred grand from a corporation’s bank account in one transaction. There may always be the former, but if and when the average user gets wise(r), it may taper off somewhat.

Of course, then the game will shift to mobile phones as it already seems to be doing. There was a discussion on Bruce Schneier’s blog the other day about what happens when your phone holds your credit cards, your driver’s license, your medical records, etc. – and gets hacked or infected with malware. That’s a whole new ballgame. And no doubt the same mistakes on the software industry side and the user side will be made.

@ Richard,

First off, the comments on Bruce Schneier’s blog you refer to originated from a paper by Ross J. Anderson over at the Cambridge Computer Laboratory in the UK, posted on the http://www.lightbluetouchpaper.org site.

Second, with regard to attacks on hierarchical authN/Z systems such as the RSA tokens, or the more recent case of SSL certificates:

If you think about it, irrespective of the amount of patching etc. these organisations do, the information they hold on their systems is of such value to attackers that they will find zero-days etc. to exploit the systems and get at the information, if the architecture of the organisation’s computers and communications allows it in any way.

Thus they need to have an architecture such that the information cannot be taken off their systems by attackers, be they attacking across the network or physical entities on the organisation’s premises.

Such architectures, systems, policies and procedures are very expensive to implement and maintain, and for various reasons don’t fit in with the majority of business models…

Thus too many “up stream” security organisations have untrustworthy systems on which your “down stream” security rests.

This is because their business model is aimed at reducing cost to a minimum whilst also providing low overhead technical support. Whilst your security model is looking to protect significant assets that could be as you note in the 5 to 7 figure value.

That is, the risk in the models is very, very asymmetric.

It is a situation that is going to get considerably worse in the future and will remain an issue as long as we have hierarchical trust systems where part of the hierarchy is outside of y/our control (as is the case with security tokens and CAs).

Thus it is time we looked for nonhierarchical systems where the risk is either internal to y/our organisation entirely, or with an organisation that accepts a more balanced level of risk, and thus spends the money to either implement the appropriate architecture and/or suitable insurance cover (preferably both).

Agree with your point about risk distribution in the existing infrastructure and how architecture is a part of that.

Really, the only way to have any significant level of security in a networked system is…not to have a networked system.

OTOH, even in that case, with the apparent ease of physical penetration into a lot of these organizations with false identities or inside jobs, even that probably won’t completely ameliorate the problem.

So I would agree that organizations need to take more responsibility for their own security, both in terms of their internal architectures and the improvement of internal security in general.

Unfortunately, I suspect that at some irreducible level we will come up against the basic problems of business management being not terribly competent and thereby refusing to do or being unable to do what is necessary.

Which reduces us back to my “there is no security – suck it up.” Which doesn’t mean one abandons any attempt to have security, it simply means one has to expect and therefore plan for security breaches and their mitigation. Which is what Ross’ paper was about.

@ Clive Robertson

“Thus it is time we looked for nonhierarchical systems where the risk is either internal to y/our organisation entirely, or with an organisation that accepts a more balanced level of risk, and thus spends the money to either implement the appropriate architecture and/or suitable insurance cover (preferably both).”

I don’t know much of anything about corporate/organizational security, but these views of yours jibe well with my philosophy in personal computing. At least it’s proven to be true for going on 8 years that the most effective strategy is to avoid being a target. When massive numbers of people use the same software and the same defenses, it only makes sense that those are the targets that are going to be hardest hit. So simply not using the popular software in the same ways that everyone else does fends off a lot of mayhem.

The analogous thing in corporate security, as I’m just beginning to understand it, is for a huge number of organizations to use the same security system and strategy. I quite agree that the hierarchical security systems with incompetence and/or negligence at the top are going to be inherently insecure, for exactly the reasons you give. But when you add to that a massive number of organizations, some of which are gold mines, using any given hierarchical system, well, it sure looks to me like a security disaster begging to happen. This RSA debacle may merely be a case in point.

As with so many things, a baseline of personal/internal control and diversity of strategies sure looks like a good answer. On both a personal and an organizational level, we make it so easy for the bad guys by all rushing down the internet highway in packs – all going the same way, all doing the same things. All they have to do is find one critical, unguarded vulnerability path, and milk it for all it’s worth.

@ Brian,

Eugene “Spaf” Spafford was interviewed by Federal News Radio over the RSA breach,

http://www.federalnewsradio.com/?nid=365&sid=2317326

His basic take is that similar things have been, and are, going on all the time due to the insufficiency of the majority of our commercial systems.

I quite agree that it’s only a matter of time before the major malware writers and company owners abandon all the small fry to go after much richer piggy banks. Although we’ll still see a lot of little bad guys chasing after whoever and whatever they can find.

I also agree that the game shift to mobile phones is already underway. I’ve never had one and would love to play around with Android, but I’ve seen enough scary stories about how easy it is for your mobile phone to be remotely accessed that I’m working on learning as much about that as I can. I won’t put anything more valuable than my phone number on one until I’m sure that it’s secure – or at least that I can see what’s going on and prevent access to anything truly valuable.

Anyone know where I can access an RSS feed for US-CERT’s EWINs?

Or any of these guys:

– Situational Awareness Reports (SAR)

– Federal Information Notices (FIN)

– Critical Infrastructure Information Notices (CIIN)

– Early Warning Information Notice (EWIN)

– Malware Initial Findings Report (MIFR)

– Malware Analysis Report (MAR)

– GFIRST Alerts

Other than via the GFIRST Portal, since I am only a US citizen?

As one who’s toured the RSA facility in Boston, where they load the securid fobs and have their primary SOC (staffed 24×7), I’m still confused about where the data was lost from. The securid fobs are presumably made in China, but loaded with their key and sealed up in Boston. It’s assumed that the keys, serial numbers and xml files live somewhere on the RSA campus in Boston, where they have no small amount of security measures and detection and response capability. Somebody must have really done something stupid architecturally, or the attackers must have been exceedingly clever.

Phish e-mail to a user?

Or maybe an inside job and/or accomplice?

What is the benefit of anyone paying RSA mega $’s versus organizations texting or e-mailing an auto-generated password from their own internal servers, over a secure pipe, to their PDAs/smart/mobile phones for multi-factor authentication only when needed?

Poor choice by US-CERT to release the C2 servers to the general public.

@Bruiser: US-CERT did NOT release the list to the general public. Sh$@theads receiving those documents in trust, released them publicly.

Given that Brian only posted a screencap of the EWIN, one might surmise that one such sh@#head also supplied him with it.

Good call. I hope that’s the last bit of sensitive information US-CERT provides to the Financial and Banking Information Infrastructure Committee and any other groups who are too reckless with the info.

Interesting that you two thought that having these bad domains made public was a negative thing. I’d like to hear why you think the public doesn’t have a right to know about some seriously bad sites? Whatever happened to information sharing?

I hear your point and completely agree, to a point. I’m all about sharing, but only with the ‘good guys.’ Many in the industry rely on information such as this to track these adversaries. Granted it’s a pretty poor indicator, but it is something rather than nothing. Now that this information is in the public domain, you can be sure as heck that the attackers will stop using those hostnames for C2. In a nutshell, releasing this information is bad OPSEC.

I’ll side with Brian on this one, with the caveat that information should be vetted for release to the public.

Some information would serve a good purpose by being released to the public. Perhaps if these hostnames for seriously bad domains were completely and frequently promulgated to a public who would take notice of them and avoid any dealings with them, this strategy would become useless to the bad guys. Sure, they would probably come up with something else, but their sort of dirty business only truly flourishes in darkness. Shine a spotlight on them and it will only be that much harder for them to operate.

But there is no doubt that some information would be better kept private. Decisions of what should be public and what should be private would have to be made on a case by case basis.

I’d also like to know where to get the notifications for US-CERT EWIN’s. I’ve been subscribed to US-CERT’s alerts for a while, but haven’t found anything on EWIN.

One can only hope that comparable counterattacks happen from time to time–i.e. US government and corporate probes and attacks on the PRC.

A working assumption: the public has no way to know if such attacks occur, or whether or not the attacks succeed. Because the PRC doesn’t make this information public, and works very hard to suppress it. And the US govt reciprocates in self-interest.

Is this assumption valid?

I am sure you are correct. Probably every nation state with the capability is playing this game right now, to be ready for a counterstrike if any serious assets are attacked (such as a power grid or financial backbone).

Brian

With regard to the APT angle, it was RSA themselves that said they had analysed the attack as being within the class of APT.

So all subsequent comment on APT is down to RSA, not to others making assumptions.

It may simply be an attempt by EMC RSA to put spin on the attack to make it sound like it was “elite ninja attackers” that are impossible to stop rather than their own negligence.

Personally, my money is on negligence, based on the little information available and RSA’s behaviour.

If as many suspect it is the “seed database” that has been taken along with information pertaining to ownership of the individual tokens then you have to ask,

‘Why on earth was this on systems that were connected directly or indirectly to networks accessible from a public network?’

The bottom line is that SecurID did not fail and is not vulnerable if deployed properly. PEOPLE failed. The human element is and always will be the weakest link. You can see by the detail of the attack, http://www.v3.co.uk/v3-uk/news/2039746/rsa-details-secureid-attack-methodology, that it was social engineering that was the success. Without users doing things they were not supposed to be doing, or being tricked into doing them, this never would have happened.

I overheard two people talking the other day about how they hated logging into a site because they were required to change their passwords every 60 days and could not use any of the previous 5 passwords. The other person then replied, well you know that once you change it, you can instantly change it back to the first password you ever used and you are good to go so you only need to change it once really.
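One way a loophole like that can arise is a "no reuse of the last 5 passwords" rule with no minimum password age. The sketch below is a toy model under that assumption, not a description of the site in question; the `Account` class, the `cycle_back` helper, the fixed demo salt and the iteration count are all hypothetical.

```python
import hashlib
from collections import deque


class Account:
    """Toy account that enforces a password-history rule (illustration only)."""

    def __init__(self, password, history_size=5, min_age_days=0):
        self.salt = b"demo-salt"  # a real system would use a random per-user salt
        self.history = deque(maxlen=history_size)  # hashes of previous passwords
        self.min_age_days = min_age_days
        self.last_change_day = 0
        self.current = self._hash(password)

    def _hash(self, password):
        return hashlib.pbkdf2_hmac("sha256", password.encode(), self.salt, 10_000)

    def change_password(self, new_password, today):
        """Return True if the change is accepted under the policy."""
        if today - self.last_change_day < self.min_age_days:
            return False  # changed too recently
        digest = self._hash(new_password)
        if digest == self.current or digest in self.history:
            return False  # reuse of the current or a remembered password
        self.history.append(self.current)  # the oldest entry drops off when full
        self.current = digest
        self.last_change_day = today
        return True


def cycle_back(account, original, today):
    """Burn through throwaway passwords on one day until `original` is accepted.

    Returns the number of throwaway changes needed, or None if the policy blocks it.
    """
    count = 0
    while True:
        if account.change_password(original, today):
            return count
        if not account.change_password(f"throwaway-{count}", today):
            return None
        count += 1


if __name__ == "__main__":
    print(cycle_back(Account("first"), "first", today=30))
    print(cycle_back(Account("first", min_age_days=1), "first", today=30))
```

In this model the history rule alone only costs the user a few minutes of typing, while adding a minimum age stops the same-day cycling. Whether that trade-off is worth the extra annoyance is exactly the design question raised in the replies.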

So the bottom line is that technology is not the problem….people are the problem and any organization can spend millions and millions of dollars protecting their assets, but all it takes is 1 person to not follow policy, guidelines, procedure and training and it all falls to pieces.

@ T Roberts

“So the bottom line is that technology is not the problem….people are the problem and any organization can spend millions and millions of dollars protecting their assets, but all it takes is 1 person to not follow policy, guidelines, procedure and training and it all falls to pieces.”

I think you are absolutely right that people are frequently the weak link in otherwise bulletproof security systems.

And this begs yet again the dilemma that’s been with us since at least the dawn of the computer age. (Actually it’s been around since the invention of the wheel, but computers made it an ever-present part of our lives.) And the dilemma is: Are computers (and by extension, technology) here to serve people, or are people only here to serve the Machine?

If it is true that people are the weakest link in any security system, then shouldn’t a well-designed system take that fact into account and design around it?

If having to change your password every 60 days is so obnoxious that at least one person will find a way to not have to do it, shouldn’t that make the system designer see this as an inferior design option?

This does open a very big can of angry worms, but I’ll leave it at that.

People tend to be the weak link because they do not understand what the implications are of dodging around the system. We will never have a system that is capable of defending against all of the ways people can break it unless the system removes the person completely. The big thing that needs to be done is to close this loophole with GOOD training – what are the measures and WHY are they in place and what are the consequences to the overall system of not adhering to them. All too often security training I’ve seen focuses on a lot of policy and procedures that seem like something more for the IT admin and management and doesn’t directly relate to the “lowly” end user. The end user is not made aware that by dodging the controls they are making it easier for the bad guys to get in.

prairie_sailor,

So what you are proposing is that we close the open link. Bring people back into the equation, and show them why these little nuisances truly serve them in the end.

That’s certainly a proposal worth considering.

But will it work? I suspect my security professional friends would think that it won’t.

I was a member of the Navy Reserve for 7 years. Each year we were required to watch a DoD IT security presentation. The presentation talked a lot about laws and directives, and later versions even went through a kind of walk-through of how to keep things secure and what to do if things were found that weren’t secure. The problem was that as reservists we don’t handle much in the way of classified material, so virtually all of my fellow reservists treated the training as a joke. The main failures of the training were that (1) it did not illustrate clearly how even us lowly reservists could be a starting point for attacks on the larger system. (2) It did not illustrate how such things as weak passwords are easily compromised. (3) It did not illustrate methods of creating a strong password that could be easily remembered instead – like virtually all other IT security training I’ve seen, it emphasized creating passwords that met certain complexity requirements (easily defeated with easily guessable patterns) and that the password not be written down – no password management allowed. This thinking has to change, and IT managers need to get more real and come back down to the human level.
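A rough back-of-the-envelope calculation shows why "meets the complexity rules" and "hard to guess" are different things. The figures below are assumptions chosen for illustration: a 10,000-word list of common words, 10 likely trailing digits and 10 likely trailing symbols for the predictable pattern, 94 printable ASCII characters, and a 7,776-word list for a randomly chosen passphrase. The helper name `entropy_bits` is made up.

```python
import math


def entropy_bits(choices_per_position: int, positions: int) -> float:
    """Bits of entropy when each position is picked uniformly at random."""
    return positions * math.log2(choices_per_position)


# Predictable pattern: capitalised common word + one digit + one symbol.
# Assumed guess space: 10,000 words x 10 digits x 10 symbols.
pattern_bits = math.log2(10_000 * 10 * 10)

# Eight characters drawn truly at random from 94 printable ASCII characters.
random_chars_bits = entropy_bits(94, 8)

# Four words drawn at random from a 7,776-word list: easier to remember.
passphrase_bits = entropy_bits(7_776, 4)

if __name__ == "__main__":
    print(f"pattern password  : {pattern_bits:.1f} bits")
    print(f"8 random chars    : {random_chars_bits:.1f} bits")
    print(f"4-word passphrase : {passphrase_bits:.1f} bits")
```

Under these assumptions the pattern password satisfies a typical complexity rule yet sits around 20 bits, while the four-word passphrase lands in the same range as eight truly random characters and is far easier to remember.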

@prairie_sailor;

Interesting post, and I’ve seen that in some units. I don’t consider the Reserves lowly – I guess it depends on your mission and the unit attitude toward it.

I was in the Active Guard, and we took security very seriously; however we had top secrets because we were a nuclear unit. The internet was just starting; and documents were sent over POTS dedicated phone service, not the public digital switched network of today. Someone would have to have been tapping the line to gain anything, and be using the proprietary protocols and applications we had then, that no longer exist. I saw my first Unix virus, received by floppy disk in 1987, that arrived by mail from Ft. Lee, and it pretty much hosed our system for the entire field exercise.

Fortunately I had my own equipment(IBM MS-DOS), and drove on. Back then, we never put anything above medium security on a PC anyway. Everything was by TM manual, etc., stored in field safes, under 24/7 physical guard.

You’re correct to say this was a human failure. But I don’t think we can say RSA SecurID is still secure.

The analysis of its protocol showed its inputs were a 64-bit time value and a 64-bit secret. Due to derivation, the time value only has 22 bits of real entropy, which is brute-forceable. They also refused to state how they generate the device-specific secret. This is the critical part.

Clive Robinson suggested they might have a master seed or, I’ll add, a weak way of coming up with the secret value. 22-bit time plus weak random secret plus SecurID source code = game over. So, SecurID is only secure if the step RSA is quiet about is secure. If there’s a weakness, it will be exploitable in semi real time. The reason is that modern hardware can easily catch up to a timing token that uses a 4-bit 1 MHz CPU. 😉
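For a sense of scale, 22 bits is only 2^22 = 4,194,304 possibilities. The sketch below uses a toy code function, NOT the SecurID algorithm, and assumes the secret is already known to the attacker (for example through a weak generation scheme or a leak). The names `toy_code` and `recover_time_values` are hypothetical.

```python
import hashlib


def toy_code(secret: bytes, time_value: int) -> str:
    """Toy 6-digit code from a secret and a time value (not RSA's algorithm)."""
    digest = hashlib.sha256(secret + time_value.to_bytes(8, "big")).digest()
    return str(int.from_bytes(digest[:4], "big") % 1_000_000).zfill(6)


def recover_time_values(secret: bytes, observed_code: str, bits: int = 22) -> list:
    """Try every time value in a 2**bits space and keep those that reproduce the code.

    A 6-digit code can collide, so more than one candidate may come back.
    """
    return [t for t in range(2 ** bits) if toy_code(secret, t) == observed_code]


if __name__ == "__main__":
    print(2 ** 22)  # size of the full search space
    secret = b"hypothetical-known-secret"
    observed = toy_code(secret, 12_345)
    # Smaller space for a quick demo; bits=22 covers the whole 22-bit range.
    print(recover_time_values(secret, observed, bits=16))
```

Even in plain Python the full 22-bit sweep is a matter of seconds on a desktop machine, which is the sense in which such a search would be "semi real time".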

Agreed, but you actually had a key piece in your statement that is at the root of the problem: “plus weak random secret.” What can you do if your users have a PIN that is 1234? Of course that is easily hacked, and who created it? The end user.

Security is not meant to be easy or pleasant, but far too often people do not do anything about it until it is too late because they do not want to be inconvenienced. Bottom line is that until people really understand the ramifications of weak security, nothing will change.

Haha, shouldn’t you be charging for that kind of knowledge?!

Interesting bit,

Probably Brian and the more advanced readers of this column are already aware, but I read yesterday on this website:

http://tweakers.net/nieuws/73624/rsa-hackers-verkregen-toegang-via-phishing-mails.html

(in Dutch) that the hackers got access to RSA systems via spear-phishing a selected group of employees with… an Excel file containing a 0-day exploit in an embedded .swf Adobe Flash file.

Which I think is the vulnerability about which Brian wrote here:

http://krebsonsecurity.com/2011/03/adobe-attacks-on-flash-player-flaw/

@Deborah Seriously, you are using Zone Alarm 6.5? They are on version 9.2.102.000 for the free version. Update Zone Alarm or switch (because of the scareware tactics by their marketing people). You are using technology from several years ago. What do you think updates are made for, just useless time-wasting activities so programmers can keep their jobs? Would love to know what version of Flash and Java you are using.

I think that some people just don’t update because of laziness and the fact that an interface might change.

Sorry, wrong article. Meant for the Computer for rent article. It’s late.

You made some nice points there. I looked on the internet for the topic and found most guys will agree with your blog.