A monster distributed denial-of-service attack (DDoS) against KrebsOnSecurity.com in 2016 knocked this site offline for nearly four days. The attack was executed through a network of hacked “Internet of Things” (IoT) devices such as Internet routers, security cameras and digital video recorders. A new study that tries to measure the direct cost of that one attack for IoT device users whose machines were swept up in the assault found that it may have cost device owners a total of $323,973.75 in excess power and added bandwidth consumption.

My bad.

But really, none of it was my fault at all. It was mostly the fault of IoT makers for shipping cheap, poorly designed products (insecure by default), and the fault of customers who bought these IoT things and plugged them onto the Internet without changing the things’ factory settings (passwords at least.)

The botnet that hit my site in Sept. 2016 was powered by the first version of Mirai, a malware strain that wriggles into dozens of types of IoT devices left exposed to the Internet and running with factory-default settings and passwords. Systems infected with Mirai are forced to scan the Internet for other vulnerable IoT devices, but they’re just as often used to help launch punishing DDoS attacks.

By the time of the first Mirai attack on this site, the young masterminds behind Mirai had already enslaved more than 600,000 IoT devices for their DDoS armies. But according to an interview with one of the admitted and convicted co-authors of Mirai, the part of their botnet that pounded my site was a mere slice of firepower they’d sold for a few hundred bucks to a willing buyer. The attack army sold to this ne’er-do-well harnessed the power of just 24,000 Mirai-infected systems (mostly security cameras and DVRs, but some routers, too).

These 24,000 Mirai devices clobbered my site for several days with data blasts of up to 620 Gbps. The attack was so bad that my pro-bono DDoS protection provider at the time — Akamai — had to let me go because the data firehose pointed at my site was starting to cause real pain for their paying customers. Akamai later estimated that the cost of maintaining protection against my site in the face of that onslaught would have run into the millions of dollars.
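For a rough sense of scale, dividing the peak rate by the number of bots gives the average load each device had to push. This back-of-the-envelope sketch assumes every one of the 24,000 devices contributed equally to the 620 Gbps peak. Real botnets are uneven, so treat the result as an average, not a per-device measurement.

```python
def per_device_mbps(total_gbps: float, devices: int) -> float:
    """Average attack bandwidth per bot in Mbps (1 Gbps = 1,000 Mbps),
    assuming the load is spread evenly across all devices."""
    if devices <= 0:
        raise ValueError("devices must be positive")
    return total_gbps * 1_000 / devices


# Figures from the post: 620 Gbps peak, 24,000 Mirai-infected devices.
print(f"{per_device_mbps(620, 24_000):.1f} Mbps per device")  # 25.8 Mbps per device
```

In other words, each hijacked camera or DVR only needed a modest home-broadband upstream for the swarm as a whole to reach hundreds of gigabits.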

We’re getting better at figuring out the financial costs of DDoS attacks to the victims (five-, six- or seven-figure losses) and to the perpetrators (zero to hundreds of dollars). According to a report released this year by DDoS mitigation giant NETSCOUT Arbor, 56 percent of organizations said DDoS attacks cost them between $10,000 and $100,000 last year, almost double the proportion from 2016.

But what if there were also a way to work out the cost of these attacks to the users of the IoT devices that get snared by DDoS botnets like Mirai? That’s what researchers at the University of California, Berkeley School of Information sought to determine in their new paper, “rIoT: Quantifying Consumer Costs of Insecure Internet of Things Devices.”

If we accept the UC Berkeley team’s assumptions about costs borne by hacked IoT device users (more on that in a bit), the total cost of added bandwidth and energy consumption from the botnet that hit my site came to $323,973.75. This may sound like a lot of money, but remember that, broken down among 24,000 attacking drones, the per-device cost comes to just $13.50.
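The per-device figure is simple division. A quick check with Python’s standard `decimal` module (used here only to avoid binary floating-point rounding on currency) confirms that the $323,973.75 total works out to about $13.50 for each of the 24,000 devices.

```python
from decimal import Decimal, ROUND_HALF_UP


def per_device_cost(total_usd: str, devices: int) -> Decimal:
    """Split a total dollar cost evenly across devices, rounded to cents."""
    share = Decimal(total_usd) / devices
    return share.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


print(per_device_cost("323973.75", 24_000))  # 13.50
```

The exact quotient is about $13.499, which is why the study’s total and the “$13.50 per device” figure agree.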

So let’s review: The attacker who wanted to clobber my site paid a few hundred dollars to rent a tiny portion of a much bigger Mirai crime machine. That attack would likely have cost millions of dollars to mitigate. The consumers in possession of the IoT devices that did the attacking probably realized a few dollars in losses each, if that. Perhaps forever unmeasured are the many Web sites and Internet users whose connection speeds are often collateral damage in DDoS attacks.

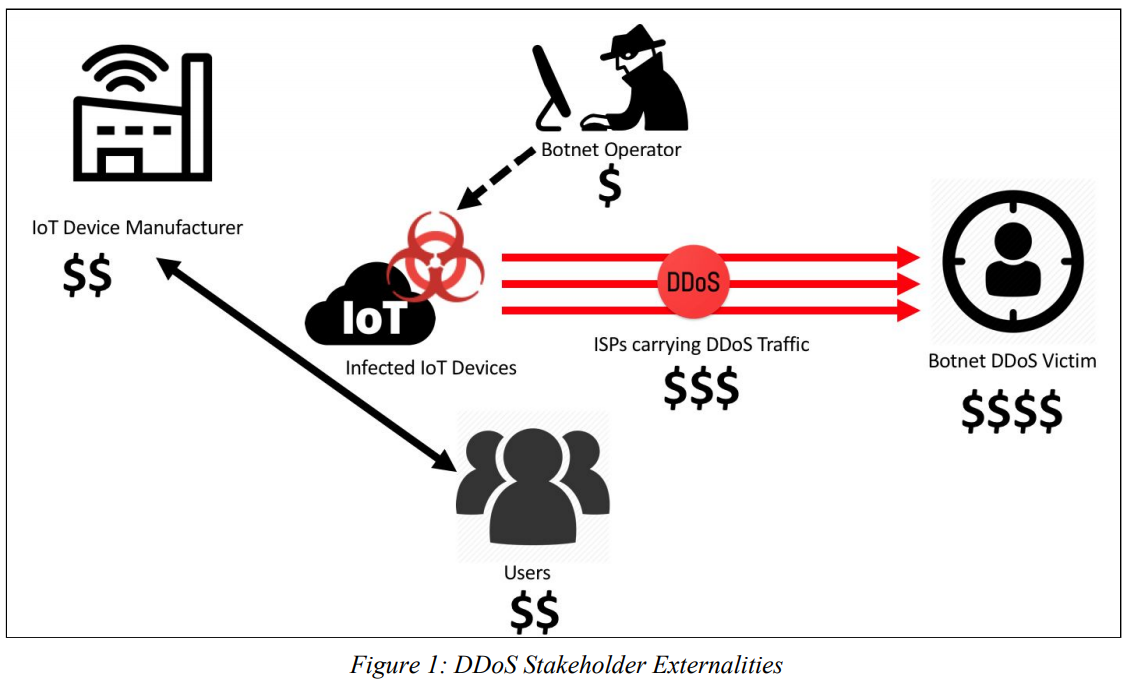

Anyone noticing a slight asymmetry here in either costs or incentives? IoT security is what’s known as an “externality,” a term used to describe “positive or negative consequences to third parties that result from an economic transaction. When one party does not bear the full costs of its actions, it has inadequate incentives to avoid actions that incur those costs.”

In many cases, negative externalities are synonymous with problems that the free market has a hard time rewarding individuals or companies for fixing or ameliorating, much like environmental pollution. The common theme with externalities is that the pain points are so diffuse, and the costs of the problem so distributed across international borders, that doing something meaningful about it often takes a global effort with many stakeholders, who can hopefully settle upon concrete steps for action and metrics to measure success.

The paper’s authors explain the misaligned incentives on two sides of the IoT security problem:

- “On the manufacturer side, many devices run lightweight Linux-based operating systems that open doors for hackers. Some consumer IoT devices implement minimal security. For example, device manufacturers may use default username and password credentials to access the device. Such design decisions simplify device setup and troubleshooting, but they also leave the device open to exploitation by hackers with access to the publicly-available or guessable credentials.”

- “Consumers who expect IoT devices to act like user-friendly ‘plug-and-play’ conveniences may have sufficient intuition to use the device but insufficient technical knowledge to protect or update it. Externalities may arise out of information asymmetries caused by hidden information or misaligned incentives. Hidden information occurs when consumers cannot discern product characteristics and, thus, are unable to purchase products that reflect their preferences. When consumers are unable to observe the security qualities of software, they instead purchase products based solely on price, and the overall quality of software in the market suffers.”

The UC Berkeley researchers concede that their experiments, in which they measured the power draw and bandwidth consumption of various IoT devices they’d infected with a sandboxed version of Mirai, suggested that the scanning and DDoSing activity prompted by a Mirai infection added only negligible amounts of power consumption for the infected devices.

Thus, most of the loss figures cited for the 2016 attack rely heavily on estimates of how much the excess bandwidth created by a Mirai infection might cost users directly, and as such I suspect the $13.50-per-machine estimate is on the high side.

No doubt, some Internet users get online via an Internet service provider that imposes a daily “bandwidth cap,” such that exceeding the allotted amount can incur overage fees and/or relegate the customer to a slower, throttled connection for some period afterward.

But for a majority of high-speed Internet users, the added bandwidth use from a router or other IoT device on the network being infected with Mirai probably wouldn’t show up as an added line charge on their monthly bills. I asked the researchers about the considerable wiggle factor here:

“Regarding bandwidth consumption, the cost may not ever show up on a consumer’s bill, especially if the consumer has no bandwidth cap,” reads an email from the UC Berkeley researchers who wrote the report, including Kim Fong, Kurt Hepler, Rohit Raghavan and Peter Rowland.

“We debated a lot on how to best determine and present bandwidth costs, as it does vary widely among users and ISPs,” they continued. “Costs are more defined in cases where bots cause users to exceed their monthly cap. But even if a consumer doesn’t directly pay a few extra dollars at the end of the month, the infected device is consuming actual bandwidth that must be supplied/serviced by the ISP. And it’s not unreasonable to assume that ISPs will eventually pass their increased costs onto consumers as higher monthly fees, etc. It’s difficult to quantify the consumer-side costs of unauthorized use — which is likely why there’s not much existing work — and our stats are definitely an estimate, but we feel it’s helpful in starting the discussion on how to quantify these costs.”
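The researchers’ point that costs are “more defined” only when bots push a user over a cap can be made concrete. Below is a minimal sketch assuming a hypothetical monthly cap billed in fixed-size overage blocks. The 50 GB block size and $10 price are placeholders, not any particular ISP’s terms.

```python
import math
from typing import Optional


def overage_charge(total_gb: float, cap_gb: float,
                   block_gb: float, price_per_block: float) -> float:
    """Charge for usage above the cap, billed per started block."""
    over = max(0.0, total_gb - cap_gb)
    return math.ceil(over / block_gb) * price_per_block


def bot_overage_cost(normal_gb: float, bot_gb: float, cap_gb: Optional[float],
                     block_gb: float = 50.0, price_per_block: float = 10.0) -> float:
    """Extra monthly charge attributable to bot traffic alone.

    Hypothetical billing model: cap_gb=None means an uncapped plan, so the
    bot traffic never shows up on the bill (the ISP still carries it).
    """
    if cap_gb is None:
        return 0.0
    with_bot = overage_charge(normal_gb + bot_gb, cap_gb, block_gb, price_per_block)
    without_bot = overage_charge(normal_gb, cap_gb, block_gb, price_per_block)
    return with_bot - without_bot


print(bot_overage_cost(900, 50, None))   # 0.0  (no cap)
print(bot_overage_cost(1000, 50, 1024))  # 10.0 (bot traffic pushes usage over the cap)
print(bot_overage_cost(1100, 10, 1024))  # 0.0  (already over; stays in the same paid block)
```

The same bot traffic can cost a household nothing, a full overage block, or nothing again, depending on where its normal usage already sits relative to the cap. That is a large part of the “wiggle factor” in any per-device estimate.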

Measuring bandwidth and energy consumption may turn out to be a useful and accepted tool to help more accurately measure the full costs of DDoS attacks. I’d love to see these tests run against a broader range of IoT devices in a much larger simulated environment.

If the Berkeley method is refined enough to become accepted as one of many ways to measure actual losses from a DDoS attack, the reporting of such figures could make these crimes more likely to be prosecuted.

Many DDoS attack investigations go nowhere because targets of these attacks fail to come forward or press charges, making it difficult for prosecutors to prove any real economic harm was done. Since many of these investigations die on the vine for a lack of financial damages reaching certain law enforcement thresholds to justify a federal prosecution (often $50,000 – $100,000), factoring in estimates of the cost to hacked machine owners involved in each attack could change that math.

But the biggest levers for throttling the DDoS problem are in the hands of the people running the world’s largest ISPs, hosting providers and bandwidth peering points on the Internet today. Some of those levers I detailed in the “Shaming the Spoofers” section of The Democratization of Censorship, the first post I wrote after the attack and after Google had brought this site back online under its Project Shield program.

By the way, we should probably stop referring to IoT devices as “smart” when they start misbehaving within three minutes of being plugged into an Internet connection. That’s about how long your average cheapo, factory-default security camera plugged into the Internet has before getting successfully taken over by Mirai. In short, dumb IoT devices are those that don’t make it easy for owners to use them safely without being a nuisance or harm to themselves or others.

Maybe what we need to fight this onslaught of dumb devices are more network operators turning to ideas like IDIoT, a network policy enforcement architecture for consumer IoT devices that was first proposed in December 2017. The goal of IDIoT is to restrict the network capabilities of IoT devices to only what is essential for regular device operation. For example, it might be okay for network cameras to upload a video file somewhere, but it’s definitely not okay for that camera to then go scanning the Web for other cameras to infect and enlist in DDoS attacks.
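The core idea behind IDIoT, as described above, is a default-deny allowlist for each kind of device. What follows is not IDIoT’s actual implementation. It is a minimal Python sketch of the idea, with made-up device types, hostnames and ports.

```python
from typing import Dict, FrozenSet, Tuple

Endpoint = Tuple[str, int]  # (destination host, destination port)

# Hypothetical per-device-type allowlists: only the flows each device needs
# for normal operation. Hostnames are placeholders, not real vendor services.
POLICY: Dict[str, FrozenSet[Endpoint]] = {
    "camera": frozenset({
        ("uploads.camera-vendor.example", 443),  # push recorded video
        ("time.camera-vendor.example", 123),     # NTP time sync
    }),
    "thermostat": frozenset({
        ("api.thermostat-vendor.example", 443),
    }),
}


def is_allowed(device_type: str, dst_host: str, dst_port: int) -> bool:
    """Default deny: a flow passes only if it is on that device type's allowlist.

    Unknown device types get an empty allowlist, so they are blocked entirely.
    """
    return (dst_host, dst_port) in POLICY.get(device_type, frozenset())


print(is_allowed("camera", "uploads.camera-vendor.example", 443))  # True
print(is_allowed("camera", "198.51.100.7", 23))                     # False: Telnet attempt
```

Because the camera’s policy never mentions Telnet (the service Mirai brute-forces to spread), an infected camera trying to scan for new victims simply has nowhere to send those packets.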

So what does all this mean to you? That depends on how many IoT things you and your family and friends are plugging into the Internet and your/their level of knowledge about how to secure and maintain these devices. Here’s a primer on minimizing the chances that your orbit of IoT things becomes a security liability for you or for the Internet at large.

WRT “When one party does not bear the full costs of its actions, it has inadequate incentives to avoid actions that incur those costs”: Nassim Nicholas Taleb, author of a number of books including The Black Swan, Antifragile and Skin in the Game, writes extensively on this. His work is very applicable to cyber (crime), and he is starting to speak in the cyber arena too (http://www.fooledbyrandomness.com). Some of us are working on/researching cost figures for cyber (crime) using his work.

Pet peeve: “$323,973.75”

Since this is really just a wild-assed guess, it would be nice if Berkeley emphasized this by using less precise figures, like “about $300,000.”

That was Krebs’ estimate using their online tool, which outputs a figure based on the data you input. Their paper actually says “the median cost is close to $300,000”.

It seems that in some ways we have come a long way since the first telegraph or semaphore, and in others not really that far, now that we have the digital internet and so many digital devices, operating systems and software.

In the early days of the telephone, Northern Electric was started to manufacture telephones so that there would be uniformity. There is no real uniformity among all the digital devices, and those using them expect the devices to do everything for them, including protect them.

I used to use a user-configurable firewall that was very good: it blocked everything in or out without permission, and it also showed all attempts on my system. This firewall is now very old, but I feel this is what we need on all of our devices, along with educating people to protect themselves, much like safe-sex education.

Maybe some will listen and others will carry on as before.

It was Gilfoyle. lmao!

You’re a legend, Brian Krebs.

Keep up the good work!

I find it extremely hard to believe that someone would make an attempt to knock your blog online.

Nevertheless, you are a legend, Brian Krebs. Keep up the good work!

“online” – was there a misspelling?

I can believe it, because many of us couldn’t reach his site for days during this DDoS attack! He was definitely knocked OFFLINE.

I do remember being unable to access his site, yet I wouldn’t have guessed any intentional disruption to the blog.

Someone with a dislike of Krebs, perhaps?

You haven’t been paying enough attention then, lol. I believe this site was hit after Brian doxxed the owners of vDOS, which led to their arrest, or after he doxxed the creator of Mirai, which eventually led to his arrest. I’m willing to bet there are quite a few criminals out there who don’t like Brian.

The externality analogy is okay, but in my view that applies more to situations like Person A taking an action that hurts Person B without intending to do so. For example, I drive my car, which pollutes your air, hurting you. Driving my car creates an externality on you, but I am not intending to hurt you, and probably don’t know who you are.

I think a better analogy might be that of asymmetric or hybrid warfare, where Combatant A (e.g. an insurgency) can attack Combatant B (e.g. an army) at low cost, because it attacks in limited unpredictable locations, uses unexpected strategies or weapons that are hard to counter, etc. Think of Iraq after the “Mission Accomplished” fiasco: small groups of insurgents with inexpensive weapons caused the American government to spend billions of dollars to defend thousands of US soldiers.

Just a thought: calculate the costs and assess a treble-damages fine on the miscreants. That would probably include the IoT device makers.

Bought a new router the other day so I could handle the new fiber speeds. The technician thought I was crazy for changing the administrator-side login password (many of you know the one: “admin”/“password”). Talk about idiots; those manufacturers need to make it easier for newbies to set up things like that. Oddly enough, this manufacturer did supply its own user ID and generated password for the wireless side, but not for the LAN Ethernet administration side.

My technician just couldn’t believe that malware can take over your router from the user side of the network!!! How many years has this been a problem??!! Sheesh, if he didn’t know this, how is Joe Sixpack going to figure it out??!!

Unsurprisingly, many router manufacturers ship their routers with a configuration of the sort you just described, which makes installation ‘easier’ for the average consumer but is still a terrible security practice.

I’ve had a pretty good experience with the AirPort line of routers, manufactured by Apple; they’re fairly simple to install, but are coupled with considerable in-built security.

Many ‘firmwares’ pre-installed with routers are just down-right horrendous.

Just remember to disable WAN side administration in case the criminals find a backdoor or an OEM master key; and change the factory default password for the LAN side access.

Unfortunately, Apple has recently discontinued the AirPort line.

I read about this, and it’s very sad to me; but, since I’ve never had to repair (or service) one, I don’t plan to ‘replace’ my AirPort station any time soon.

I don’t know why, but for me, wireless routers only last me for about a year. I’ve bought cheap ones, I’ve bought moderately expensive ones. Still get about a year out of them. Until I bought an Apple Airport. And now they’re abandoning that product line. I just hope it lasts until they decide to start making them again. Thus far, it’s a good three years old and counting.

It’s quite odd. They’re plugged in to a UPS, and I’ve got fiber to the wall of the house and no Ethernet in the walls of the house, so the routes for stray lightning to get into the router are minimal, and no other electronics are getting blown out. Still, it was a regular occurrence over the last decade+.

Ne’er-do-well? Don’t you mean “miscreant?” Seriously, how can owners of the “things” tell when their thing is misbehaving?

By regularly monitoring network activity, and by following his guide on how to prevent such infections: https://krebsonsecurity.com/2018/01/some-basic-rules-for-securing-your-iot-stuff/

So Akamai provided you with a service until the time that you REALLY needed it. They should thank you for allowing them to deal with something new. As it is, it just says their DDoS protection is limited. While that may not be completely fair, if they think they won’t have the same problem again with a different client in the future, they are probably wrong.

But the larger issue to me is that the ISP industry needs to get organized and find ways to deal with large-scale problems. If the Mirai attack only used about 1/25 of the botnet at that time, just imagine what its full potential was then, and may be now.

ISPs need to be able to deal with attacks like this. I see no reason why an attack on a site can’t be identified and then all or some bot traffic be redirected to 127.0.0.1 for a period of time. The traffic generated by the redirection could be a signal to upstream ISPs that there is a problem with local equipment.

At the kind of scale we are talking about (500Gbps+), all DDoS mitigation, regardless of the company delivering it, is limited. The issue is not the devices for scrubbing data, but the peering points and peering connections between ISPs and network providers.

DDoS mitigation providers like to talk about “mitigation capacity”, but this is an aggregated capacity measured over dozens of peering points and many fibre connections. Attack traffic is not always spread evenly over peering points. There are bottlenecks.
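The gap between aggregate “mitigation capacity” and per-link limits is easy to show with made-up numbers: ten hypothetical 100 Gbps peering links add up to 1,000 Gbps, yet a 620 Gbps attack that lands unevenly can still swamp one of them. This sketch ignores legitimate traffic, so it understates real congestion.

```python
from typing import List, Sequence


def overloaded_links(capacity_gbps: Sequence[float],
                     attack_share: Sequence[float],
                     attack_gbps: float) -> List[int]:
    """Indices of links whose slice of the attack exceeds that link's capacity.

    attack_share[i] is the fraction of total attack traffic arriving on link i.
    """
    if len(capacity_gbps) != len(attack_share):
        raise ValueError("need one share per link")
    return [i for i, (cap, share) in enumerate(zip(capacity_gbps, attack_share))
            if attack_gbps * share > cap]


links = [100.0] * 10              # hypothetical: 10 x 100 Gbps = 1,000 Gbps aggregate
even = [0.1] * 10                 # attack spread perfectly evenly
skewed = [0.4] + [0.6 / 9] * 9    # 40% of the attack lands on link 0

print(sum(links))                            # 1000.0
print(overloaded_links(links, even, 620))    # []
print(overloaded_links(links, skewed, 620))  # [0]
```

On paper the network has well over the 620 Gbps needed; in practice, link 0 receives about 248 Gbps against a 100 Gbps pipe, and the customers behind it go down anyway.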

A Cloud security provider like Akamai has far more peering and greater capacity than most ISPs to try and soak up the impact of a large-scale DDoS attack, but that scale of infrastructure costs money to build and maintain and cannot be built on the principle of offering unlimited mitigation to every customer at the same time, because the cost per customer would be prohibitive. No-one knows how a given DDoS attack will appear across the peering points. There may be geographical bias (e.g., infection of Chinese-language browsers) or attack traffic blended with legitimate traffic (reflection attacks), and so each one will stress a different range of peering points.

Many ISPs resort to black-holing target networks to protect their other paying customers, preferring to have one difficult conversation with the victim of a DDoS attack rather than hundreds of difficult conversations with all of their customers. However, this is not satisfactory for businesses that cannot afford any outages at all.

Also, relying on on-premises mitigation for first visibility of a DDoS attack can fail when the site’s connection to the public Internet is itself flooded or disabled. But distinguishing DDoS attack traffic from legitimate website traffic in an application-layer attack, or when traffic is encrypted, might not be possible in the cloud.

Although you might not be able to see any reason why certain solutions to DDoS attacks would not be easy to implement, I can assure you that the industry is refining responses to the DDoS threat landscape continually, and that attacks and the defences against them are becoming much more sophisticated. At the moment, some attackers’ ability to DDoS exceeds anyone’s ability to mitigate the impact. It’s not a technical or even a political issue, but an economic one.

Max – excellent illustration of the complexity of fighting big attacks. Even if defenders have really big pipes you still need to take into account the infrastructure those big pipes are connected to.

How about a federal 1% tax on any internet connected device or platform? The revenue could be held in escrow with some being used to pay huge bug bounties and fund security research/testing. If a company could provide a clean bill of health for a product after 10 years, they could get their taxes back.

Government should never be entrusted with public money, regardless of the purity of intentions. It will always find a way to waste or lose that money, by virtue of the complexity and size of government itself.

Great article as always, but I have a few nits to pick based on my two decades of working in IT security.

First of all is the assertion that “It was mostly the fault of [vendors]… and the fault of customers who bought these IoT things and plugged them onto the Internet without changing the things’ factory settings (passwords at least.)”

This is one of those things where you can be right and wrong at the same time, but even the part that’s right doesn’t matter.

Yes, people should be more IT security conscious. They should also save money, take care of their bodies, protect the environment, etc., etc., etc. These are based on *our* perceptions of what people should do, but different people have different priorities. Whether this is right or wrong or good or bad (even if such things are possible to determine objectively), it wouldn’t matter; people are going to do what they do anyway. Like the old military saying goes, you go to war with the army you have, not the army you want. In this case, we go to fight the security battles with the consumers and vendors we have, not the consumers and vendors we want. Whether we are right or wrong or they are right or wrong is irrelevant – the situation is what it is and we have to deal with it as it is.

We have about 2-3 generations of consumers taught that anything they buy is at least reasonably safe due to various consumer regulations and nanny-stating (leaving aside the moral and consequentialist arguments of whether this is good or bad or indifferent). This is combined with a fairly stark regression in critical thinking skills over the last two generations. It is unrealistic to expect consumers to develop habits for exercising any sort of security judgement in their purchasing decisions unless the costs of not doing so become both obvious and significant from their perspective. Right now they are neither, and don’t hold your breath waiting for that to change.

On the vendor side, we don’t even have a proven theory for how to develop “safe, secure” computing devices. As the IT sector matures and established vendors have had difficulty finding new ways to add value to customers, they have been pushed by private equity and Wall Street to subscription-based services that provide more reliable revenue streams and growth, at least in the short- to mid-term (I suspect this will eventually backfire spectacularly – it builds too many opportunities to undercut on pricing and purchasing terms). In some cases, vendors have found ways to deliver additional value in this model but many have not. In any case, this has strongly pushed the “continuous release” software / firmware model which in practice has been reducing software quality control at a time it’s needed most (“clearing minefields with cattle, and your users are the cattle” is the most apt description I’ve found of this). Another vendor factor is that courts have found very little to no liability for most software flaws – I’m not sure if there’s a productive way to address this; it is what it is and I’m just pointing it out. It’s very expensive to have a good software lifetime management system in place, and not all vendors have the margins and profits to allow this (especially at the low end). Addressing this would significantly increase prices for many IoT devices and probably eliminate many vendors from markets (and, in some cases, entire markets) in the short term.

I think that the only practical approach will be to assume that user behavior will not change significantly and that IoT software quality will largely remain a dumpster fire. This means we have to have additional layers and methods (security systems) to deal with these issues, and that these systems will really need to “up their game” both in terms of efficacy and accessibility to non-geeks.

One of the biggest problems in the field is that it is difficult for tools to replace protocols. In IT, you warm up a device with a checklist in hand and ensure that each step builds upon the security of the previous state so that you aren’t compromising previous or subsequent steps.

In userland, you plug-in a widget and *BOOM* you’re on the internet!

Just generating a secure warm-up checklist for a Raspberry Pi Zero (a $5 Linux computer) is a complicated pain-in-the-tuchis. Wouldn’t it be nice if it insisted on having a real firewalled login/password account before it enabled network interfaces?

Erik, I like the way you write, sir.

Well stated Erik. I came here to say this but you did a far better job than I could have.

My view is that at this time the only way to ensure that your network and IoT devices are not enslaved is to not own any! I refuse to subject my environment to these criminals, and I will not enrich vendors that do not give a hoot what happens to others as a result of their cr…y products. Keep fighting Brian. Stick it in their eye whenever you can.

On a Raspberry Pi, you essentially have all the security tools that Debian has. You can make the little credit-card-sized device nearly as hardened as an international bank.

But it doesn’t ship that way. All Pis ship with the user “pi” and the password “raspberry,” and a frightening number of users plug in to the internet without changing this.

A part of the problem is default passwords. What if IoT devices were shipped with no default password, i.e. with binary zeros in the password field in non-volatile storage? Further, the factory reset button sets that default password back to zeros.

If the setup process required each user to set a password, and the device DID NOT WORK until a password was set, then there’d be no more default passwords. By “not work” I mean the device should not perform its intended function until a password is set; cameras wouldn’t take pictures, routers wouldn’t route packets, etc. until a password is set.

To be sure, there’d be a bunch of “password1” and “12345” but there wouldn’t be any more default passwords. That won’t solve the problem, but it ought to mitigate it somewhat.
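The “no function until a password is set” rule described above is essentially a small state machine. Here is a minimal sketch with a hypothetical `Device` class standing in for real firmware. “Unset” is modeled as `None` rather than literal binary zeros, and the password is stored as a salted PBKDF2 hash from Python’s standard `hashlib`, never in plain text.

```python
import hashlib
import hmac
import os
from typing import Optional


class Device:
    """Hypothetical IoT device that stays inert until its owner sets a password."""

    def __init__(self) -> None:
        # None means "no password yet" (the commenter's all-zeros field).
        self._salt: Optional[bytes] = None
        self._hash: Optional[bytes] = None

    @property
    def provisioned(self) -> bool:
        return self._hash is not None

    @staticmethod
    def _derive(password: str, salt: bytes) -> bytes:
        # Store a salted PBKDF2 hash, never the password itself.
        return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 200_000)

    def set_initial_password(self, password: str) -> None:
        if self.provisioned:
            raise PermissionError("password already set; authenticate to change it")
        if not password:
            raise ValueError("an empty password is not allowed")
        self._salt = os.urandom(16)
        self._hash = self._derive(password, self._salt)

    def check_password(self, password: str) -> bool:
        if self._salt is None or self._hash is None:
            return False
        return hmac.compare_digest(self._derive(password, self._salt), self._hash)

    def perform_function(self) -> str:
        # Cameras don't record, routers don't route, until provisioning is done.
        if not self.provisioned:
            raise RuntimeError("device disabled: set a password first")
        return "operating"

    def factory_reset_button(self) -> None:
        # Physical reset wipes the password back to "unset", so the device
        # goes inert again instead of falling back to a shared default.
        self._salt = None
        self._hash = None


cam = Device()
cam.set_initial_password("a long passphrase")
print(cam.perform_function())  # operating
```

The key design choice is that a reset returns the device to the inert state rather than to a known credential, so there is never a factory default for a botnet to guess.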

Fwiw, I support this approach…

There are still risks, e.g. what if there’s an easy way to force a device to reset without credentials? Malware would end up using it. But, that’s just a bug and the standards (we don’t have any) should note that users must be authenticated or have physical access to reset a device.

Another thing that would help is a model where devices always update. Today, most devices never update. I have a phone running Android 7.x, there’s an update from the vendor to 8.0, but I may never get it because the carrier hasn’t made it available, and that’s a relatively good case (there is an update, I know about it, I could manually install it if I wanted to).

Back when email was new and exciting, I was taught that senders of spam had to be careful. Because, if someone sent spam, they could get the equivalent of a brick (many many answering messages) back in their own accounts.

Any way to do this now?

These days spammers use distributed systems. Since their control centers aren’t sending the spam directly, there isn’t much that can be done. And if they’re renting or stealing resources for the control centers, the people behind them can just pick new centers if someone does shut down the current ones.

The way to solve these problems is:

1. DMARC (SPF+DKIM): more or less requiring all of these (plus TLS, proper certificates, and other standards as they’re accepted).

2. Some level of greylisting, i.e. if a domain hasn’t sent email to a user/domain before, assume it’s suspicious.

3. Active prosecution of spammers/hackers.
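Point 2 is usually implemented a little differently from “assume it’s suspicious”: classic greylisting keys on the (client IP, sender, recipient) triplet and answers the first attempt with a temporary failure. Legitimate mail servers queue and retry; greylisting bets that much spam software won’t. The sketch below is a minimal, hypothetical illustration of that approach. The 300-second delay is an assumed value, and real deployments also expire old entries and allowlist known senders, which is omitted here.

```python
import time
from typing import Callable, Dict, Tuple

Triplet = Tuple[str, str, str]  # (client IP, envelope sender, envelope recipient)


class Greylist:
    """Triplet greylisting: defer the first attempt, accept a later retry."""

    def __init__(self, min_delay_s: float = 300.0,
                 clock: Callable[[], float] = time.time) -> None:
        self.min_delay_s = min_delay_s
        self.clock = clock  # injectable so the demo can fake the passage of time
        self.first_seen: Dict[Triplet, float] = {}

    def check(self, client_ip: str, sender: str, recipient: str) -> str:
        key: Triplet = (client_ip, sender.lower(), recipient.lower())
        now = self.clock()
        first = self.first_seen.setdefault(key, now)  # remember first attempt
        if now - first >= self.min_delay_s:
            return "250 OK"
        return "451 4.7.1 Greylisted, please try again later"


fake_now = [0.0]
gl = Greylist(min_delay_s=300, clock=lambda: fake_now[0])
print(gl.check("203.0.113.5", "a@example.org", "b@example.net"))  # first try: 451 ...
fake_now[0] = 60
print(gl.check("203.0.113.5", "a@example.org", "b@example.net"))  # too soon: 451 ...
fake_now[0] = 400
print(gl.check("203.0.113.5", "a@example.org", "b@example.net"))  # 250 OK
```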

The first one is probably best described as akin to requiring that all highway vehicles pass current emissions standards annually (where the standard is increased annually). We should do the same thing for drivers of actual cars too, but that’s the topic for a different blog.

The Internet was designed under a model with a mantra of “Be conservative in what you send, be liberal in what you accept”. That was great assuming that everyone was friendly and competent. But in the modern age, it turns out that neither is universally true.

What this means for consumers is that some systems would have to be effectively shunned from participating in Internet-based systems (including email delivery) unless they were able to pay for someone to perform frequent upgrades.

We’re slowly doing this in some areas. Browsers dropped support for “gopher:”, and are in the process of dropping support for “ftp:”. At some point, we’ll drop support for unencrypted “http:” for users (it may be necessary for captive bootstrapping). Browsers have dropped support for old encryption ciphers/strengths (e.g. 40-bit), and protocols (e.g. SSL1/SSL2/SSL3; TLS1.0 is being dropped early this year).
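The same “stop accepting old protocols” policy can be set in client code. The sketch below uses Python’s standard `ssl` module (Python 3.7+) and is offered as an analogy to what browsers are doing, not as how browsers do it. The TLS 1.2 floor is an assumed policy choice.

```python
import ssl


def make_strict_client_context() -> ssl.SSLContext:
    """Client-side TLS context that refuses SSLv3, TLS 1.0 and TLS 1.1."""
    # create_default_context() already verifies certificates against the
    # system trust store and checks hostnames, so a self-signed peer
    # certificate fails unless it is explicitly trusted.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    # Refuse to negotiate anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx
```

To use it, pass the context to `ctx.wrap_socket(sock, server_hostname=...)` or to a library that accepts an `SSLContext`. A peer that can only speak TLS 1.0 then fails the handshake instead of silently downgrading.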

As someone who actually maintains a number of email servers, the work required to update them to current standards is somewhat painful, and some of our systems’ peers are even worse (e.g. using self-signed certificates). But, in the long run, I’d rather be forced to upgrade and be able to tell our peers that we’re sorry we can’t interchange email unless they upgrade.

I sure hope your Insurance Broker recommended Cyber Liability coverage with Business Interruption coverage! If not, talk to a good Lawyer.

“If we accept the UC Berkeley team’s assumptions about costs borne by hacked IoT device users the total cost came to $323,973.95.”

Let’s do like UC Berkeley: take results, select only what is convenient and then project it onto the world. Almost 70% of the researched services did not have a data cap. But UC Berkeley does not care; they just project that 30% onto all services to calculate some total cost. Now UC Berkeley can show off with a study. Ladies and gentlemen, here are the total costs.