“Common sense always speaks too late.” (Raymond Chandler)

A new study about the (in)efficacy of anti-virus software in detecting the latest malware threats is a much-needed reminder that staying safe online is more about using your head than finding the right mix or brand of security software.

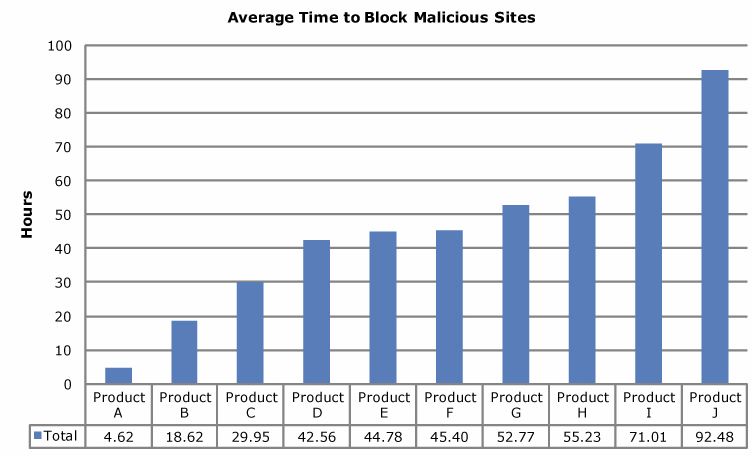

Last week, security software testing firm NSS Labs completed another controversial test of how the major anti-virus products fared in detecting malware pushed by malicious Web sites: Most of the products took an average of more than 45 hours — nearly two days — to detect the latest threats.

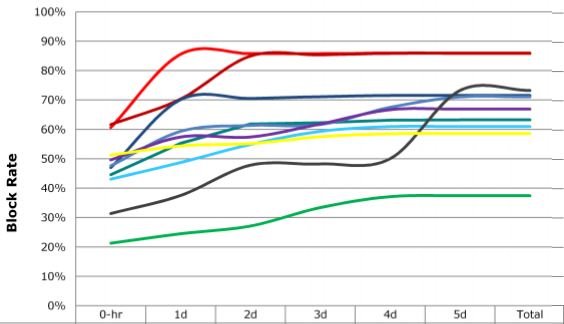

The two graphs below show the performance of the commercial versions of 10 top anti-virus products. NSS permitted the publication of these graphics without the legend showing how to track the performance of each product, in part because they are selling this information, but also because — as NSS President Rick Moy told me — they don’t want to become an advertisement for any one anti-virus company.

That’s fine with me because my feeling is that while products that come out on top in these tests may change from month to month, the basic takeaway for users should not: If you’re depending on your anti-virus product to save you from an ill-advised decision — such as opening an attachment in an e-mail you weren’t expecting, installing random video codecs from third-party sites, or downloading executable files from peer-to-peer file sharing networks — you’re playing Russian Roulette with your computer.

Some in the anti-virus industry have taken issue with NSS’s tests because the company refuses to show whether it is adhering to emerging industry standards for testing security products. The Anti-Malware Testing Standards Organization (AMTSO), a cantankerous coalition of security companies, anti-virus vendors and researchers, has cobbled together a series of best practices designed to set baseline methods for ranking the effectiveness of security software. The guidelines are meant in part to eliminate biases in testing, such as regional differences in anti-virus products and the relative age of the malware threats that they detect.

NSS was a member of the AMTSO until last fall, when the company parted ways with the group. NSS’s Moy said the standards focus on fairness to the anti-virus vendors at the expense of showing how well these products actually perform in real world tests.

“We test at zero hour, and we have a huge funnel where we subject all of the [anti-virus] vendors to the same malicious URLs at the same time,” Moy said. “Generally, the other industry tests are testing days, weeks and months after malware samples have been on the Internet.”

David Harley, an AMTSO board member and director of malware intelligence for NOD32 maker ESET, didn’t quibble with the core findings in the NSS report, but rather with what he called the lack of transparency in NSS’s testing methodology.

“My quarrel with NSS is that they’re trying to quantify that Product A is better than Product B on the basis of an uncertain methodology,” Harley said. “I’m not quarreling with the proposition that the industry misses a lot of malware. That’s incontrovertible, when every day we’re dealing with close to 100,000 new malware samples. In fact, that sort of level of detection that NSS is talking about — 50 to 60 percent right out of the gate — sounds realistic to me.”

For all of their hand-wringing about results from outside testing firms, the anti-virus testing labs are starting to move in the direction of more real-time testing, said Alfred Huger, vice president of engineering at upstart anti-virus firm Immunet.

“People have to understand that anti-virus is more like a seatbelt than an armored car: It might help you in an accident, but it might not,” Huger said. “There are some things you can do to make sure you don’t get into an accident in the first place, and those are the places to focus, because things get dicey real quick when today’s malware gets past the outside defenses and onto the desktop.”

I suppose that any evidence to demonstrate the need for common sense–and the ….(fill in the blank) … of relying on AV protection–should be welcomed.

Blocking malicious websites (or the nasty malware that comes from them) is only one window on that world.

I have used A-V comparatives for years. http://www.av-comparatives.org/

I do not know if their practice and reporting meets anyone’s standards–but I sure like the descriptions that group provides of the testing it does and the many different measures of performance they report.

… But the solution is to make the end point so secure (and keep it so secure) that the black hats give up – because there’s no reliable income stream in it.

Battling malware with spam filters at the end point is ridiculous. Scrambling around hard drives after malware is ridiculous. Make sure the end points aren’t used to propagate spam/malware and make sure the end points are immune to it anyway.

Well written post. So, how do you educate people on the issue of common sense online? Seems people are far too ignorant of security, and the risks they face.

Having more easy-to-use practical tests (and feedback) like the one below at SonicWALL would help. I use this frequently with ignorant friends and relatives.

http://www.sonicwall.com/phishing/

“Common sense is not so common.”

(attributed to Voltaire)

This lament is not a new one; I doubt there will ever be a solution.

‘I doubt there will ever be a solution.’

Of course. But that does not mean we should give up. We’re not allowed to give up. Ever. The attention Bk and others bring to such issues does make a difference.

I always understood “common sense” to mean “sense of the common people”. Ie: uneducated and superstitious.

How well educated are people who drive vehicles on public highways? Industries must always strive to make their products ‘foolproof’. There’s only one mainstream product today that’s close to foolproof and it certainly doesn’t come from Redmond. Don’t worry about educating people in security – educate them instead in what to buy.

Rick that “Was more to the point of the discussion”…knowing what to buy and why we need it has been thrown out for the bottom line….PROFIT….it’s obvious, but you said it…..

Thanx

Saying that we should stop educating people about how to protect themselves and instead telling them what to buy is like saying that we don’t need drivers education, we should just teach them to buy Toyotas because they have a good crash safety rating. Hmm – didn’t Toyota recently have some recalls because of problems that CAUSED crashes? Even the best built car will still require a driver educated in the rules of the road and how to operate it properly. Same with computers. Past performance is NOT a guarantee of future gains or losses.

“Saying that we should stop educating people about how to protect themselves”

Education is fine. Who can possibly be against education? But no matter how much education users get, it will still be impossible to avoid every risky online action. Even when the correct response is known, a human will eventually make a mistake. When even a single mistake can allow malware to infect the system, we have a system problem, not a human problem. Education cannot prevent human error.

“didn’t Toyota recently have some recalls because of problems that CAUSED crashes?”

Yet we do not see computer recalls, even though the design flaws which allow infection have been known for years, or in some cases decades. Where are the lawsuits against the hardware, software and system manufacturers who produce a product they know cannot resist malware? Why should users be at fault for a computer being demonstrably unable to protect itself in normal online use?

“Even the best built car will still require a driver educated in the rules of the road and how to operate it properly.”

If car safety required operator perfection, there would soon be many fewer drivers. And unless we really want to train and pay for professionalism in computer operation, the solution must be in the equipment, not the people.

If we just want equipment which is very difficult to invade and infect, we can do that now: Boot a LiveCD and run Linux online. Ideally, without a hard drive. But no! That is too hard! That is too complex! No, we want the old insecure system because…we just do! But if we think what we have is good enough, it seems odd to not hold the manufacturers responsible when that goes wrong.

It is possible to design and build more conventional computer systems to resist almost all infection. Flaws in older equipment are well known, yet new systems still have the same old problems. When do we start holding the manufacturers accountable?

Actually to design a system impervious to attack is to think of ALL possible ways to attack a system first. Any programmer worth his or her salt will tell you that while they might be able to think of common ways that their system might be attacked, they can’t think of the way that hasn’t been invented yet. Linux is a good operating system but it is NOT impervious. It’s just unattacked because there isn’t enough usage mass to make it a primary target. If Microsoft were to disappear tomorrow and Linux (or Mac OS) took its place, Linux (or Mac OS, for that matter) would be the one in the hot seat.

@prairie_sailor:

“Actually to design a system impervious to attack is to think of ALL possible ways to attack a system first.”

But this is what I actually wrote:

“It is possible to design and build more conventional computer systems to resist almost all infection.”

Infection is not attack. Infection is far more serious than even a successful attack, because infection represents a hidden bot running session after session until the OS is re-installed. A mere attack by itself ends when the session ends if it cannot infect.

I feel that this is an imperfect analogy. We held Toyota accountable because their equipment was causing accidents. The hardware and software on my PC is not causing the infection, whether or not it is perfect at preventing it. The fraudster on the other end of the malware is the one causing the infection; it is my behavior allowing it.

Holding the hardware and software companies accountable for malware attacks is more like holding car companies responsible for drivers making mistakes behind the wheel. If you aren’t paying attention when driving, we don’t expect our automobiles to take evasive action for us. We get into our accident, and then rely on insurance companies to help us with the financial loss and to have the automobile repaired.

I think it’s fair to expect the industry to make advances in “Online Safety” much the same way car companies do, but to what extent? What should be considered reasonable?

@Ben:

“We held Toyota accountable because their equipment was causing accidents. The hardware and software on my PC is not causing the infection”

One could argue that Toyota cars are not causing accidents, it is just the trees and other cars that keep getting in the way. But we still hold Toyota responsible when a significant number of their cars get into trouble.

All products are designed to work in a particular environment: cars have roads and traffic jams, computers have network broadband and malware. Perhaps the better question is whether a product is fit for the intended purpose. Is our computer equipment and software really fit for the purpose of online banking? If so, then surely the manufacturers would be proud to guarantee it.

Whining about poor design is not our only option: Currently, I recommend booting from a LiveDVD for all online use. No, it is not optimal, but if we wait for something to fix the problems we will have a long wait.

“If you aren’t paying attention when driving, we don’t expect our automobiles to take evasive action for us.”

But human sight and brains are arguably suitable for controlling a moving vehicle. We can see what is around us, things move relatively slowly under a common physics, and we make the same sort of physical choices we make all day, every day. Almost all humans have extensive experience with movement, yet still occasionally get into trouble.

In contrast, when “driving” a computer, threats are generally invisible, unclear, and consequences occur at electronic speeds. Blaming the user for not performing well in this environment seems a bit over the top.

Online, all too frequently there are no danger signs. Yes, some things can be done to greatly improve security. No, there is no complete list, and not all choices are straightforward. If they were, the computer would be doing it already.

Computer education supporters would have users believe that if only users would learn the online danger signs, they would be safe, but that is false. That myth prevents us from moving along toward real security, because it assigns blame to users who cannot enforce safety, instead of the manufacturers who can.

If a car, when sitting in its normal environment, suddenly became undetectably dangerous to operate, would we blame the driver?

Do those who pay to access this information also receive the details about how the tests were conducted? That would be a critical part of the assessment. That red line showing nearly 90% block of bad stuff by end of Day One is certainly compelling. I’ll laugh to tears if it turns out to be Microsoft Security Essentials.

Also, does anyone else think it’s weird in the first chart that some of the lines dip back down?

it is weird that the lines dip back down. it’s either drawn free-hand (unlikely) or the person plotting the graph used software that tried to fill in blanks by making it fit some polynomial curve – which is typical of line graphs.

i’ve seen that behaviour before. using a continuous data visualization to represent discrete data underscores a failure to understand the distinction between the two and how that can result in misleading reports.
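The dipping effect described above is easy to reproduce. The following is a minimal sketch in Python, assuming invented cumulative block rates (these are not NSS figures) and a plain Lagrange polynomial drawn through them. Which charting software or fitting method produced the NSS graphs is not known, so this only illustrates the general effect of drawing a smooth curve through discrete measurements.

```python
def lagrange_interpolate(points, x):
    """Evaluate the unique polynomial passing through `points` at x."""
    total = 0.0
    for i, (xi, yi) in enumerate(points):
        term = yi
        for j, (xj, _) in enumerate(points):
            if i != j:
                term *= (x - xj) / (xi - xj)
        total += term
    return total


# Hypothetical samples: (hours elapsed, cumulative percent of threats blocked).
# Invented for illustration; a cumulative rate never actually decreases.
samples = [(0, 50.0), (24, 50.0), (48, 60.0)]

# The curve passes through every sample, yet sags below 50 between the
# first two of them, suggesting a drop that the data does not contain.
print(lagrange_interpolate(samples, 12))  # 48.75
```

Plotting only the measured points, or joining them with straight segments or steps, avoids implying values that were never observed.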

‘Do those who pay to access this information also receive the details about how the tests were conducted?’

If they didn’t then they’d hardly pay a second time.

You know, this was bound to happen – Rogue AV Testers.

While Rogue AV Products make money through a false sense of security, Rogue AV Testers make money through a false sense of insecurity.

As a user, you have to pay NSS to see if your AV is any good. Obviously, the profits are maxed when all AVs look bad.

The Rogue AV Tester model will no doubt flourish, just like RogueAV’s have. It’s flawless.

I don’t think you have to pay them anything – unless you’re an AV vendor and already making money. If you’re just a user then you already know all you need to know: AV does not work, period, end of story, the fat lady’s left the building.

Name ONE scanning program which, in addition to scanning hdd’s, cdroms, usb thumb drives, and so forth, also provides the following protections:

* AGP/PCI readable/writable firmware, scanning, detection and disinfection of malware (includes graphics cards, sound cards, other cards including vanity usb devices for no useful purpose, includes onboard network and graphics cards)

* BIOS scanning, detection and disinfection of malware

* Network card scanning, detection and disinfection of malware

One shouldn’t be boxed into booting into a livecd and navigating through a text based GUI to manually dump their BIOS information and checksum it themselves. Oh, your BIOS offers a write protection feature? Have you checksummed your BIOS lately? Oh, you use a livecd from antimalware company A,B,C, but do *any* of them scan the devices I’ve mentioned?
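For readers wondering what "checksumming your BIOS" amounts to in practice, here is a minimal sketch in Python. It assumes the firmware has already been dumped to a file by some separate tool (the file name `bios_dump.bin` in the usage note is hypothetical) and that a known-good digest was recorded earlier while the machine was trusted. It does nothing about the harder problem raised above: a dump taken on an already compromised system may not be trustworthy.

```python
import hashlib


def sha256_of_file(path, chunk_size=65536):
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def firmware_unchanged(dump_path, baseline_hex):
    """True if the dump still hashes to the baseline recorded earlier."""
    return sha256_of_file(dump_path) == baseline_hex.lower()
```

A call such as `firmware_unchanged("bios_dump.bin", recorded_digest)` would then report whether the dump matches the recorded baseline; both names are placeholders.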

How do you audit your AGP/PCI cards for malware? When is the last time you recall an antimalware product giving you the low down on the state of your firmware for CD and DVD drives, graphics cards and network cards? Much of the bad malware attacks these vectors and survives hdd/usb drive formats, reformats and zeroing, and what can you do about it?

If you’re concerned about this, Google:

PCI rootkit(s) + trojan(s)

Network card rootkit(s) + trojan(s)

BIOS rootkit(s) + trojan(s)

For proprietary antimalware scanners, are you using Wireshark and dumping what is sent between your system and theirs? Many contain rabid update checks and upgrades, but are they doing more? Are you forced into sending in pseudo-anonymous information by default unless you opt out (does the program send back information upon installation and init before you’ve had the chance to uncheck the option to opt-out?), or, as one unmentioned antivirus scanner offers: two choices but no opt-out solution, your hand is forced, your program will report back various info whether you like it or not, unless you wall it off from the network, but is your operating system proprietary and phoning home? Is the software and firmware for your PCI cards proprietary, too?

Once you’ve educated yourself on the dangers of AGP/PCI, network card and BIOS rootkits, survey the e-landscape of the internet for ONE product which offers protection of these devices, either in some form of sandboxing or other freeze/halt in infection, or the simple feature of scanning these devices for malware. Even if you can read several languages in order to try the many antimalware scanners from every country, I doubt you will discover one which offers any protection of the real threat: your *other* hardware devices.

Well, I’m waiting… Name one product! Good luck and “Stay thirsty my friends.”

Well, at least MBAM has a flash scanner which, my research shows, is supposed to be able to scan flash drives and RAM, for example. Whether it checks the BIOS and/or PCI memory, I don’t know.

As an addendum: most experts report that simply disconnecting the HDD and re-flashing all on-board hard memory will blast any flash bugs to oblivion (using a write-protected USB flash drive or floppy). However, you then have to put the hard drive in a dispensable PC for a good low level format and government wipe before reconnecting it to the original PC.

I read quite often that folks get permanent infections on the HDD, and even using DBAN, BART PE, or better, can’t get rid of the bug. I assume this is because the malware is using the check disk feature that flags sections of the disk as damaged and un-writable, so the drive controller will never overwrite them again. If one had a flashable drive controller, I wonder if this would reset this condition. I’ve never read an example of this, however.

Best practice is to look at the wiped drive with a Linux Live CD, and if you see files left, just throw it away! LimeWire is your enemy! Even MacBooks aren’t immune to that.

“I read quite often that folks get permanent infections on the HDD, and even using DBAN, BART PE, or better, can’t get rid of the bug.”

Do you have a link that you can share in relation to the “permanent infections” that can survive reformatting and wiping? I did a few Google searches, but I couldn’t find anything along that line other than MBR and boot sector infections.

Good post. While NSS Labs’ methodology and business model might be subject to questioning, there is no doubt in my mind about:

– the serious shortcomings of AV and AM for fighting today’s viruses and malware;

– the absolute necessity of having the user as part of the protection equation;

– the real difficulty of getting him (the user) there (the equation).

Well put Saad,

But that being said, where does (UserA) go to get the most current and relevant information regarding AM/AV products?

This is just a basic query from UserA….

Good article (as always), but you can *buy* anti-virus. Common sense is not common nor easily acquired.

As far as the testing, I have much more faith in NSS Labs’ people and methodologies than in most other labs. While much of their research is only available for purchase, a review of their free reports shows an attention to detail and awareness of the threat landscape others miss.

I don’t have much faith in NSS’s test: in this CORPORATE solutions study, they managed to test a RETAIL product…

Educating users is a difficult, long-term and never-ending task… and it requires managing to allocate a budget for it! To me, AV solutions should have some protections against bad decisions from non-techie users, along with security rules in the network.

I quote /usr/games/fortune.

‘When you’re up to your hips in alligators, you forget the original project was to drain the swamp’

You’re killing off the AV industry and the platform they can’t protect, Bk!

I’m hoping we can move past the AntiVirus model (and its associated logical fallacy of “enumerating badness”) and look at better solutions such as Application Whitelisting instead (i.e. a security model that prohibits any and all executable code from running on a system unless it is explicitly marked as “allowed”).
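The default-deny idea in the parenthetical above can be sketched in a few lines. This is a minimal illustration in Python, assuming programs are identified by the SHA-256 digest of their contents and that an administrator maintains the list; `ALLOWED_DIGESTS` and `may_execute` are hypothetical names, and real whitelisting products hook into the operating system rather than checking byte strings.

```python
import hashlib

# Hypothetical allowlist: digests of programs an administrator has approved.
ALLOWED_DIGESTS = {
    hashlib.sha256(b"approved program bytes").hexdigest(),
}


def may_execute(program_bytes, allowed=ALLOWED_DIGESTS):
    """Default deny: permit only code whose digest is explicitly listed."""
    return hashlib.sha256(program_bytes).hexdigest() in allowed


print(may_execute(b"approved program bytes"))  # True
print(may_execute(b"anything else"))           # False
```

Nothing needs to be known about the rejected program for it to be refused, which is the contrast with a signature-based scanner.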

@eCurmudgeon

“and its associated logical fallacy of “enumerating badness””

Thanks, I like that a lot. It is also implicit in the NoPrivacyOnline model too, I think – how many bits do you chop off an IP address before it’s anonymous, for example.

@Gannon: The notion of “enumerating badness” came from Marcus Ranum, whose classic article, “The Six Dumbest Ideas in Computer Security,” should be a mandatory read:

http://www.ranum.com/security/computer_security/editorials/dumb/index.html

I love the article:

http://www.ranum.com/security/computer_security/editorials/dumb/index.html

Every year or so I come across it and am always impressed.

The Internet security system we have has never worked, and there is no indication that doing the same things even harder ever will. No combination of firewalls and scanners can keep us safe. As long as a single user error can cause a semi-permanent bot infection we cannot find, no amount of education will be enough.

Our computer equipment has serious security flaws, some of which cannot be avoided. It is almost as though somebody has computer insecurity as their goal. That would not be new:

http://download.coresecurity.com/corporate/attachments/Slides-Deactivate-the-Rootkit-ASacco-AOrtega.pdf

Nevertheless, we *can* avoid the vast majority of online problems by booting a free Linux LiveCD on our existing equipment and using Firefox with security add-ons. We can do this on our own, in a few days, without waiting for a bank or brokerage or manufacturer or government to respond. We can use Linux online only, and then restart back into our normal system for off-line use.

Is booting a LiveCD too geeky for you? Is it too complicated? Do you prefer to wait for perfection? Fine. Do nothing. Wait.

Thanks, again. Just finding another Richard Feynman Fanboy was enough to make my weekend, but when your lips want to say ‘Yes, but’ and your brain says ‘shut up and read’ it’s a sure sign of a must read.

I wonder how many virtual trees were senselessly slaughtered in the past few days over the wrong (“default permit”) question: If one million people, out of 4.5 Billion on Earth buy an iPhone 4, how many hold them incorrectly ?

Common sense is a product of our experience and environment. Advertising is designed to alter our perceptions because we’re too busy to do our own critical thinking or research.

Every day I teach my customers how their belief in the AV industry’s advertising is misplaced and has allowed hackers to gain access to their computers.

Hackers have picked up on this and now we have rogue security apps. What better way to infect a computer – create a problem, then throw a “solution” out that exploits the user’s beliefs in the “solution.”

The AV companies have a conflict of interest here – preventative practices are free (NoScript, CCleaner, 3rd party PDF readers, etc.) and the only way to get even close to zero-day protection.

But with no marketing $$, preventative utilities are not on the user’s radar and users are left believing that software can stop these guys….WRONG!

@Gannon & @eCurmudgeon

marcus ranum is a smart guy, but he’s not infallible and on the matter of enumerating badness/goodness he had it wrong. data from whitelisting vendor bit9 shows goodness outnumbers badness by several orders of magnitude and is being created at a faster rate. in truth it’s difficult to imagine how the minority of programmers who are malicious could outproduce the majority who are not.

that’s not to say whitelisting isn’t a good idea, it is and i use it myself, but just because it doesn’t have the same tactical disadvantages as blacklists doesn’t mean it doesn’t have its own. chief among them being knowing what’s safe enough to add to the list (computer environments change over time) and recognizing what is an application in the first place (a more difficult problem than most people realize).

trading one rudimentary strategy for another isn’t going to help anyone in the long run. black and white are complementary and people should combine them.
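One possible reading of "combine them" is a three-way decision instead of a two-way one. The sketch below is in Python and is only an assumption about how the two lists might be layered: the `classify` function, the rule that a blacklist hit overrides a whitelist hit, and the placeholder identifiers are all hypothetical.

```python
def classify(digest, known_good, known_bad):
    """Combine both lists: block known bad, allow known good, flag the rest."""
    if digest in known_bad:
        return "block"
    if digest in known_good:
        return "allow"
    return "unknown"


# Placeholder identifiers standing in for real file digests.
known_good = {"digest-of-word-processor"}
known_bad = {"digest-of-rogue-av"}

for item in ("digest-of-word-processor", "digest-of-rogue-av", "digest-of-new-download"):
    print(item, "->", classify(item, known_good, known_bad))
```

The "unknown" bucket is where the difficulties described above remain: someone still has to decide whether a new item is safe enough to add to either list.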

Couldn’t agree more Kurt. Expert analysis is, well, analysis. Expert comparison is Journalism and an altogether different infallibility standard 🙂

I thought the idea was the goodness expected on a *single* computer was finite and tiny compared to the badness to which it could be exposed?

it’s true that the number of legitimate applications is much more manageable if you limit the scope to a single system, but consider what that means in practice.

someone has to generate the list of safe software that the whitelisting software uses. you either have a central body doing it (in which case they can’t limit their scope to a single system) or you leave it to the user of that single system (who is almost certainly worse at keeping badness off the list than a blacklist would be).

determining what’s safe enough to go on the whitelist is basically the inverse of determining what’s malware. if the user could do either of those things reliably s/he wouldn’t need software to help.

Whatever security software we create to secure PC and Internet, how can we protect ourselves from evil minds?

Even good websites are hacked to spread malware. Even if a system is up to date, there will be an unknown vulnerability. (Maybe we can create an organization to encourage hackers to find vulnerabilities, and we can award them a million dollars, like we spend to fight terrorism!)

I agree with most of the comments here. But as far as this test goes I think it’s also a way to make money for the company. There’s a thread over at the security forum at dslreports that shows many security wonks agree with that. Heck, I wouldn’t pay for it. Now I do use an AV, and I do surf as a limited user. I also don’t click on every link I see.

You are certainly free to purchase whatever services you choose and to surf the internet with whatever level of protection you find comfortable. Others may not agree with your choices.

Nonetheless, why are you apparently suggesting that the company should provide its time, labour, and equipment to its subscribers without charge, motivated solely by altruism?

Now we come to the point of it – an infection is the very specific action of taking control of an executable file and making the file do something it was not designed to do. A Trojan does not infect, it tricks the system into allowing it to do its dirty work by posing as something else – an ad for example that downloads malicious code is a trojan because it doesn’t take over any files, it simply inserts itself as another piece of software. That it does so by making another piece of software do something it wasn’t designed to do is the attack but not an infection because once the attack is over the software attacked goes back to being its normal self. Unless you’re God, Allah, Buddha, or Jupiter, you’ll never be able to think of every possible means of subverting the code you write into doing something it isn’t supposed to. ALL software contains flaws – and I don’t believe any programmer intends for his software to be flawed. The only difference between good code and a security flaw is KNOWLEDGE of how it is flawed. To say that there is some magical piece of flawless software out there is just flawed thinking. – Unless you’re God.

“Now we come to the point of it – an infection is the very specific action of taking control of an executable file and making the file do something it was not designed to do.”

But that is not quite right, is it? What about the Microsoft Windows registry? What about BIOS infections? I suggest that infection is better seen as whatever causes a malware to run on every session or boot. The infection itself is some form of stored code which will execute in each session. Rootkit technology will generally make malware files and processes invisible and beyond user control.

“A Trojan does not infect, it tricks the system into allowing it to do its dirty work by posing as something else – an ad for example that downloads malicious code is a trojan because it doesn’t take over any files, it simply inserts itself as another piece of software. ”

Why are we analyzing Trojans? In any case, how is it useful to distinguish between a Trojan which infects, and a Trojan which carries a payload which infects? In fact, there may be a carrier, a transient payload and the infection the payload causes. The payload may be code in a Microsoft Word document, or code in an .PDF file or code in a video file, none of which is just another piece of software. The infection can be a modification of OS files, or a device driver, or a file of almost any type whatsoever, which Microsoft Windows will see as executable.

“Unless you’re God, Allah, Buddha, or Jupiter, you’ll never be able to think of every possible means of subverting the code you write into doing something it isn’t supposed to.”

You describe what is basically the “Penetrate and Patch” philosophy, from:

http://www.ranum.com/security/computer_security/editorials/dumb/index.html

That is the philosophy which has gotten us where we are today. It does not work. If the goal is computer security, penetrate and patch is not the way to get there. Despite heroic effort, penetrate and patch will never find and correct all the faults in Microsoft Windows. After years of professional patching, the number of errors being patched is not trailing off.

“To say that there is some magical piece of flawless software out there is just flawed thinking.”

I never said that. Look it up.

What I actually wrote was:

“It is possible to design and build more conventional computer systems to resist almost all infection.” Which is transparently true, by way of working example.

In contrast to the virtual impossibility of finding every possible attack flaw, infection makes specific demands: An infection requires writable storage to hold the malware through power-down to the next boot-up or session. To prevent infection it is only necessary to eliminate such storage. Doing that completely should give an absolute guarantee of success in foiling infection.

In practice, a LiveDVD boot generally delivers a non-infect environment on current equipment. I use a Puppy Linux DVD online, generally with no hard drive at all. It works much better than one might expect. I am using it now. Articles on my pages give some background and start-up help.

In theory and rare practice, various hardware issues make infection possible even without a hard drive. Worries include the BIOS flash, the video card flash, the flashable DVD writer and the flash on PCI cards, etc. People claim to have actually encountered this sort of thing in the wild. Fixing a hardware infection can be extremely difficult, because even one remaining point of infection can quickly re-infect all others. Putting a component in another machine to re-flash it can infect that machine. There really is no excuse for this.

Current computer designs aid and abet infection by providing just what the malware needs, typically an easily-infected hard drive which stores and boots the OS. Fixes are not just around the corner. To avoid malware infection, learn to boot and use a LiveCD when online.

Firefox died while trying to upgrade from 3.6.3 to 3.6.4 (3.6.6 recently available) on my puppy. Upgraded fine on Windows but am stuck on 3.6.3 on puppy so I’m back to banking on Windows though I’ve yet to try SeaMonkey or puppy’s own browser. Can’t find a 3.6.6 pet package. Downloaded the linux version of Firefox but that’s a tar.bz2 file that I can’t figure out how to convert into a pet package. Google’s no help and it’s been frustrating. I think puppy’s author (Barry Kauler?) has done a heroic job but puppy isn’t for everyone especially mom-and-pop business owners who could benefit from it most. Who will help them with puppy problems when (not should) things go wrong?

First, I am not an expert on Puppy, even though I use it all the time (and am using it now).

I have had various irritating issues with Puppy, but finally reached the understanding which often happens with software, of avoiding the dangerous features. I would guess that fully 98 percent of my Puppy time consists of working in Firefox (like now).

Many of the problems I had at first were tied to the optical writer and type of disk I used. I tried the recommended CD-R’s for a while, with occasional disasters, and then various types of disk. In the end, I found DVD+RW’s to be much more reliable, at least in my systems, and, of course, re-usable as well. DVD+RW’s probably do require a later DVD writer design or firmware update than DVD-R’s, but my 3-year-old laptop uses them fine.

In my case, I have found it important to avoid the semiautomatic end-of-session DVD update, even if update is needed. Something about that seems to not handle my writers, which then damages the data on the disk. The work-around is to just say No at the end of each session, and use the Save button on the desktop for saving changes (which then causes an error on boot, which we can ignore). It is best to save a session soon after startup anyway. But the Save button is not available on the first session, so that just has to work and it is good not to get in too deep before trying it. I do try to wait for the DVD writer to find the disk and settle down before writing.

“Firefox died while trying to upgrade from 3.6.3 to 3.6.4 (3.6.6 recently available) on my puppy.”

OK, that is a disaster, but you can start over from scratch using the original .iso. You can download it into Temp and then burn a new start from Puppy. Also download the latest Firefox .pet you can find, and start there and upgrade. After an hour, maybe two, you should be back in charge.

Part of the disaster feeling probably is a loss of hard-won configuration. Personally, I make a text list of normally-open tabs using the Copy All URL’s add-on. With Edit / Copy All Url’s / copy, the text is formed on the clipboard, which I save in a LastPass Secure Note. Copying that text from the note and pasting it with Edit / Copy All URL’s / Paste will open and recover the tabs. This is lightweight bookmarking for recovery purposes. For real bookmarking I use Google bookmarks.

The larger issue may be that of collecting the lost add-ons, which can be a long list. There are tools for this: the add-on FEBE comes to mind but I do not like it. Some of the sync add-ons may do that also, and might be a good idea if not too intrusive. Currently, I just do it by hand. I first save the list of add-ons by using the Extension List Dumper add-on and saving the text as another LastPass Secure Note. That gives me the list of add-ons I need to recover by hand. That can take an hour or so.

“Upgraded fine on Windows but am stuck on 3.6.3 on puppy so I’m back to banking on Windows though I’ve yet to try SeaMonkey or puppy’s own browser.”

Start over. Set it up again. I know Firefox updating works in general, because it has worked for me many times. But Puppy is obviously not nearly commercial quality software, and users may have some trouble until they see what works. I wish there were a better alternative to recommend, but the ability to update the boot DVD is both extremely important and completely unique, as far as I know.

One advantage of using the Firefox platform for system-level activities (like configure and recover) is that most add-ons work both on Microsoft Windows and Puppy. That can make transitioning to Puppy much easier.

Hope that helps!

Re. “start over from scratch using the original .iso,” done that and FF still refuses to update to 3.6.6. What happens is verification of the incremental update fails, then it downloads the full update but it hangs up and never quits downloading even when network traffic goes to zero (Blinky stops blinking). It simply doesn’t know the download’s all done. Maybe FF’s update script is broken but this is the sort of problem that is painful with puppy. Puppy does not include FF extensions in burning new .isos so extensions have to be re-installed. Bookmarks and NoScript and RequestPolicy settings are no sweat, I have backups for those, but others might not. If you didn’t back them up, it’s a more painful recovery.

I was forced to save to USB stick because puppy won’t save to CD, and “just say No at the end of each session” is not available when saving to USB. Puppy never gives you any choice but automatically performs the USB save. Puppy also refuses to save to the same USB stick from a LiveCD-alone bootup if it already has a .sfs file on it. What happens is you go through all the steps for saving to the stick and at the very end a very brief “Session not saved” flashes on the screen just before power goes off. You look at the stick and you see the new .sfs file and you think puppy lied and it’s been saved, and when you try booting from the file, the password you’d given it will fail. It wasn’t saved properly.

If you resize a file system window and go up or down a directory level, the window resizes itself back to the original size — which you’d had resized in the first place because you *couldn’t* see what you’d wanted to see in the window. Ordinarily, not a huge problem but when coupled with its habit of opening windows that are partially off-screen and you’re digging through the files trying to find what lives where, it gets painful.

PupDial also retains ISP login credentials after you log off which, if you lose that CD, someone can use to log in to your account. Spin up the CD from any PC and someone’s good to go until you know to change passwords, unless someone changes it first! I try to manually delete credentials before exiting PupDial but don’t always remember (the human factor in security failures).

Yes, when puppy works, I spend 99% of my time in FF happily surfing the net but when things go wrong, it’s 100% frustration because I’m not linux literate. Each of the above is individually trivial and fixable but they all add up to not-ready-for-prime-time for the average mom-and-pop business owner. With all my grumbling, I’m still going to try to get back to some flavor of linux because the trojans are out there.

Re. “I wish there were a better alternative to recommend,” I think I’m going to give up puppy for now and try browserlinux which is a 4.3.1 puplet built around FF. Since it’s browser-centered, I think they’ll always have the latest FF built in (they already have a FF3.6.6 .iso released) which saves me the grief of updating FF should update fail in the future. I like it because it’s only 78MB and I never use much of what’s in puppy anyway. Since FF won’t update for me on puppy, the best I can do is get back to puppy+FF3.6.3 and be stuck there forever. With browserlinux, I get to puppy+FF3.6.6 right off the bat with a bit more work. Another option is xpud puplet but they’re currently at FF3.5.5 and it doesn’t look like they keep up with FF releases.

Wonder if BrianK can talk to one of these guys about creating a superlight .iso for banking. All you’d need is the latest FF, a screen grabber to snap bank transactions, simple text editor, pdf reader perhaps, and absolutely no installs. Fix the save problems and it’d be a coup!

You’re missing the point – Live CD’s aren’t impervious – just un-attacked YET. Anything whose operating parameters can be modified can be attacked, and often very successfully. While the code contained on a Live CD might not be able to be written to (provided it’s not an RW), the code actually operating in memory can.

This exact thing happened to Cisco with their 675 and 678 DSL routers a few years back. The CodeRed and NIMDA worms attacked those routers very successfully. I worked technical support at an ISP that used those routers at the time. Initially we didn’t understand what was happening. We would go through the process of rebooting the routers and the problem would be solved – for about 20 minutes, and then the customers would be back on the support line with the same problems. We’d try re-configuring the routers and, same thing, 20 minutes later they’d be back on the support line. The worms couldn’t attach themselves to the firmware itself – but they could attack the operating memory. The ISP’s engineers developed some workarounds after about a week that mostly helped, but the problems were not completely solved until Cisco identified and patched the firmware itself.

Common sense is not the answer – and neither is anti-virus software. The real problem is that we are relying on third party products to protect the operating system and detect compromise. We all know that anti-malware is only good against known threats, so is anyone surprised that it doesn’t work against unknown threats? I don’t think so.

Computers will never be secure until the hardware, operating system and applications are inherently secure. If you don’t think it’s possible, it’s because you’ve been brainwashed into believing it’s not possible.

There is something fundamentally wrong with current security paradigms and as a result, computing will never be secure. Everything needs to be re-engineered from scratch. Believe it.

@Mister Reiner:

“We all know that anti-malware is only good against known threats”

actually we know known-malware scanners are only good against known malware threats. anti-malware is an umbrella term that covers all techniques, some of which work against unknown threats.

“Computers will never be secure until the hardware, operating system and applications are inherently secure. If you don’t think it’s possible, it’s because you’ve been brainwashed into believing it’s not possible.”

no brainwashing here. study cohen’s academic work. malware is inherent to the general purpose computing paradigm. if you want to reduce computing to the level of non-programmable calculators (there’s been malware for the programmable kind) then you can eliminate malware – otherwise we will continue to have malware.

If people continue to believe that computing can never be secure, then it will never be secure. Some of the world’s toughest medical and technical challenges were overcome by those who believed that the impossible was possible. As the saying goes, “Can’t never could.”

Cheers

A heads-up: Firefox released another update.

Please modify the title to a more accurate one, from “Anti-virus is a Poor Substitute for Common Sense” to “Anti-virus can be a Fine Defensive Layer; Opaque Testing Methods and Misleading Result Graphics are not Productive for Anyone, but may be Profitable, Cheap Gimmickry for Salesmen”.

Maybe the title of the article could be modified to a more accurate one, from “Anti-virus is a Poor Substitute for Common Sense” to “Anti-virus can be a Fine Defensive Layer; Opaque Testing Methods and Misleading Result Graphics are not Productive for Anyone, but may be Profitable, Cheap Gimmickry for Salesmen”.

This really isn’t new. For years, people have known that A/V is not enough to protect systems. Corporations have been taking a bit longer to catch up. There are still many enterprises that rely solely on A/V for endpoint malware protection – which is foolish at best and possibly even negligent. Because the press has picked up on the rash of intellectual property theft, there is more awareness of the malware problem, which in turn reveals that A/V is not enough.

Let me suggest something that might challenge some people – we can’t keep the bad guys out. They will succeed. Much like an immune system, we must let them in, learn about their methods, and then develop a set of indicators that can detect the intrusion. These indicators should be as close to the malware developer as possible, so as to ensure long-term efficacy of the fingerprint. This increases the cost to the bad guy to stay undetected – it’s economics. When he comes back next week with a variant of the same, you have early detection – which translates to loss prevention in the board room.
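To make the fingerprint idea concrete, here is a minimal Python sketch. Everything in it is assumed for illustration: the indicator names, the byte patterns and the two sample payloads are hypothetical, and a plain substring match stands in for whatever matching a real detection tool would use. It only shows why an indicator tied to the author's habits (a hard-coded host name, say) can outlive a repack that changes the whole-file hash.

```python
import hashlib

# Hypothetical indicators: traits an author tends to reuse across builds.
INDICATORS = {
    "c2-hostname": b"update.example.invalid",
    "mutex-name": b"Global\\demo_mutex_7f3a",
}


def matching_indicators(sample, indicators=INDICATORS):
    """Return the sorted names of indicators whose bytes occur in sample."""
    return sorted(name for name, pattern in indicators.items() if pattern in sample)


# Two made-up payloads: the "variant" is repacked but keeps the same host name.
original = b"\x90\x90 connect update.example.invalid \x00 Global\\demo_mutex_7f3a"
variant = b"\xcc\xcc\xcc connect update.example.invalid \x00 repacked"

# A whole-file hash changes with any repack, so it says nothing about the variant.
print(hashlib.sha256(original).hexdigest() == hashlib.sha256(variant).hexdigest())  # False

print(matching_indicators(original))  # ['c2-hostname', 'mutex-name']
print(matching_indicators(variant))   # ['c2-hostname']
```

The variant drops one indicator but still trips the other, which is the economic point: the author has to change more than the packaging to stay undetected.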

Opinions on using “Sandboxie” anyone? We do in our home, and have so far been untouched by anything malicious as far as we know. We also use Avast anti-virus and filseclab firewall. We know we are not bulletproof but are not hurting either :)

Sandbox protection is a good idea if it is properly configured. (There have been some problems with it on Windows 7, apparently.)

However, the problem with every protection program, whether it is a firewall, AV, Sandboxie, or anything else, is that you have no way of knowing whether you have sufficient security unless either the program catches something and logs the threat or notifies you, or something eludes your security and you find your machine compromised. If your computer(s) are never attacked, you will never know.

I very much agree with two of your main points. Firstly that the commonsense of the user is important to security. PwC produced a new report earlier this month titled: “Turning your people into your first line of defence”, suggesting that users are an under-utilised security device. And secondly that the Anti Malware Testing Standards Organization requires serious scrutiny. I have an article ( http://bit.ly/dAqbpq ) suggesting that in its current form it should be disbanded. With no input from unaligned users it can never be considered unbiased.

Scroll up and click to read the user: minime’s post. It was neg repped down to hide it from view but it contains, most certainly, the most important information within this comment page. I’m sure most, if not all of the neg rep votes were from makers or stockholders of commercial antivirus companies.

There’s a deep secret they don’t want you to know: rootkits surviving hard disk drive formats and wipes, on firmware in your AGP and PCI cards and your BIOS. Proprietary firmware being manipulated with rootkits and trojans on connected devices overlooked by most if not all antimalware scanners.

When the Sony BMG rootkit first appeared, it was a rootkit scanner, later snatched up by Microsoft, that first detected the infestation; no antivirus or other antimalware scanner could pick this malware up.

Call me a conspiracy theorist as much as you like, and down rep this post to save your conscience from reading this, but I believe there is a vast collusion among antimalware companies to hide the discovery of serious malware infesting the AGP and PCI devices connected to your computer as well as the BIOS itself.

If you search long and hard enough on the web, you’ll discover many individuals puzzled over malware which persists on their computers and people labelling them as crazy or not knowing enough about computers and mocking these individuals.

One site to check is:

https://tagmeme.com/subhack/

Our computers’ components are wide open to attack from malware, most of them being black-box hardware and firmware to start with.

Research the following with Google:

“PCI rootkit”

“PCI rootkits”

“BIOS rootkits”

“BIOS rootkit”

“persistent rootkits”

“network card rootkits”

“network card rootkit”

The above search recommendations do not include, but should include, router rootkits. If you hang around enough black hat discussion forums and read posts and check code, you will come across people discussing how they run attacks against routers, many using simple exploits followed by breaking down the firmware for the router and/or replacing it.

Many network cards on PCs are widely exploitable and capable of much more than you would expect.

Those “in the know” will either down rep this post, attack it to some degree (use the “tinfoil”, “conspiracy”, or “crazy” label), or downplay the issue as nothing to be concerned about.

If you’re not concerned, you should be. Positive rep informative posts like minime’s so the knowledge is not lost because of the few who want this info suppressed.

There is not a single product, free or commercial which scans AGP, PCI cards such as your sound card and graphics card, BIOS, and network cards for these dangerous rootkits. I believe the companies want it this way, so the real malware persists on your network, for whatever dark reason they have for the exploitation. It is a fact many antimalware scanners *whitelist* certain malware, too, for a number of reasons.

Further homework:

* Shielding all cables on your system to prevent against leaks

* Switching from CRT to flatscreen monitors and shielding all cables to prevent against TEMPEST attacks

* Taking readings around your computer to spot and prevent leakage

Beware those who would continue to down rep these informative posts and keep you in the dark.

Once a system’s devices are exploited, no number of formats or wipes will aid you; your system will continue to deploy the infection.

As minime posted, we should not be forced to boot into a livecd like ultimate boot cd and navigate through an ancient text interface to dump and checksum our BIOS/CMOS information to verify whether or not an infection is present.
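For anyone who does end up with a firmware dump on disk, the checksum step itself is small. The Python sketch below is only an illustration under stated assumptions: it takes for granted that some other tool has already written the BIOS or card firmware to a file, and that you recorded a SHA-256 digest of a dump you trust from an earlier date. The function names are made up for this example. A mismatch says only that the bytes changed (a legitimate firmware update or a settings change can do that too), not that an infection is present.

```python
import hashlib


def sha256_of_file(path, chunk_size=65536):
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # iter() with a sentinel keeps reading until read() returns b"" at EOF.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def dump_matches_baseline(dump_path, baseline_hex):
    """True if the dump hashes to the previously recorded known-good digest."""
    return sha256_of_file(dump_path) == baseline_hex.strip().lower()
```

Calling `dump_matches_baseline` with the path of a fresh dump and the digest you wrote down earlier returns `True` or `False`; keeping that recorded digest somewhere the suspect machine cannot write to is what gives the comparison any value.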

The papers are out there, written by intelligent people. Locate them and treasure the knowledge on protecting your system, as much as you can.

When is the last time you verified your sound card’s firmware?

When is the last time you verified your graphics card’s firmware?

Do you know how? Is this ability hidden from you?

Challenge yourself to know more, by searching for the info I’ve highlighted in this post.

Pay careful attention to posts which are hidden via negative reps. It just might be that someone wishes to hide from you powerful information on protection of your system, and, like in the dark ages, knowledge.

@grub2

While I don’t dispute the existence of malware that is capable of infecting BIOS and firmware in hardware devices, I do question how prevalent it really is and what its general purpose would be. Typically malware’s primary purpose is monetary. I’ve been using computers for 14+ years on various networks and hardware equipment and have yet to experience any monetary loss or compromise of any kind. Either this type of malware simply didn’t exist on all those networks and equipment or its primary purpose is something other than monetary (espionage by the likes of the CIA/NSA etc.). Logic says the former.

And as to shielding cabling and TEMPEST attacks? Seriously? If you have to go that far, what do you have that is so important and who wants it? Are you trading in national security secrets or something other worldly nefarious? All this stuff sounds like something off Coast to Coast AM. Albeit, a very interesting and entertaining show. 😛

In the battle between malware and antivirus software, antivirus always loses because it is a step behind. Very nice report anyway.

nice info…thanks.