Last month’s post examining the top email-based malware attacks received so much attention and provocative feedback that I thought it was worth revisiting. I assembled it because victims of cyberheists rarely discover or disclose how they got infected with the Trojan that helped thieves siphon their money, and I wanted to test conventional wisdom about the source of these attacks.

While the data from the past month again shows why that wisdom remains conventional, I believe the subject is worth periodically revisiting because it serves as a reminder that these attacks can be stealthier than they appear at first glance.

The threat data draws from daily reports compiled by the computer forensics and security management students at the University of Alabama at Birmingham. The UAB reports track the top email-based threats from each day, and include information about the spoofed brand or lure, the method of delivering the malware, and links to Virustotal.com, which show the number of antivirus products that detected the malware as hostile (virustotal.com scans any submitted file or link using about 40 different antivirus and security tools, and then provides a report showing each tool’s opinion).

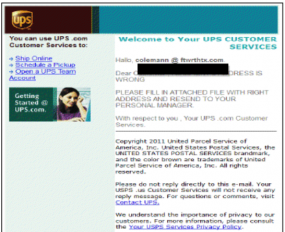

As the chart I compiled above indicates, attackers are switching the lure or spoofed brand quite often, but popular choices include such household names as American Airlines, Ameritrade, Craigslist, Facebook, FedEx, Hewlett-Packard (HP), Kraft, UPS and Xerox. In most of the emails, the senders spoofed the brand name in the “from:” field, and used embedded images stolen from the brands being spoofed.

The one detail most readers will probably focus on in this report is the atrociously low detection rate for these spammed malware samples. On average, antivirus software detected these threats about 22 percent of the time on the first day they were sent and scanned at virustotal.com. If we take the median score, the detection rate falls to just 17 percent. That’s actually down from last month’s average and median detection rates, 24.47 percent and 19 percent, respectively.
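To make the gap between the average and the median concrete, here is a minimal Python sketch. The `(detected, total)` pairs are made-up illustrative values, not figures from the UAB reports, and `detection_rate` is a hypothetical helper name. It shows how a single well-detected sample can pull the average above the median.

```python
from statistics import mean, median


def detection_rate(detected: int, total: int) -> float:
    """Percentage of scanners that flagged one sample."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100.0 * detected / total


# Hypothetical first-day results: (scanners that flagged it, scanners run).
# These numbers are illustrative only, not taken from the spreadsheet.
samples = [(3, 42), (5, 41), (7, 42), (9, 43), (25, 42)]

rates = [detection_rate(d, t) for d, t in samples]
avg_rate = mean(rates)
med_rate = median(rates)

print(f"average detection rate: {avg_rate:.1f}%")
print(f"median detection rate:  {med_rate:.1f}%")
```

Because one outlier is enough to lift the average, the median is arguably the better summary of what a typical sample looked like on day one.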

Unlike most of the poisoned missives we examined last month, which depended on recipients to click a link that takes them to a site equipped with an exploit kit designed to invisibly download and run malicious software, a majority of attacks in the past 30 days worked only when the recipient opened a zipped executable file.

I know many readers will probably roll their eyes and mutter that anyone with half a brain would know that you don’t open executable (.exe) files sent via email. But many versions of Windows will hide file extensions by default, and the attackers in these cases frequently change the icon associated with the zipped executable file so that it appears to be a Microsoft Word or PDF document. And, although I did not see this attack in the examples listed above, attackers could use the right-to-left override character, which Windows supports, to make a .exe file look like a .doc.
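For readers curious how the right-to-left override trick works, here is a rough Python sketch. The override is the Unicode control character U+202E; the file name below is a hypothetical example, and `has_bidi_control` and `real_extension` are names I made up for illustration. The idea is that the text after the override is displayed reversed, so a name that really ends in `.exe` can appear to end in `.doc`.

```python
RLO = "\u202e"  # Unicode RIGHT-TO-LEFT OVERRIDE

# Bidirectional control characters that have no business in a file name.
BIDI_CONTROLS = {
    "\u202a", "\u202b", "\u202c", "\u202d", "\u202e",
    "\u2066", "\u2067", "\u2068", "\u2069",
}


def has_bidi_control(filename: str) -> bool:
    """True if the name contains any bidirectional control character."""
    return any(ch in BIDI_CONTROLS for ch in filename)


def real_extension(filename: str) -> str:
    """Extension as stored on disk: the text after the last dot, lowercased."""
    _, dot, ext = filename.rpartition(".")
    return ext.lower() if dot else ""


# Hypothetical disguised name: the characters after the override are
# rendered right to left, so "cod.exe" is shown as "exe.doc".
disguised = "invoice" + RLO + "cod.exe"

print(repr(disguised))
print("contains bidi control:", has_bidi_control(disguised))
print("real extension:", real_extension(disguised))
```

A mail filter or a cautious user could treat any attachment whose name contains one of these characters as suspect, since legitimate file names almost never need them.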

Obviously, a warning that the user is about to run an executable file should pop up if he clicks a .exe file disguised as a Word document, but we all know how effective these warnings are (especially if the person already believes the file is a Word doc).

There was at least one interesting attack detailed above in which the malicious email was booby-trapped with an HTML message that would automatically redirect the recipient’s email client to a malicious exploit site if that person was unfortunate enough to have merely opened the missive in a client that had HTML reading enabled. Many Webmail providers now block rendering of most HTML content by default, but in email client software like Microsoft Outlook or Mozilla Thunderbird it is often enabled by default, or users enable it manually.

A copy of the spreadsheet pictured above is available in Microsoft Excel and PDF formats.

I would be interested in seeing what the common user experience is to get infected with one of these. Are infected users using Outlook or browser-based email? Do they get warnings when attempting to execute the unsigned executables? How many, how stern?

In my limited experience, I’ll see these things come to my Yahoo! email account, and the associated Norton scanning that Yahoo provides will blindly proclaim the attachment safe – its AV scanning is so ineffective, taking 3 or more days before it’ll recognize these attachments as a threat. I’m wondering if Hotmail account users are probably having similar experiences.

With my Gmail account, I’ll never see them appear, even in the Spam folder.

With Outlook, you’re only as good as your AV product (outside of your own smarts, which a good portion of the computing population is lacking), and based on the VT stats mentioned in the article, AV products aren’t very good in the critical first days of release.

You can’t really blame AV applications per se: crooks test their creations against the most popular applications specifically so heuristic detection will not pick up the content as malicious. The malware then has to be verified as malicious by researchers, which takes time.

Perhaps you can blame the *marketing* of AV products but then again the average user is going to avoid a product that tells the truth (it won’t protect you against new threats for some period) and go for the product that makes the claims we’re all so familiar with (the user is protected full stop).

So what can you do?

You should never assume a file is clean unless it comes from a safe source, such as a vendor’s website. You should certainly never assume a file is clean because multiple AV products don’t detect it as malware.

@EJ;

I can attest that Hotmail does an excellent job of blocking the images of all spoof emails I receive (which isn’t often). The emails look just like HP or PayPal emails from legitimate sources, but Hotmail always manages to block the executable objects and images from these emails. So generally I already know something is wrong.

I did get fooled by just one that got through, a few years ago, when the filters didn’t work as well – but LastPass caught the fact that the URL didn’t match the site, so of course I couldn’t log on to the fake/phishing site. Needless to say, I rarely follow links in any email now, even from trusted sources. It is just as easy to simply navigate directly to the site to follow up on email subjects.

When I do follow such email, it is in my honeypot lab, and I’m looking for trouble!

Does GMail actually block the transmission of all .exe files [which can be a real pain …], or does it only block those .exe files that are actually labeled as an .exe file?

Just ran a test using explore.exe. Blocked with a file type of .exe, but allowed it to be sent with a file type of .exx.

I had similar experiences with an in-house e-mail system a few jobs back. The mail administrators blocked .exe and .zip files, maybe other types. The need to share .zip files was large, so everyone I worked with learned to share .piz files.

A social engineer could do the same, giving the recipient a reasonable excuse why .exx and .piz files need to be renamed before being opened. Or zip files with passwords, because the information is “confidential”, to further confound virus scanning and blocking.
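The .exx and .piz workarounds above succeed because the filter trusts the file name. As a rough illustration of the alternative, here is a Python sketch that also looks at the first bytes of the attachment. This is not how GMail or any particular mail system works; `sniff_type` and `should_block` are hypothetical names, and the blocked-extension list is an assumption. The signatures themselves are standard: Windows executables begin with `MZ`, and ZIP archives begin with `PK\x03\x04` (or `PK\x05\x06` when empty).

```python
import os

BLOCKED_EXTENSIONS = {".exe", ".zip"}  # assumed policy, for illustration


def sniff_type(data: bytes) -> str:
    """Guess the file type from its leading bytes rather than its name."""
    if data[:2] == b"MZ":
        return "windows-executable"
    if data[:4] in (b"PK\x03\x04", b"PK\x05\x06"):
        return "zip"
    return "unknown"


def should_block(filename: str, data: bytes) -> bool:
    """Block on a forbidden extension OR a forbidden content signature."""
    ext = os.path.splitext(filename)[1].lower()
    return ext in BLOCKED_EXTENSIONS or sniff_type(data) != "unknown"


# A renamed executable and a renamed archive are both still caught.
print(should_block("explore.exx", b"MZ\x90\x00"))      # content says executable
print(should_block("archive.piz", b"PK\x03\x04rest"))  # content says zip
print(should_block("notes.txt", b"hello world"))       # neither name nor content
```

Two caveats: a two-byte check like `MZ` will occasionally flag harmless files that happen to start with those letters, and a password-protected zip is still recognizable as a zip but its contents cannot be inspected, which is exactly the point made above.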

I have to repeat what I wrote on the previous articles.

VT shows more or less only STATIC detections. It usually does not show cloud results, and it does not run all the heuristics because the metadata about the sample is missing; the emulation may not be deep enough, again because the metadata is missing; and sandboxing does not run.

Why should anybody care about the level of protection of static detections without taking the whole product’s protection into account? The wording of your sentences is hiding this – it basically just bashes AVs, without saying that it’s not a _real world scenario_, and I’m not sure that most of your readers are aware of that.

Counting the accurate, superexact numbers based on ridiculous assumptions is wrong.

See you next month with the exactly same comment. 😉

Okay, you have a point… but, most AV (in terms of installations) didn’t detect this stuff anyways – according to user reports to abusedesk. 🙁

@Brian:

So your “friends” are back again… Bredo > Grum > Cridex (I like Sophos and Fortinet still calling it Bredo) 😎

Btw: same picture in europe /w localized brands and language (DHL…), most times sending several mails per spamtrap each day… oO

Good to see you again Jindrich. I meant to ask you this earlier, but the last time we spoke you were arguing that the tests of products paid for by the industry put actual “live” detection rates at upwards of 90 percent.

Assuming you still believe that, what, in your view, accounts for the missing 70 percent? Heuristics?

Hi Brian,

yes, the combination of cloud prevalences, source url blocking, non-executable detections, metadata detections and/or possible behaviour analysis of sample run, all of these add up much higher – see also my reply to Neej.

I really can’t speculate how much higher, because this is the work of independent testers – and we are seeing 90%+ detections in such tests.

My beef with this reporting of yours is in the way it’s written. The static detections are simply _WORST POSSIBLE_ results, not ‘usual results’, and if the static detections are 20% or something, it does NOT mean that 80% of users who click the attachment will get infected. They won’t.

What’s the point of this stance you continue to take exactly?

Cloud data is irrelevant and largely a marketing exercise involving a buzzword.

Heuristics are also irrelevant unless you can evaluate it against detections that were actually malware and not false positives.

The points about metadata I don’t understand.

Sandboxing is a preventative measure and has nothing to do with detection rates.

You’re seriously mistaken if you believe that AV provides protection against new threats. “Professionals” don’t waste their time spreading malware that is detected by heuristics (again, making your point superfluous and silly); it is tested against a large number of popular AV applications and not against VT.

Neej, your opinions are the exact reason why I protest.

So, point by point:

a) Cloud is not irrelevant. If the file has low prevalence, AV may set much harder heuristics on it. In static testing, and for bandwidth purposes, and also not to skew the prevalences this is not done on VT. Very relevant.

b) Heuristics are great – we can fine tune them in a way which makes av testing for bad guys much harder (but that also applies for VT). Very relevant.

c) Metadata. If I have “single executable in zip, coming from email”, I have strong point which I can feed to heuristics, making it much more paranoid. When I get the same sample from VT under filename sample.exe, these metadata are missing. Very relevant.

d) Sandboxing/behavior analysis can check the sample’s behaviour dynamically and then kill it, informing the user. Very relevant.

The only way to correctly test AVs against such threats is simply to run the attachment on a real machine and then check whether it got through.

Checking this on VT is nonsense and it only manifests _WORST POSSIBLE_ results, not best results.

I assume you’ll attempt to respond in as politically correct a manner as possible, hoping not to offend the good people at VT. But for all intents and purposes, there is absolutely no way to read your critique of VT without coming to the conclusion that from your perspective scanning files with VT is an utterly pointless enterprise.

Nope. I think VT is a valuable service, but it must be used with knowledge when interpreting the results.

As I wrote somewhere else in this thread – VT does confirm the detection (or FP), but does not always confirm the miss (false negative) – exactly for the reasons I wrote.

So if on VT the detection rate is 20%, it DOES NOT mean that AV on a user’s machine will let through 80% of the infection attempts. That’s what I read in Brian’s article and that’s what’s wrong in Brian’s article.

If (as you argue) Virus Total scan detection rates shouldn’t be compared to actual AV apps (due to the inherent deficiencies of VT’s static file scanners), why should anyone waste their time with a VT scan?

This is what I get from your comments: At best, VT can help identify a false positive and “minimally” increase one’s confidence that a scanned file isn’t actually malicious. At worst, based on the mammoth gulf between Brian’s 17% detection rate and your 90% detection rate, VT is a wholly inadequate arbiter of safe vs. unsafe and is most likely only providing its users with a false sense of security.

“why should anyone waste their time with a VT scan?”

virustotal is a useful *starting* point in investigating a sample. if it tells you it found something, that’s knowledge you didn’t have before.

if it tells you it didn’t find anything, that doesn’t mean there isn’t anything there, nor does it mean that the products it’s using wouldn’t have found something under other circumstances.

fundamentally, however, virustotal is geared towards enlightening you about samples, not enlightening you about anti-virus products. anyone who tries to infer something about AV products from virustotal results is making an egregious error.

“At worst, based on the mammoth gulf between Brian’s 17% detection rate and your 90% detection rate, VT is wholly inadequate arbiter of safe vs. unsafe and is most likely only providing its users with a false sense of security.”

virustotal is definitely NOT an adequate arbiter of safe vs. unsafe, and if anyone told you different they were filling your head with lies.

this is a classic problem of people with shallow knowledge passing on exaggerated and incorrect information about some security technology to others and that exaggeration taking on a life of its own.

“anyone who tries to infer something about AV products from virustotal results is making an egregious error.”

Well. I guess that’s a shot across Brian’s bow. Because that’s exactly what Brian has done in this article and the previous article.

yes, well, i guess it’s not the first shot:

http://anti-virus-rants.blogspot.com/2011/04/its-not-detection-rate.html

i hope he takes it as constructive criticism. it’s certainly not meant to be personal – and i’m sure he knows how to reach me if he feels it was.

“anyone who tries to infer something about AV products from virustotal results is making an egregious error.” I think this is an interesting debate, but I have to respectfully disagree with this take, for the simple reason that other AV tests available to the public run on clean images with up-to-date patches that aren’t running a multiplicity of other products, applications, services, etc., which is rarely the case in practice. There is no accounting for conflicting processes and applications, registry errors, and the like, all of which lead to AV products having their capabilities diminished. Here is a typical scenario: a user on a PC that is a couple of years old has a job to do, a deadline to meet, and a family they want to come home to for dinner. Their computer pops an error message, runs slow, crashes, whatever. The last thing they want to do is log a help-desk ticket and patiently wait for someone to get back to them to resolve the issue. They try to troubleshoot the problem and invariably will start tinkering with the AV settings, turning features and functionality off one at a time to try and “fix” their issue, complete their work and go home. Rarely do they go back and turn those items back on. I know because I literally see it every single day. I’m not saying this happens with every user or even a majority of users, but it does happen on a regular basis. In some cases AV is even the culprit or at least part of the issue, and then you have administrators disabling or altering functionality as a default setting. If you have responsibility for protecting an enterprise there is tremendous value in knowing both delta points, the worst-case and best-case scenarios, because the reality will be that you have AV deployments at both extremes with the average landing somewhere in the middle. Anyone who says otherwise, in my humble estimation, is out of touch with what is happening in the trenches. In any case, it’s kind of a silly point to quibble over.
Until AV can deliver a six-sigma level of detection rate for zero-day threats in even best case scenario deployments the most you can hope for from your AV solution is that it can stem the tide of nasty stuff getting through.

@bubba: (had to reply to myself because there was no reply button on your comment – guess this has gone too deep)

testing orgs test under ideal circumstances because the metric they are interested in is what level of protection the product is *capable* of providing.

measuring what level of protection a product actually provides when misconfigured is a fool’s errand because there are too many different ways to misconfigure the products which consequently have too many different possible outcomes on the products’ protective capabilities.

furthermore, the configuration used by virustotal does not match any misconfigured environment you’re likely to see in a real user’s system (virustotal uses command line tools, how many users have you seen do that?) . so even if we were going to try to measure protection in a typical misconfigured environment, virustotal would STILL be a bad analog and not give us an accurate measure.

i reiterate, the people who make virustotal say you shouldn’t use virustotal for testing AV. they’ve been saying it for at least 5 years now. they, more than anyone here, know what is and is not an appropriate use for their service. why is it so hard for people to accept this?

Jindroush is correct, Virustotal is a poor measure of the effectiveness of av products. I used to work at Symantec, so I had access to internal and 3rd party tests of detection rates.

VT runs each file through the static file scanner provided by each AV vendor. However, 5-6 years ago many virus writers started focusing on rapidly mutating malware. So the AV companies, in turn, focused on non-static ways of detecting threats – heuristics, IPS, statistics looking at source (site reputation), frequency and other metrics (file reputation), spam filtering . . . . none of which are kicked off by submitting files to VT.

The point is, stop using Virustotal – to compare AV products. It’s like comparing the safety of cars by just looking at car size – you really need to look at air bags, braking distance, crumple zones . . . . to get the full picture.

Who’s comparing AV products?

Too bad those with even a full brain click on the executable links anyway. <_<

Jindroush approaches things from the AV vendor point of view. I understand where he is coming from, as I am co-founder of Sunbelt Software which built a new antivirus product from scratch (VIPRE). So perhaps the averages and the median may be too low, but even if they would hover around 50% instead of 20%, the detection rates are still appalling. It makes a very strong case for intensified security awareness training, as the end user is the weak link in IT security. Kevin Mitnick and I released such a course a few weeks ago.

Stu can you provide some more info on your security awareness training course?

Sure. Late last year there was an article in the Wall Street Journal about social engineering. Both Kevin and my new company were mentioned in the article so I just approached him about creating a course together. He had been wanting to do that for 10 years, so over a period of 8 months I had the rare opportunity to work with him and get a brain dump of 30 years of hacking and social engineering. We distilled that into a course which we released a few weeks ago: Kevin Mitnick Security Awareness Training. Here is the link:

http://www.knowbe4.com/products/kevin-mitnick-security-awareness-training/

Warm regards,

Stu

Very nice. I especially like the simulated attacks. If you can find a way to send an electric shock to the employee when they click on the wrong thing, sales would skyrocket. 🙂

why are we paying attention at all to test results that use virustotal?

have we not gone over the fact that virustotal misrepresents av detection capabilities over and over again?

is it not enough for hispasec themselves to call that type of testing into question?

is the rule of thumb that “virustotal is for testing samples, not AV” really that hard to follow?

I’d not blame it on VT. It’s misinterpreted by others. Because the results mean much more “if you see detection, it’s detected”, but not “if you don’t see detection, it’s a miss”.

i don’t blame it on virustotal either, but the fact remains that virustotal’s results do not represent the true protective capabilities of the products it uses under the hood. hispasec themselves acknowledge this. i don’t understand why so many other people have a problem getting this.

I talk to real life cyberheist victims every week, companies that have lost well into six figures because some employee clicked a link they shouldn’t have. How many of these victims were running antivirus? All of them. Guess how many had detections when they infected their systems? None. I’m publishing a story later this week about a victim that clicked a link 4 days after it was emailed to her and her company’s AV *still* didn’t detect it.

I continue to look for and encourage several different, unbiased researchers who aren’t getting paid to do the kind of testing needed to see just how much attention any of us should pay to what the antivirus companies — or their apologists — say about anything. I think I’m close to making that happen with a similar month-long study of this same type of malware. Stay tuned.

For anyone interested, about two years ago I wrote about a study from NSS Labs, which actually did do some of the testing I’ve been advocating, but on a shorter-term scale. They found exactly the dynamic I describe and which is obvious to just about anyone who’s dealt with real-life infections.

https://krebsonsecurity.com/2010/06/anti-virus-is-a-poor-substitute-for-common-sense/

“Most of the products took an average of more than 45 hours — nearly two days — to detect the latest threats.

Some in the anti-virus industry have taken issue with NSS’s tests because the company refuses to show whether it is adhering to emerging industry standards for testing security products. The Anti-Malware Testing Standards Organization (AMTSO), a cantankerous coalition of security companies, anti-virus vendors and researchers, have cobbled together a series of best practices designed to set baseline methods for ranking the effectiveness of security software. The guidelines are meant in part to eliminate biases in testing, such as regional differences in anti-virus products and the relative age of the malware threats that they detect.

NSS was a member of the AMTSO until last fall, when the company parted ways with the group. NSS’s Moy said the standards focus on fairness to the anti-virus vendors at the expense of showing how well these products actually perform in real world tests.

“We test at zero hour, and we have a huge funnel where we subject all of the [anti-virus] vendors to the same malicious URLs at the same time,” Moy said. “Generally, the other industry tests are testing days weeks and months after malware samples have been on the Internet.”

—

You can say Virustotal is only 25 percent right, and that your products are 90 percent successful, but it’s your word against…what? A series of tests paid for by the antivirus industry and done by an industry that depends directly on those partners to survive is flawed at the start. When the rules are set and agreed upon by the antivirus industry about how the industry can be tested and how it can’t and what’s fair and what’s not, again that’s a fail. Don’t misunderstand me: I get why the industry competitors would agree this is all fair and in everyone’s best interest (the industry’s anyway), but you have to be blind to not see that these tests lack a certain amount of credibility, if not a critical amount — especially when their conclusions seem to fly in the face of what the rest of the world is experiencing.

If there is a point I’ve been trying to get across to readers, it’s not that antivirus is completely ineffective, but that users should learn to behave as though it is. And yet we still see major vendors marketing their products as “Total Protection.” It’s hard to imagine another industry whose entire business model is predicated on the fact that the product the customer is consuming is going to fail some unacceptably large percent of the time before it starts to work for the rest of them.

wow. the blogpost length of that reply, coupled with the fact that the original version of it never even mentioned virustotal (even though that has been my sole concern) makes me feel like i’ve struck a nerve.

if that’s the case then i’m sorry. as i said in another recent comment here none of this is meant to be personal.

i’m not going to argue that the true detection rates of products is 90% or greater – i don’t care because the very concept of a “detection rate” is obsolete in the face of whole-product testing that includes products’ generic defenses that are incapable of performing “detection”.

my sole concern up until now has been that virustotal cannot be used to infer anything about the quality of individual av products or of av as a whole. hispasec (the very people who make virustotal) have said that virustotal results can’t be used this way. they more than anyone should know what virustotal can and can’t do.

if you want to pay attention to or promote NSS tests then by all means do so. if you are faced with a choice between an NSS test and *any* test based on virustotal results, choose the NSS test. NSS may have methodological problems (all testing orgs do, none can ever be perfect) but when they were still part of AMTSO a review of their testing performed by AMTSO was very positive (save for a concern about testing only parts of a product rather than the whole product in its entirety), so an NSS test is almost certainly going to be orders of magnitude better than one based on virustotal results.

Although email is, in effect, hardly routed in one particular nation, I’d be interested to know if readers from Europe and the UK feel that information security threats differ from those in the US. Is it time we faced up to our differing standards?

SC Magazine is gathering your opinions in this 1 minute survey:

https://www.surveymonkey.com/s/informationsecurityineurope

I’ll look forward to hearing your thoughts,

Thanks!

Bottom line: Any malware distributor worth his salt has purchased most of the existing AV products, and tests his malware against all of them until it passes undetected. This is not rocket science.

There are even programs which will take a piece of malware, run it against an AV, check the AV rule being applied that detected the malware, and REWRITE the malware to evade the check! There was a demo at one of the infosec cons.

Sure, for large-scale spread malware, eventually it’s going to be detected – and then modified – and then detected, etc. until the malware writer quits and goes on to a new piece of malware.

But a TARGETED malware which is tested as per above is going to go undetected for just that length of time it takes to penetrate the target. And that makes AV – even with advanced HIPS – USELESS for that sort of risk.

The approach that must be taken there is to whitelist everything that has to run and block everything else. The problem with existing whitelist products is that they leave open major holes like allowing PowerShell scripts to run. I’ve seen a demo at a con which shows that whitelisting doesn’t always work due to flaws in existing products.
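As a very rough sketch of the whitelisting idea, the Python below approves programs by the SHA-256 hash of their contents and refuses everything else. The `Allowlist` class and the sample byte strings are hypothetical, and real whitelisting products are considerably more involved than this.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex SHA-256 digest of a file's contents."""
    return hashlib.sha256(data).hexdigest()


class Allowlist:
    """Default-deny: only content whose hash was approved may run."""

    def __init__(self, approved_files):
        self._approved = {sha256_hex(blob) for blob in approved_files}

    def may_run(self, data: bytes) -> bool:
        return sha256_hex(data) in self._approved


# Hypothetical stand-ins for the bytes of real program files.
word_processor = b"bytes of an approved word processor"
script_host = b"bytes of an approved script interpreter"
unknown_attachment = b"bytes of something that arrived by email"

policy = Allowlist([word_processor, script_host])

print(policy.may_run(word_processor))      # approved content
print(policy.may_run(unknown_attachment))  # never approved, so denied
```

Note that the sketch has the same hole described above: once a script interpreter is on the approved list, the check says nothing about the scripts that interpreter is later asked to run.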

What needs to happen is that end users need the sort of visibility into their host machines that is recommended these days for networks, i.e., be able to see anything and everything, baseline everything, and thus know when something is out of whack. Power users could use tools like the various process analyzers and such to do this, but the average end user has nothing he can use without requiring a lot of technical knowledge.

So the average end user needs to have a much larger dose of paranoia than they currently evince.

Funny you should mention whitelisting. I just blogged about that, mentioning that I wrote a whitepaper about that recently.

“First of all, I have no dog in this fight, and no product to sell you. But I have seen the antivirus industry from the inside out, and I have paid a lot of attention to the Virusbulletin website for a long time.” You should have a look at the graphs of “malware” vs. “goodware” over the last 10 years, and read my whitepaper:

http://blog.knowbe4.com/need-to-protect-a-critial-machine-use-whitelisting-not-antivirus/

Warm regards,

Stu

Leaving alone the fact that Brian wrote about spamvertized malware, and that most of us might agree that there’s no reason to email executable files anyway, “whitelisting” points directly to Microsoft’s responsibility to certify third parties’ software, right?

AV is an old model anyway… I strongly feel like if you give the end user the opportunity to mess up their system it will happen, even ‘good’ websites get compromised often enough that you cannot count on simply not clicking risky links as a way to keep your machine clean.

I wish Microsoft would design their next version of windows or even a side version more like a kiosk – you build a gold image and keep it that way (including strong sandboxing and application whitelisting) which would go a long ways towards keeping end users more secure… there would still be memory/physical/network level threats to deal with but you’d think after 15 years of windows letting users mess up everything under the sun they would take steps to address it.

They did. Your proposal sounds like the old Steady State. MS dumped it with the advent of Vista. Third party software companies did a better job anyway.

Your idea also comes full circle to what Brian already suggested – which is the LiveCD. That is a golden image that cannot be compromised. Other session threats are not a problem upon reboot. If the bank’s web site is compromised, all bets are pretty much off – wouldn’t you say?

The problem with relying on heuristics is that once you can get even a relatively innocent program on a user’s computer, you have free rein to use social engineering to convince them to download the real payload and override all the AV program popups.

the real problem with relying on heuristics is that it only ever happens in people’s minds.

vendors aren’t relying on heuristics. they have heuristics, and when they work great, but when they don’t that’s not the only trick vendors have up their sleeves.

not everything gets stopped, nor is everything going to get stopped ever in the future. it’s just not going to happen. as you yourself mention, social engineering is still an avenue of attack and there is no technical protection that can stop people from being tricked into turning their protection off.

The only protection to prevent it is not giving them the ability in the first place.

i’m sure that will go over real well the next time a technical foul-up bricks systems and people can’t avoid it because they can’t turn off the errant protection software. taking control away from the machine owner just isn’t going to fly.

Human nature is to click things. You can spend 5 years trying to train people not to and still have it happen, or you can just make it so that anything a click might spawn is blocked. CEOs are great at demanding a computer with no restrictions; they are also good at messing them up.